Enabling data persistence in microservices

AWS Prescriptive Guidance

Copyright © 2024 Amazon Web Services, Inc. and/or its affiliates. All rights reserved.

AWS Prescriptive Guidance Enabling data persistence in microservices

AWS Prescriptive Guidance: Enabling data persistence in microservices


Amazon's trademarks and trade dress may not be used in connection with any product or service that is not Amazon's, in any manner that is likely to cause confusion among customers, or in any manner that disparages or discredits Amazon. All other trademarks not owned by Amazon are the property of their respective owners, who may or may not be affiliated with, connected to, or sponsored by Amazon.


Table of Contents

Introduction
  Targeted business outcomes
Patterns for enabling data persistence
  Database-per-service pattern
  API composition pattern
  CQRS pattern
  Event sourcing pattern
    Amazon Kinesis Data Streams implementation
    Amazon EventBridge implementation
  Saga pattern
  Shared-database-per-service pattern
FAQ
  When can I modernize my monolithic database as part of my modernization journey?
  Can I keep a legacy monolithic database for multiple microservices?
  What should I consider when designing databases for a microservices architecture?
  What is a common pattern for maintaining data consistency across different microservices?
  How do I maintain transaction automation?
  Do I have to use a separate database for each microservice?
  How can I keep a microservice's persistent data private if they all share a single database?
Resources
  Related guides and patterns
  Other resources
Document history
Glossary
  #, A, B, C, D, E, F, G, H, I, L, M, O, P, Q, R, S, T, U, V, W, Z

Enabling data persistence in microservices

Tabby Ward and Balaji Mohan, Amazon Web Services (AWS)

December 2023 (document history)

Organizations constantly seek new processes to create growth opportunities and reduce time to market. You can increase your organization's agility and efficiency by modernizing your applications, software, and IT systems. Modernization also helps you deliver faster and better services to your customers.

Application modernization is a gateway to continuous improvement for your organization, and it begins by refactoring a monolithic application into a set of independently developed, deployed, and managed microservices. This process has the following steps:

• Decompose monoliths into microservices – Use patterns to break down monolithic applications into microservices.

• Integrate microservices – Integrate the newly created microservices into a microservices architecture by using Amazon Web Services (AWS) serverless services.

• Enable data persistence for microservices architecture – Promote polyglot persistence among your microservices by decentralizing their data stores.

Although you can use a monolithic application architecture for some use cases, modern application features often don't work in a monolithic architecture. For example, the entire application can't remain available while you upgrade individual components, and you can't scale individual components to resolve bottlenecks or hotspots (relatively dense regions in your application's data). Monoliths can become large, unmanageable applications, and even small changes require significant effort and coordination among multiple teams.

Legacy applications typically use a centralized monolithic database. This makes schema changes difficult, creates technology lock-in in which vertical scaling is the only way to respond to growth, and introduces a single point of failure. A monolithic database also prevents you from building the decentralized and independent components required for implementing a microservices architecture.

Previously, a typical architectural approach was to model all user requirements in one relational database that was used by the monolithic application. Popular relational database architecture supported this approach. Application architects usually designed the relational schema at the earliest stages of the development process, built a highly normalized schema, and then sent it to the developer team. However, this meant that the database drove the data model for the application use case, instead of the other way around.

By choosing to decentralize your data stores, you promote polyglot persistence among your microservices. You can then choose each data storage technology based on the data access patterns and other requirements of your microservices. Each microservice has its own data store and can be scaled independently. Its schema changes have low impact, and its data is accessed only through the microservice's API. Breaking down a monolithic database is not easy, and one of the biggest challenges is structuring your data to achieve the best possible performance. Decentralized polyglot persistence also typically results in eventual data consistency. Other potential challenges that require a thorough evaluation include data synchronization during transactions, transactional integrity, data duplication, and joins and latency.

This guide is for application owners, business owners, architects, technical leads, and project managers. The guide provides the following six patterns to enable data persistence among your microservices:

• Database-per-service pattern

• API composition pattern

• CQRS pattern

• Event sourcing pattern

• Saga pattern

  • For steps to implement the saga pattern by using AWS Step Functions, see the pattern Implement the serverless saga pattern by using AWS Step Functions on the AWS Prescriptive Guidance website.

• Shared-database-per-service pattern

The guide is part of a content series that covers the application modernization approach recommended by AWS. The series also includes:

• Strategy for modernizing applications in the AWS Cloud

• Phased approach to modernizing applications in the AWS Cloud

• Evaluating modernization readiness for applications in the AWS Cloud

• Decomposing monoliths into microservices


• Integrating microservices by using AWS serverless services

Targeted business outcomes

Many organizations find that monolithic applications, databases, and technologies hold back innovation and improvements to the user experience. Legacy applications and databases reduce your options for adopting modern technology frameworks, and they constrain your competitiveness and innovation. However, when you modernize applications and their data stores, they become easier to scale and faster to develop. A decoupled data strategy improves fault tolerance and resiliency, which helps accelerate the time to market for your new application features.

You should expect the following six outcomes from promoting data persistence among your microservices:

• Remove legacy monolithic databases from your application portfolio.

• Improve fault tolerance, resiliency, and availability for your applications.

• Shorten your time to market for new application features.

• Reduce your overall licensing expenses and operational costs.

• Take advantage of open-source solutions (for example, MySQL or PostgreSQL).

• Build highly scalable and distributed applications by choosing from more than 15 purpose-built database engines on the AWS Cloud.

Patterns for enabling data persistence

The following patterns are used to enable data persistence in your microservices.

Topics

• Database-per-service pattern

• API composition pattern

• CQRS pattern

• Event sourcing pattern

• Saga pattern

• Shared-database-per-service pattern

Database-per-service pattern

Loose coupling is the core characteristic of a microservices architecture. With the database-per-service pattern, each individual microservice independently stores and retrieves information from its own data store. You choose the most appropriate data store (for example, a relational or non-relational database) for each microservice's application and business requirements. As a result, microservices don't share a data layer, and changes to one microservice's database don't affect the others. Other microservices can't access a microservice's data store directly; persistent data is accessed only through APIs. Decoupling data stores also improves the resiliency of your overall application and ensures that no single database becomes a single point of failure.

In the following illustration, different AWS databases are used by the "Sales," "Customer," and "Compliance" microservices. These microservices are deployed as AWS Lambda functions and accessed through an Amazon API Gateway API. AWS Identity and Access Management (IAM) policies ensure that data is kept private and not shared among the microservices. Each microservice uses a database type that meets its individual requirements; for example, "Sales" uses Amazon Aurora, "Customer" uses Amazon DynamoDB, and "Compliance" uses Amazon Relational Database Service (Amazon RDS) for SQL Server.
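The following Python is a minimal sketch of how an IAM policy can keep one microservice's data private. It builds a least-privilege identity policy for a hypothetical "Customer" Lambda function that allows access only to that service's own DynamoDB table. The Region, account ID, table name, and the specific set of allowed actions are illustrative assumptions. The "Sales" and "Compliance" functions would get analogous policies scoped to their own Aurora and RDS resources.

```python
import json


def table_only_policy(region: str, account_id: str, table_name: str) -> dict:
    """Build an IAM policy document that allows access to a single DynamoDB table."""
    table_arn = f"arn:aws:dynamodb:{region}:{account_id}:table/{table_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "OwnTableOnly",
                "Effect": "Allow",
                # Assumed set of item-level actions this service needs; adjust per service.
                "Action": [
                    "dynamodb:GetItem",
                    "dynamodb:PutItem",
                    "dynamodb:UpdateItem",
                    "dynamodb:Query",
                ],
                # Only this service's table and its indexes; no other table is listed,
                # so requests to other services' tables are implicitly denied.
                "Resource": [table_arn, f"{table_arn}/index/*"],
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical values for the "Customer" microservice's execution role.
    print(json.dumps(table_only_policy("us-east-1", "111122223333", "Customer"), indent=2))
```

Because IAM denies anything that isn't explicitly allowed, leaving other services' tables out of `Resource` is enough to keep them unreachable from this function's role.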


You should consider using this pattern if:

• Loose coupling is required between your microservices.

• Microservices have different compliance or security requirements for their databases.

• More granular control of scaling is required.

The database-per-service pattern has the following disadvantages:

• It might be challenging to implement complex transactions and queries that span multiple microservices or data stores.

• You have to manage multiple relational and non-relational databases.

• Each distributed data store is subject to the CAP theorem. A store can guarantee at most two of consistency, availability, and partition tolerance, so you must decide which trade-off each data store makes.


Note

If you use the database-per-service pattern, you must deploy the API composition pattern or the CQRS pattern to implement queries that span multiple microservices.

API composition pattern

This pattern uses an API composer, or aggregator, to implement a query by invoking the individual microservices that own the data. It then combines the results by performing an in-memory join. The following diagram illustrates how this pattern is implemented.

The diagram shows the following workflow:

1. An API gateway serves the "/customer" API, which has an "Orders" microservice that tracks customer orders in an Aurora database.

2. The "Support" microservice tracks customer support issues and stores them in an Amazon OpenSearch Service database.

3. The "CustomerDetails" microservice maintains customer attributes (for example, address, phone number, or payment details) in a DynamoDB table.

4. The "GetCustomer" Lambda function calls the APIs for these microservices and performs an in-memory join on the data before returning it to the requester. The requester can then retrieve customer information in a single network call to the user-facing API, which keeps the interface simple.
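As a rough sketch of the "GetCustomer" composer, the following Python calls three services concurrently and joins their results in memory on the customer ID. The `get_orders`, `get_support_issues`, and `get_customer_details` functions are hypothetical stand-ins for the HTTP calls to each microservice's API, and the field names are assumed for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict, List


# Hypothetical stand-ins for calls to the "Orders", "Support", and "CustomerDetails" APIs.
def get_orders(customer_id: str) -> List[Dict[str, Any]]:
    return [{"orderId": "o-1", "customerId": customer_id, "total": 42.0}]


def get_support_issues(customer_id: str) -> List[Dict[str, Any]]:
    return [{"ticketId": "t-7", "customerId": customer_id, "status": "open"}]


def get_customer_details(customer_id: str) -> Dict[str, Any]:
    return {"customerId": customer_id, "address": "123 Any Street"}


def get_customer(customer_id: str) -> Dict[str, Any]:
    """Compose one response from three services with an in-memory join on customer_id."""
    calls: Dict[str, Callable[[str], Any]] = {
        "details": get_customer_details,
        "orders": get_orders,
        "supportIssues": get_support_issues,
    }
    # Call the services concurrently so overall latency tracks the slowest call.
    with ThreadPoolExecutor(max_workers=len(calls)) as pool:
        futures = {name: pool.submit(fn, customer_id) for name, fn in calls.items()}
        # result() re-raises any service error, so one failing service fails the whole query.
        results = {name: f.result(timeout=5) for name, f in futures.items()}
    # In-memory join: nest orders and support issues under the customer record.
    composed = dict(results["details"])
    composed["orders"] = results["orders"]
    composed["supportIssues"] = results["supportIssues"]
    return composed


print(get_customer("c-123"))
```

A failure or timeout in any one service causes the whole composed response to fail. This is the availability disadvantage described next, and real composers often add per-service fallbacks for it.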

The API composition pattern offers the simplest way to gather data from multiple microservices. However, the API composition pattern has the following disadvantages:

• It might not be suitable for complex queries and large datasets that require in-memory joins.

• Your overall system becomes less available as you increase the number of microservices connected to the API composer, because a failure in any one of them can fail the composed query.

• Increased database requests create more network traffic, which increases your operational costs.

CQRS pattern

The command query responsibility segregation (CQRS) pattern separates the data mutation, or the command part of a system, from the query part. You can use the CQRS pattern to separate updates and queries if they have different requirements for throughput, latency, or consistency. As the following diagram shows, the CQRS pattern splits the application into two parts: the command side and the query side. The command side handles create, update, and delete requests. The query side handles read requests against the read data store, for example by using read replicas.

The diagram shows the following process:

1. The business interacts with the application by sending commands through an API. Commands are actions such as creating, updating, or deleting data.

2. The application processes the incoming command on the command side. This involves validating, authorizing, and running the operation.

3. The application persists the command's data in the write (command) database.

4. After the command is stored in the write database, events are triggered to update the data in the read (query) database.

5. The read (query) database processes and persists the data. Read databases are optimized for specific query requirements.

6. The business interacts with read APIs to send queries to the query side of the application.

7. The application processes the incoming query on the query side and retrieves the data from the read database.
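The following is a minimal in-process sketch of the preceding steps. Plain dictionaries stand in for the write and read databases, and the `OrderCreated` event and the "total spent per customer" read model are hypothetical. For brevity, events are delivered synchronously here. In a real deployment they travel asynchronously (for example, through a stream), which is why the read side is only eventually consistent.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class OrderCreated:
    order_id: str
    customer_id: str
    total: float


class CommandSide:
    """Validates commands, persists them to the write store, and emits events."""

    def __init__(self, publish: Callable[[OrderCreated], None]) -> None:
        self.write_db: Dict[str, dict] = {}  # stand-in for the write (command) database
        self.publish = publish

    def create_order(self, order_id: str, customer_id: str, total: float) -> None:
        if total <= 0:  # step 2: validate the command
            raise ValueError("total must be positive")
        self.write_db[order_id] = {"customer_id": customer_id, "total": total}  # step 3
        self.publish(OrderCreated(order_id, customer_id, total))  # step 4


class QuerySide:
    """Maintains a denormalized read model shaped for one query."""

    def __init__(self) -> None:
        self.read_db: Dict[str, float] = {}  # stand-in for the read (query) database

    def on_order_created(self, event: OrderCreated) -> None:  # step 5
        self.read_db[event.customer_id] = self.read_db.get(event.customer_id, 0.0) + event.total

    def total_spent(self, customer_id: str) -> float:  # steps 6 and 7
        return self.read_db.get(customer_id, 0.0)


query = QuerySide()
command = CommandSide(publish=query.on_order_created)
command.create_order("o-1", "c-1", 20.0)
command.create_order("o-2", "c-1", 5.5)
print(query.total_spent("c-1"))  # 25.5
```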

You can implement the CQRS pattern by using various combinations of databases, including:

• Using relational database management system (RDBMS) databases for both the command and the query side. Write operations go to the primary database and read operations can be routed to read replicas. Example: Amazon RDS read replicas

• Using an RDBMS database for the command side and a NoSQL database for the query side. Example: Modernize legacy databases using event sourcing and CQRS with AWS DMS

• Using NoSQL databases for both the command and the query side. Example: Build a CQRS event store with Amazon DynamoDB

• Using a NoSQL database for the command side and an RDBMS database for the query side, as discussed in the following example.

In the following illustration, a NoSQL data store, such as DynamoDB, is used on the command side to optimize write throughput and provide flexible query capabilities. This achieves high write scalability for workloads whose write access patterns are well defined. A relational database, such as Amazon Aurora, is used on the query side to provide complex query functionality. A DynamoDB stream sends data changes to a Lambda function that updates the Aurora table.
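The following sketch shows the projection logic that the stream-triggered Lambda function might run. It reads DynamoDB stream records (`eventName` of `INSERT`, `MODIFY`, or `REMOVE`, with typed attributes such as `{"S": ...}` and `{"N": ...}`) and upserts or deletes rows in a relational table. It assumes the stream is configured to include new images. Python's `sqlite3` stands in for Aurora so that the example runs locally, and the `orders` table and its `orderId`, `customerId`, and `total` attributes are hypothetical. A real handler would take `(event, context)` and open its database connection outside the handler.

```python
import sqlite3


def from_attr(value: dict):
    """Decode the DynamoDB attribute types this sketch uses: S (string) and N (number)."""
    if "S" in value:
        return value["S"]
    if "N" in value:
        return float(value["N"])  # float for brevity; prefer Decimal for money
    raise ValueError(f"unsupported attribute type: {value}")


def apply_stream_records(event: dict, conn: sqlite3.Connection) -> None:
    """Project DynamoDB stream records onto a relational read table."""
    for record in event["Records"]:
        change = record["dynamodb"]
        if record["eventName"] in ("INSERT", "MODIFY"):
            item = {k: from_attr(v) for k, v in change["NewImage"].items()}
            # Upsert so that retried or replayed records don't create duplicates.
            conn.execute(
                "INSERT OR REPLACE INTO orders (order_id, customer_id, total) VALUES (?, ?, ?)",
                (item["orderId"], item["customerId"], item["total"]),
            )
        elif record["eventName"] == "REMOVE":
            order_id = from_attr(change["Keys"]["orderId"])
            conn.execute("DELETE FROM orders WHERE order_id = ?", (order_id,))
    conn.commit()


# Usage with an in-memory database and one sample INSERT record.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id TEXT PRIMARY KEY, customer_id TEXT, total REAL)")
sample = {"Records": [{
    "eventName": "INSERT",
    "dynamodb": {
        "Keys": {"orderId": {"S": "o-1"}},
        "NewImage": {"orderId": {"S": "o-1"}, "customerId": {"S": "c-1"}, "total": {"N": "42.5"}},
    },
}]}
apply_stream_records(sample, conn)
print(conn.execute("SELECT * FROM orders").fetchall())  # [('o-1', 'c-1', 42.5)]
```

Against Aurora, the upsert would use the engine's own syntax instead: `INSERT ... ON DUPLICATE KEY UPDATE` for MySQL or `INSERT ... ON CONFLICT` for PostgreSQL.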

Implementing the CQRS pattern with DynamoDB and Aurora provides these key benefits:

• DynamoDB is a fully managed NoSQL database that can handle high-volume write operations, and Aurora offers high read scalability for complex queries on the query side.

• DynamoDB provides low-latency, high-throughput access to data, which makes it well suited to handling command and update operations, and Aurora performance can be fine-tuned and optimized for complex queries.

• Both DynamoDB and Aurora offer serverless options, which let you pay only for the resources you use.

• DynamoDB and Aurora are fully managed services, which reduces the operational burden of managing databases, backups, and scaling.

You should consider using the CQRS pattern if:

• You implemented the database-per-service pattern and want to join data from multiple microservices.

• Your read and write workloads have separate requirements for scaling, latency, and consistency.

• Eventual consistency is acceptable for the read queries.

Important

The CQRS pattern typically results in eventual consistency between the data stores.

Event sourcing pattern

The event sourcing pattern is typically used with the CQRS pattern to decouple read workloads from write workloads and to optimize for performance, scalability, and security. Data is stored as a series of events instead of as direct updates to data stores. Microservices replay events from an event store to compute the appropriate state of their own data stores. The pattern provides visibility into the current state of the application and additional context for how the application arrived at that state. The event sourcing pattern works effectively with the CQRS pattern because data can be reproduced for a specific event, even if the command and query data stores have different schemas.

By choosing this pattern, you can identify and reconstruct the application's state for any point in time. This produces a persistent audit trail and makes debugging easier. However, data becomes eventually consistent, which might not be appropriate for some use cases.
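To show how replay reconstructs state for any point in time, here is a minimal sketch with an in-memory, append-only event store for a single account. The `Credited` and `Withdrawn` event types and the sequence-number scheme are hypothetical. A production event store would be durable and shared (for example, the Kinesis or EventBridge setups that follow).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Event:
    sequence: int  # position in the stream, assigned by the store
    kind: str      # hypothetical event types: "Credited" or "Withdrawn"
    amount: int    # in cents, to avoid floating-point rounding


class EventStore:
    """Append-only event log: events are added, never updated or deleted."""

    def __init__(self) -> None:
        self._events: List[Event] = []

    def append(self, kind: str, amount: int) -> Event:
        event = Event(len(self._events) + 1, kind, amount)
        self._events.append(event)
        return event

    def replay(self, up_to: Optional[int] = None) -> List[Event]:
        """Return events in order, optionally stopping at a given sequence number."""
        return [e for e in self._events if up_to is None or e.sequence <= up_to]


def balance(events: List[Event]) -> int:
    """Rebuild state by folding over the events from the beginning."""
    total = 0
    for e in events:
        if e.kind == "Credited":
            total += e.amount
        elif e.kind == "Withdrawn":
            total -= e.amount
    return total


store = EventStore()
store.append("Credited", 10_000)
store.append("Withdrawn", 2_500)
store.append("Credited", 500)
print(balance(store.replay()))         # 8000: current state
print(balance(store.replay(up_to=2)))  # 7500: state after the second event
```

The state is never stored directly; it is derived from the log. The same log therefore doubles as the audit trail, and any past state can be recomputed for debugging.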


This pattern can be implemented by using either Amazon Kinesis Data Streams or Amazon EventBridge.

Amazon Kinesis Data Streams implementation

In the following illustration, Kinesis Data Streams is the main component of a centralized event store. The event store captures application changes as events and persists them on Amazon Simple Storage Service (Amazon S3).

The workflow consists of the following steps:

1. When the "/withdraw" or "/credit" microservices experience a state change, they publish an event by writing a message into Kinesis Data Streams.

2. Other microservices, such as "/balance" or "/creditLimit," read a copy of the message, filter it for relevance, and forward it for further processing.
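The following sketch shows step 2 for a hypothetical "/balance" consumer implemented as a Lambda function with a Kinesis trigger. Lambda delivers Kinesis records with the payload base64-encoded in `record["kinesis"]["data"]`. The JSON payload shape (`type`, `accountId`, `amount`) and the set of relevant event types are assumptions. On the producer side, "/withdraw" and "/credit" would write these payloads with the Kinesis `PutRecord` API. Using the account ID as the partition key would keep each account's events ordered within a shard.

```python
import base64
import json
from typing import Dict

# Event types the hypothetical "/balance" service cares about; all others are ignored.
RELEVANT = {"Credited", "Withdrawn"}


def handler(event: dict, context: object = None) -> Dict[str, int]:
    """Decode a batch of Kinesis records, filter for relevance, and compute balance deltas."""
    deltas: Dict[str, int] = {}
    for record in event["Records"]:
        payload = json.loads(base64.b64decode(record["kinesis"]["data"]))
        if payload.get("type") not in RELEVANT:
            continue  # filter step: not an event this service handles
        sign = 1 if payload["type"] == "Credited" else -1
        account = payload["accountId"]
        deltas[account] = deltas.get(account, 0) + sign * payload["amount"]
    # A real consumer would forward these deltas to its own data store here.
    return deltas


def to_record(payload: dict) -> dict:
    """Build a test record in the shape Lambda uses for Kinesis events."""
    return {"kinesis": {"data": base64.b64encode(json.dumps(payload).encode()).decode()}}


sample = {"Records": [
    to_record({"type": "Credited", "accountId": "a-1", "amount": 500}),
    to_record({"type": "Withdrawn", "accountId": "a-1", "amount": 200}),
    to_record({"type": "AddressChanged", "accountId": "a-1"}),
]}
print(handler(sample))  # {'a-1': 300}
```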


Amazon EventBridge implementation

The architecture in the following illustration uses EventBridge. EventBridge is a serverless service that uses events to connect application components, which makes it easier for you to build scalable, event-driven applications. Event-driven architecture is a style of building loosely coupled software systems that work together by emitting and responding to events. EventBridge provides a default event bus for events that are published by AWS services, and you can also create custom event buses for domain-specific events.

The workflow consists of the following steps:

1. The "Orders" microservice publishes "OrderPlaced" events to the custom event bus.

2. Microservices that need to take action after an order is placed, such as the "/route" microservice, are invoked through rules and targets.

3. These microservices generate a route to ship the order to the customer and emit a "RouteCreated" event.

4. Microservices that need to take further action are also invoked by the "RouteCreated" event.

5. Events are sent to an event archive (for example, an EventBridge archive) so that they can be replayed for reprocessing, if required.

6. Historical order events are sent to a new Amazon SQS queue (replay queue) for reprocessing, if required.

7. If events can't be delivered to their targets, the affected events are placed in a dead-letter queue (DLQ) for further analysis and reprocessing.
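The following sketch covers steps 2 and 3 for the hypothetical "/route" microservice. It defines a rule event pattern that selects "OrderPlaced" events, and a handler that builds the "RouteCreated" entry to publish. The `matches` function implements only a simplified subset of EventBridge matching (exact values listed in arrays, plus nested objects) so that the example can run locally; EventBridge itself evaluates rule patterns. The bus name, `source` values, and `detail` fields are assumptions. To actually send the entry, you could pass it to the EventBridge `PutEvents` API (for example, boto3's `put_events(Entries=[entry])`).

```python
import json

BUS_NAME = "orders-bus"  # hypothetical custom event bus

# Rule pattern that routes "OrderPlaced" events from the "Orders" service to "/route".
ORDER_PLACED_PATTERN = {"source": ["com.example.orders"], "detail-type": ["OrderPlaced"]}


def matches(pattern: dict, event: dict) -> bool:
    """Simplified subset of EventBridge matching: lists hold allowed values, dicts nest."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        if isinstance(expected, dict):
            if not isinstance(event[key], dict) or not matches(expected, event[key]):
                return False
        elif event[key] not in expected:
            return False
    return True


def route_handler(event: dict, context: object = None) -> dict:
    """Build the "RouteCreated" PutEvents entry for an incoming "OrderPlaced" event."""
    order = event["detail"]
    route = {"orderId": order["orderId"], "destination": order["shippingAddress"]}
    return {
        "Source": "com.example.routing",
        "DetailType": "RouteCreated",
        "Detail": json.dumps(route),  # PutEvents expects Detail as a JSON string
        "EventBusName": BUS_NAME,
    }


incoming = {
    "source": "com.example.orders",
    "detail-type": "OrderPlaced",
    "detail": {"orderId": "o-1", "shippingAddress": "123 Any Street"},
}
if matches(ORDER_PLACED_PATTERN, incoming):
    entry = route_handler(incoming)
    print(entry["DetailType"])  # RouteCreated
```

Downstream services from step 4 would subscribe with their own rule whose pattern lists `"RouteCreated"` under `detail-type`.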

You should consider using the event sourcing pattern if:

• Events are used to completely rebuild the application's state.

• You need to replay events in the system and determine an application's state at any point in time.

• You want to be able to reverse specific events without having to start with a blank application state.

• Your system requires a stream of events that can easily be serialized to create an automated log.

• Your system requires heavy read operations but is light on write operations; heavy read operations can be directed to an in-memory database, which is kept updated with the event stream.

Important

If you use the event sourcing pattern, you must deploy the saga pattern to maintain data consistency across microservices.

Saga pattern

The saga pattern is a failure management pattern that helps establish consistency in distributed applications. It coordinates transactions between multiple microservices to maintain data consistency. A microservice publishes an event for every local transaction, and the next transaction is initiated based on the event's outcome. The saga can take two different paths, depending on the success or failure of the transactions.

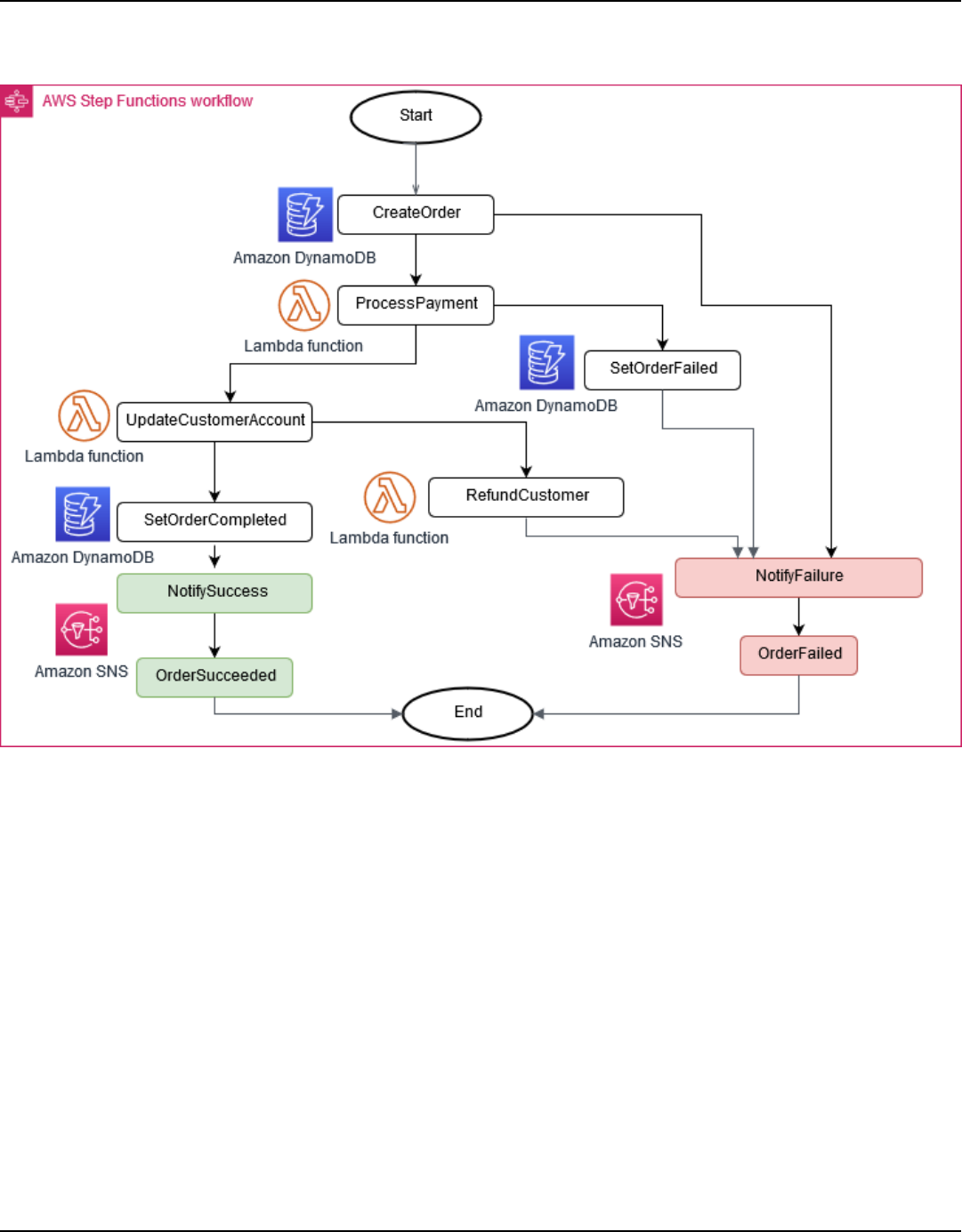

The following illustration shows how the saga pattern implements an order processing system by using AWS Step Functions. Each step (for example, "ProcessPayment") also has separate steps to handle the success (for example, "UpdateCustomerAccount") or failure (for example, "SetOrderFailure") of the process.

You should consider using this pattern if:

• The application needs to maintain data consistency across multiple microservices without tight coupling.

• There are long-lived transactions and you don't want other microservices to be blocked if one microservice runs for a long time.

• You need to be able to roll back if an operation in the sequence fails.

Important

The saga pattern is difficult to debug, and its complexity increases with the number of microservices. The pattern requires a complex programming model in which you design and develop compensating transactions to roll back and undo changes.
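To make compensating transactions concrete, here is a minimal orchestration-style saga in plain Python. It is not Step Functions; it only mirrors the control flow that the state machine expresses. Each step pairs a local transaction with a compensation. If a step fails, the completed steps are undone in reverse order, and the order is marked failed in the role of the "SetOrderFailure" step. The `ReserveInventory` step, the order fields, and all step functions are hypothetical, and failures inside compensations aren't handled here. A real workflow would add retries for them.

```python
from typing import Callable, List, Tuple

Action = Callable[[dict], None]


def run_saga(order: dict, steps: List[Tuple[str, Action, Action]]) -> str:
    """Run each local transaction in order; on failure, compensate completed steps in reverse."""
    order["log"] = []
    completed: List[Tuple[str, Action]] = []
    for name, action, compensate in steps:
        try:
            action(order)
        except Exception as exc:
            order["log"].append(f"{name} failed: {exc}")
            for done_name, undo in reversed(completed):
                undo(order)  # compensating transaction
                order["log"].append(f"compensated {done_name}")
            order["status"] = "FAILED"  # plays the role of the "SetOrderFailure" step
            return "FAILED"
        completed.append((name, compensate))
        order["log"].append(f"{name} succeeded")
    order["status"] = "SUCCEEDED"
    return "SUCCEEDED"


# Hypothetical local transactions and their compensations.
def reserve_inventory(o: dict) -> None:
    o["reserved"] = True


def release_inventory(o: dict) -> None:
    o["reserved"] = False


def process_payment(o: dict) -> None:
    if o["amount"] > o["credit_limit"]:
        raise RuntimeError("payment declined")
    o["charged"] = o["amount"]


def refund_payment(o: dict) -> None:
    o["charged"] = 0


def update_customer_account(o: dict) -> None:
    o["account_updated"] = True


def revert_customer_account(o: dict) -> None:
    o["account_updated"] = False


STEPS = [
    ("ReserveInventory", reserve_inventory, release_inventory),
    ("ProcessPayment", process_payment, refund_payment),
    ("UpdateCustomerAccount", update_customer_account, revert_customer_account),
]

ok = {"amount": 50, "credit_limit": 100}
print(run_saga(ok, STEPS))  # SUCCEEDED
bad = {"amount": 500, "credit_limit": 100}
print(run_saga(bad, STEPS), bad["reserved"])  # FAILED False
```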

For more information about implementing the saga pattern in a microservices architecture, see the pattern Implement the serverless saga pattern by using AWS Step Functions on the AWS Prescriptive Guidance website.

Shared-database-per-service pattern

In the shared-database-per-service pattern, the same database is shared by several microservices. You need to carefully assess the application architecture before adopting this pattern, and make sure that you avoid hot tables (single tables that are shared among multiple microservices). All your database changes must also be backward-compatible. For example, developers can drop columns or tables only if those objects are not referenced by the current and previous versions of all microservices.
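The following sketch uses `sqlite3` as a stand-in for the shared database to illustrate a backward-compatible change. It adds a new nullable column instead of renaming or dropping an existing one, so the previous version of a microservice, which doesn't know about the column, keeps working. The `policy` table and `premium_cents` column are hypothetical names for the insurance database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE policy (policy_id TEXT PRIMARY KEY, holder TEXT NOT NULL)")

# Expand step: add a nullable column. Nothing that existing code relies on changes.
conn.execute("ALTER TABLE policy ADD COLUMN premium_cents INTEGER")

# The previous service version's INSERT (unaware of the new column) still succeeds.
conn.execute("INSERT INTO policy (policy_id, holder) VALUES (?, ?)", ("p-1", "Ana"))

# The current version writes the new column and tolerates NULLs from older writers.
conn.execute(
    "INSERT INTO policy (policy_id, holder, premium_cents) VALUES (?, ?, ?)",
    ("p-2", "Bo", 12000),
)
rows = conn.execute(
    "SELECT policy_id, COALESCE(premium_cents, 0) FROM policy ORDER BY policy_id"
).fetchall()
print(rows)  # [('p-1', 0), ('p-2', 12000)]
```

Removing an old column (the "contract" step) would wait until no current or previous version of any microservice references it, as the preceding paragraph requires.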

In the following illustration, an insurance database is shared by all the microservices and an IAM policy provides access to the database. This creates development-time coupling; for example, a schema change for the "Sales" microservice must be coordinated with the "Customer" microservice. This pattern does not reduce dependencies between development teams, and it introduces runtime coupling because all microservices share the same database. For example, long-running "Sales" transactions can lock the "Customer" table and block the "Customer" transactions.

You should consider using this pattern if:

• You want to minimize refactoring of your existing code base.

• You enforce data consistency by using transactions that provide atomicity, consistency, isolation, and durability (ACID).

• You want to maintain and operate only one database.

• Implementing the database-per-service pattern is difficult because of interdependencies among your existing microservices.

• You don't want to completely redesign your existing data layer.

FAQ

This section provides answers to commonly raised questions about enabling data persistence in microservices.

When can I modernize my monolithic database as part of my modernization journey?

You should focus on modernizing your monolithic database when you begin to decompose monolithic applications into microservices. Make sure that you create a strategy to split your database into multiple small databases that are aligned with your applications.

Can I keep a legacy monolithic database for multiple microservices?

Keeping a shared monolithic database for multiple microservices creates tight coupling, which means that you can't independently deploy changes to your microservices and that all schema changes must be coordinated among your microservices. Although you can use a relational data store as your monolithic database, NoSQL databases might be a better choice for some of your microservices.

What should I consider when designing databases for a microservices architecture?

You should design your application based on domains that align with your application's functionality. Make sure that you evaluate the application's functionality and decide if it requires a relational database schema. You should also consider using a NoSQL database, if it fits your requirements.

What is a common pattern for maintaining data consistency across different microservices?

The most common pattern is using an event-driven architecture.


How do I maintain transaction atomicity?

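In a microservices architecture, a transaction consists of multiple local transactions handled by different microservices. If a local transaction fails, you need to roll back the local transactions that were previously completed. You can use the saga pattern to manage this: each local transaction triggers the next step, and if a step fails, the saga runs compensating transactions that undo the changes made by the preceding steps.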
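A minimal orchestration-style saga can be sketched in Python as follows. This is an in-process illustration, not the AWS Step Functions implementation listed under Resources. The `run_saga` function, the step names, and the simulated payment failure are hypothetical. Each step pairs a local transaction with a compensating action, and when a step fails, the completed steps are compensated in reverse order.

```python
from typing import Callable

# A step is (name, local transaction, compensating action that undoes it).
Step = tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: list[Step]) -> bool:
    """Run steps in order; if one fails, undo completed steps in reverse order."""
    completed: list[Step] = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception as exc:
            print(f"step {name!r} failed: {exc}; compensating")
            for _name, _action, undo in reversed(completed):
                undo()
            return False
        completed.append((name, action, compensate))
    return True


# Hypothetical steps that record what happened instead of calling real services.
log: list[str] = []


def charge_payment() -> None:
    raise RuntimeError("card declined")  # simulated failure in the third service


ok = run_saga([
    ("reserve_inventory", lambda: log.append("reserved"), lambda: log.append("released")),
    ("create_order", lambda: log.append("order created"), lambda: log.append("order cancelled")),
    ("charge_payment", charge_payment, lambda: log.append("refunded")),
])
print(ok, log)
# False ['reserved', 'order created', 'order cancelled', 'released']
```

The failed step is not compensated because its local transaction never committed; only the two completed steps are undone, newest first.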

Do I have to use a separate database for each microservice?

The main advantage of a microservices architecture is loose coupling. Each microservice’s

persistent data must be kept private and accessible only through a microservice's API. Changes to

the data schema must be carefully evaluated if your microservices share the same database.

How can I keep a microservice’s persistent data private if all microservices share a single database?

If your microservices share a relational database, make sure that you have private tables for

each microservice. You can also create individual schemas that are private to the individual

microservices.


Resources

Related guides and patterns

• Strategy for modernizing applications in the AWS Cloud

• Phased approach to modernizing applications in the AWS Cloud

• Evaluating modernization readiness for applications in the AWS Cloud

• Decomposing monoliths into microservices

• Integrating microservices by using AWS serverless services

• Implement the serverless saga pattern by using AWS Step Functions

Other resources

• Application modernization with AWS

• Build highly available microservices to power applications of any size and scale

• Cloud-native application modernization with AWS

• Cost optimization and innovation: An introduction to application modernization

• Developer guide: Scale with microservices

• Distributed data management – Saga Pattern

• Implementing microservice architectures using AWS services: Command query responsibility

segregation pattern

• Implementing microservice architectures using AWS services: Event sourcing pattern

• Modern applications: Creating value through application design

• Modernize your applications, drive growth and reduce TCO


Document history

The following table describes significant changes to this guide. If you want to be notified about

future updates, you can subscribe to an RSS feed.

Change | Description | Date
--- | --- | ---
Updated pattern | We updated the Amazon EventBridge implementation section of the event sourcing pattern. | December 4, 2023
Expanded section | We updated the CQRS pattern with more information. | November 17, 2023
Added a link for implementing the saga pattern with Step Functions | We updated the Home and Saga pattern sections with the link to the pattern Implement the serverless saga pattern by using AWS Step Functions from the AWS Prescriptive Guidance website. | February 23, 2021
Initial publication | - | January 27, 2021


AWS Prescriptive Guidance glossary

The following are commonly used terms in strategies, guides, and patterns provided by AWS

Prescriptive Guidance. To suggest entries, please use the Provide feedback link at the end of the

glossary.

Numbers

7 Rs

Seven common migration strategies for moving applications to the cloud. These strategies build

upon the 5 Rs that Gartner identified in 2011 and consist of the following:

• Refactor/re-architect – Move an application and modify its architecture by taking full advantage of cloud-native features to improve agility, performance, and scalability. This typically involves porting the operating system and database. Example: Migrate your on-premises Oracle database to the Amazon Aurora PostgreSQL-Compatible Edition.

• Replatform (lift and reshape) – Move an application to the cloud, and introduce some level of optimization to take advantage of cloud capabilities. Example: Migrate your on-premises Oracle database to Amazon Relational Database Service (Amazon RDS) for Oracle in the AWS Cloud.

• Repurchase (drop and shop) – Switch to a different product, typically by moving from a traditional license to a SaaS model. Example: Migrate your customer relationship management (CRM) system to Salesforce.com.

• Rehost (lift and shift) – Move an application to the cloud without making any changes to take advantage of cloud capabilities. Example: Migrate your on-premises Oracle database to Oracle on an EC2 instance in the AWS Cloud.

• Relocate (hypervisor-level lift and shift) – Move infrastructure to the cloud without purchasing new hardware, rewriting applications, or modifying your existing operations. You migrate servers from an on-premises platform to a cloud service for the same platform. Example: Migrate a Microsoft Hyper-V application to AWS.

• Retain (revisit) – Keep applications in your source environment. These might include applications that require major refactoring that you want to postpone until a later time, and legacy applications that you want to retain because there’s no business justification for migrating them.


• Retire – Decommission or remove applications that are no longer needed in your source

environment.

A

ABAC

See attribute-based access control.

abstracted services

See managed services.

ACID

See atomicity, consistency, isolation, durability.

active-active migration

A database migration method in which the source and target databases are kept in sync (by

using a bidirectional replication tool or dual write operations), and both databases handle

transactions from connecting applications during migration. This method supports migration in

small, controlled batches instead of requiring a one-time cutover. It’s more flexible but requires

more work than active-passive migration.

active-passive migration

A database migration method in which the source and target databases are kept in

sync, but only the source database handles transactions from connecting applications while

data is replicated to the target database. The target database doesn’t accept any transactions

during migration.

aggregate function

A SQL function that operates on a group of rows and calculates a single return value for the

group. Examples of aggregate functions include SUM and MAX.
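For illustration, the following Python snippet uses the standard-library `sqlite3` module with a hypothetical `orders` table. It shows `SUM` and `MAX` each returning one value for each group of rows.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (customer TEXT, amount INTEGER)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("alice", 30), ("alice", 50), ("bob", 20)],
)

# GROUP BY forms one group per customer; SUM and MAX collapse each group to one value.
rows = conn.execute(
    "SELECT customer, SUM(amount), MAX(amount) FROM orders "
    "GROUP BY customer ORDER BY customer"
).fetchall()
print(rows)  # [('alice', 80, 50), ('bob', 20, 20)]
```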

AI

See artificial intelligence.

AIOps

See artificial intelligence operations.


anonymization

The process of permanently deleting personal information in a dataset. Anonymization can help

protect personal privacy. Anonymized data is no longer considered to be personal data.

anti-pattern

A frequently used solution for a recurring issue where the solution is counter-productive,

ineffective, or less effective than an alternative.

application control

A security approach that allows the use of only approved applications in order to help protect a

system from malware.

application portfolio

A collection of detailed information about each application used by an organization, including

the cost to build and maintain the application, and its business value. This information is key to

the portfolio discovery and analysis process and helps identify and prioritize the applications to

be migrated, modernized, and optimized.

artificial intelligence (AI)

The field of computer science that is dedicated to using computing technologies to perform

cognitive functions that are typically associated with humans, such as learning, solving

problems, and recognizing patterns. For more information, see What is Artificial Intelligence?

artificial intelligence operations (AIOps)

The process of using machine learning techniques to solve operational problems, reduce

operational incidents and human intervention, and increase service quality. For more

information about how AIOps is used in the AWS migration strategy, see the operations

integration guide.

asymmetric encryption

An encryption algorithm that uses a pair of keys, a public key for encryption and a private key

for decryption. You can share the public key because it isn’t used for decryption, but access to

the private key should be highly restricted.
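The textbook RSA arithmetic below illustrates the split between a public key and a private key. It is a toy: the primes are tiny, there is no padding, and it must not be used to protect real data. Production systems should use a vetted cryptographic library or a managed service such as AWS KMS.

```python
# Toy RSA with tiny primes, for illustration only; never use this for real encryption.
p, q = 61, 53
n = p * q                # 3233, the modulus shared by both keys
phi = (p - 1) * (q - 1)  # 3120
e = 17                   # public exponent, coprime with phi
d = pow(e, -1, phi)      # private exponent (modular inverse, Python 3.8+): 2753

public_key = (e, n)   # can be shared freely
private_key = (d, n)  # must be kept secret


def apply_key(value: int, key: tuple[int, int]) -> int:
    """Modular exponentiation with one half of the key pair."""
    exponent, modulus = key
    return pow(value, exponent, modulus)


message = 65
ciphertext = apply_key(message, public_key)    # anyone can encrypt with the public key
recovered = apply_key(ciphertext, private_key)  # only the private-key holder can decrypt
print(ciphertext, recovered)  # 2790 65
```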

atomicity, consistency, isolation, durability (ACID)

A set of software properties that guarantee the data validity and operational reliability of a

database, even in the case of errors, power failures, or other problems.
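The following Python sketch shows atomicity, the "A" in ACID, by using the standard-library `sqlite3` module. The `accounts` table and `transfer` function are hypothetical. A transfer updates two rows; if the second update violates a `CHECK` constraint, the first update is rolled back as well, so the database never shows a half-finished transfer.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id TEXT PRIMARY KEY, balance INTEGER CHECK (balance >= 0))")
conn.executemany("INSERT INTO accounts VALUES (?, ?)", [("a", 100), ("b", 0)])
conn.commit()


def transfer(src: str, dst: str, amount: int) -> bool:
    try:
        with conn:  # commits if the block succeeds, rolls back if it raises
            conn.execute("UPDATE accounts SET balance = balance + ? WHERE id = ?", (amount, dst))
            conn.execute("UPDATE accounts SET balance = balance - ? WHERE id = ?", (amount, src))
        return True
    except sqlite3.IntegrityError:
        return False  # CHECK failed; both updates were rolled back


first = transfer("a", "b", 30)    # succeeds
second = transfer("a", "b", 500)  # would make "a" negative, so the credit to "b" is undone too
balances = conn.execute("SELECT id, balance FROM accounts ORDER BY id").fetchall()
print(first, second, balances)  # True False [('a', 70), ('b', 30)]
```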


attribute-based access control (ABAC)

The practice of creating fine-grained permissions based on user attributes, such as department,

job role, and team name. For more information, see ABAC for AWS in the AWS Identity and

Access Management (IAM) documentation.
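The following Python sketch illustrates the ABAC idea of deciding access by comparing attributes of the user with attributes of the resource. It is a conceptual model only, not IAM policy syntax; in IAM, such attributes are typically expressed as tags and compared in policy conditions. The attribute names and the rule itself are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Principal:
    department: str
    job_role: str
    team: str


@dataclass(frozen=True)
class Resource:
    owning_team: str
    classification: str


def is_allowed(principal: Principal, action: str, resource: Resource) -> bool:
    """Hypothetical policy: decide access only from user and resource attributes."""
    if action != "read":
        return False  # this sketch grants read access only
    if resource.classification == "restricted":
        return principal.department == "security"
    # Engineers can read non-restricted resources owned by their own team.
    return principal.job_role == "engineer" and principal.team == resource.owning_team


alice = Principal(department="engineering", job_role="engineer", team="payments")
bob = Principal(department="engineering", job_role="engineer", team="search")
ledger = Resource(owning_team="payments", classification="internal")

print(is_allowed(alice, "read", ledger))  # True
print(is_allowed(bob, "read", ledger))    # False
```

Because the rule refers to attributes rather than to named users, a new engineer who joins the payments team gets access without any change to the policy.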

authoritative data source

A location where you store the primary version of data, which is considered to be the most

reliable source of information. You can copy data from the authoritative data source to other

locations for the purposes of processing or modifying the data, such as anonymizing, redacting,

or pseudonymizing it.

Availability Zone

A distinct location within an AWS Region that is insulated from failures in other Availability

Zones and provides inexpensive, low-latency network connectivity to other Availability Zones in

the same Region.

AWS Cloud Adoption Framework (AWS CAF)

A framework of guidelines and best practices from AWS to help organizations develop an

efficient and effective plan to move successfully to the cloud. AWS CAF organizes guidance

into six focus areas called perspectives: business, people, governance, platform, security,

and operations. The business, people, and governance perspectives focus on business skills

and processes; the platform, security, and operations perspectives focus on technical skills

and processes. For example, the people perspective targets stakeholders who handle human

resources (HR), staffing functions, and people management. For this perspective, AWS CAF

provides guidance for people development, training, and communications to help ready the

organization for successful cloud adoption. For more information, see the AWS CAF website and

the AWS CAF whitepaper.

AWS Workload Qualification Framework (AWS WQF)

A tool that evaluates database migration workloads, recommends migration strategies, and

provides work estimates. AWS WQF is included with AWS Schema Conversion Tool (AWS SCT). It

analyzes database schemas and code objects, application code, dependencies, and performance

characteristics, and provides assessment reports.


B

bad bot

A bot that is intended to disrupt or cause harm to individuals or organizations.

BCP

See business continuity planning.

behavior graph

A unified, interactive view of resource behavior and interactions over time. You can use a

behavior graph with Amazon Detective to examine failed logon attempts, suspicious API

calls, and similar actions. For more information, see Data in a behavior graph in the Detective

documentation.

big-endian system

A system that stores the most significant byte first. See also endianness.

binary classification

A process that predicts a binary outcome (one of two possible classes). For example, your ML model might need to predict problems such as “Is this email spam or not spam?” or “Is this product a book or a car?”

bloom filter

A probabilistic, memory-efficient data structure that is used to test whether an element is a

member of a set.
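A minimal Bloom filter sketch in Python follows. The bit-array size, the number of hash functions, and the use of salted SHA-256 to derive bit positions are illustrative choices, not tuned values. A lookup that returns `False` means the element was definitely never added; `True` means it was probably added, because unrelated elements can set the same bits (a false positive).

```python
import hashlib


class BloomFilter:
    def __init__(self, num_bits: int = 1024, num_hashes: int = 3) -> None:
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)  # packed bit array, 8 bits per byte

    def _positions(self, item: str) -> list[int]:
        # Derive num_hashes bit positions by salting SHA-256 with the hash index.
        positions = []
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{item}".encode()).digest()
            positions.append(int.from_bytes(digest[:8], "big") % self.num_bits)
        return positions

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item: str) -> bool:
        # All bits set: probably present. Any bit clear: definitely absent.
        return all(self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(item))


bf = BloomFilter()
for word in ("alpha", "beta"):
    bf.add(word)
print(bf.might_contain("alpha"))  # True (added items are never missed)
print(bf.might_contain("gamma"))  # very likely False, but a false positive is possible
```

The filter stores only bits, never the elements themselves, which is why it uses far less memory than a set of the same items.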

blue/green deployment

A deployment strategy where you create two separate but identical environments. You run the

current application version in one environment (blue) and the new application version in the

other environment (green). This strategy helps you quickly roll back with minimal impact.

bot

A software application that runs automated tasks over the internet and simulates human

activity or interaction. Some bots are useful or beneficial, such as web crawlers that index

information on the internet. Some other bots, known as bad bots, are intended to disrupt or

cause harm to individuals or organizations.


botnet

A network of bots that are infected by malware and are under the control of a single party,

known as a bot herder or bot operator. Botnets are the best-known mechanism to scale bots and

their impact.

branch

A contained area of a code repository. The first branch created in a repository is the main

branch. You can create a new branch from an existing branch, and you can then develop

features or fix bugs in the new branch. A branch you create to build a feature is commonly

referred to as a feature branch. When the feature is ready for release, you merge the feature

branch back into the main branch. For more information, see About branches (GitHub

documentation).

break-glass access

In exceptional circumstances and through an approved process, a quick means for a user to

gain access to an AWS account that they don't typically have permissions to access. For more

information, see the Implement break-glass procedures indicator in the AWS Well-Architected

guidance.

brownfield strategy

The existing infrastructure in your environment. When adopting a brownfield strategy for a

system architecture, you design the architecture around the constraints of the current systems

and infrastructure. If you are expanding the existing infrastructure, you might blend brownfield

and greenfield strategies.

buffer cache

The memory area where the most frequently accessed data is stored.

business capability

What a business does to generate value (for example, sales, customer service, or marketing).

Microservices architectures and development decisions can be driven by business capabilities.

For more information, see the Organized around business capabilities section of the Running

containerized microservices on AWS whitepaper.

business continuity planning (BCP)

A plan that addresses the potential impact of a disruptive event, such as a large-scale migration,

on operations and enables a business to resume operations quickly.


C

CAF

See AWS Cloud Adoption Framework.

canary deployment

The slow and incremental release of a version to end users. When you are confident, you deploy

the new version and replace the current version in its entirety.

CCoE

See Cloud Center of Excellence.

CDC

See change data capture.

change data capture (CDC)

The process of tracking changes to a data source, such as a database table, and recording

metadata about the change. You can use CDC for various purposes, such as auditing or

replicating changes in a target system to maintain synchronization.
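One simple way to capture changes is with database triggers that write a row of change metadata for every insert, update, and delete. The following Python sketch does this with the standard-library `sqlite3` module; the table and trigger names are hypothetical. Many production CDC tools read the database transaction log instead, which avoids adding work to every write.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT);
-- Change table: one row of metadata per change to customers, in commit order.
CREATE TABLE customers_changes (
    seq INTEGER PRIMARY KEY AUTOINCREMENT,
    op TEXT, id INTEGER, old_email TEXT, new_email TEXT
);
CREATE TRIGGER customers_ins AFTER INSERT ON customers BEGIN
    INSERT INTO customers_changes (op, id, new_email) VALUES ('INSERT', NEW.id, NEW.email);
END;
CREATE TRIGGER customers_upd AFTER UPDATE ON customers BEGIN
    INSERT INTO customers_changes (op, id, old_email, new_email)
    VALUES ('UPDATE', NEW.id, OLD.email, NEW.email);
END;
CREATE TRIGGER customers_del AFTER DELETE ON customers BEGIN
    INSERT INTO customers_changes (op, id, old_email) VALUES ('DELETE', OLD.id, OLD.email);
END;
""")

conn.execute("INSERT INTO customers VALUES (1, 'a@example.com')")
conn.execute("UPDATE customers SET email = 'b@example.com' WHERE id = 1")
conn.execute("DELETE FROM customers WHERE id = 1")

# A downstream consumer would read these rows in order and replay them on a target system.
changes = conn.execute(
    "SELECT seq, op, id, old_email, new_email FROM customers_changes ORDER BY seq"
).fetchall()
for change in changes:
    print(change)
```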

chaos engineering

Intentionally introducing failures or disruptive events to test a system’s resilience. You can use

AWS Fault Injection Service (AWS FIS) to perform experiments that stress your AWS workloads

and evaluate their response.

CI/CD

See continuous integration and continuous delivery.

classification

A categorization process that helps generate predictions. ML models for classification problems

predict a discrete value. Discrete values are always distinct from one another. For example, a

model might need to evaluate whether or not there is a car in an image.

client-side encryption

Encryption of data locally, before the target AWS service receives it.


Cloud Center of Excellence (CCoE)

A multi-disciplinary team that drives cloud adoption efforts across an organization, including

developing cloud best practices, mobilizing resources, establishing migration timelines, and

leading the organization through large-scale transformations. For more information, see the

CCoE posts on the AWS Cloud Enterprise Strategy Blog.

cloud computing

The cloud technology that is typically used for remote data storage and IoT device

management. Cloud computing is commonly connected to edge computing technology.

cloud operating model

In an IT organization, the operating model that is used to build, mature, and optimize one or

more cloud environments. For more information, see Building your Cloud Operating Model.

cloud stages of adoption

The four phases that organizations typically go through when they migrate to the AWS Cloud:

• Project – Running a few cloud-related projects for proof of concept and learning purposes

• Foundation – Making foundational investments to scale your cloud adoption (e.g., creating a

landing zone, defining a CCoE, establishing an operations model)

• Migration – Migrating individual applications

• Re-invention – Optimizing products and services, and innovating in the cloud

These stages were defined by Stephen Orban in the blog post The Journey Toward Cloud-First

& the Stages of Adoption on the AWS Cloud Enterprise Strategy blog. For information about

how they relate to the AWS migration strategy, see the migration readiness guide.

CMDB

See configuration management database.

code repository

A location where source code and other assets, such as documentation, samples, and scripts,

are stored and updated through version control processes. Common cloud repositories include

GitHub and AWS CodeCommit. Separate lines of development within a repository are called branches. In a microservice

structure, each repository is devoted to a single piece of functionality. A single CI/CD pipeline

can use multiple repositories.


cold cache

A buffer cache that is empty, not well populated, or contains stale or irrelevant data. This

affects performance because the database instance must read from the main memory or disk,

which is slower than reading from the buffer cache.

cold data

Data that is rarely accessed and is typically historical. When querying this kind of data, slow

queries are typically acceptable. Moving this data to lower-performing and less expensive

storage tiers or classes can reduce costs.

computer vision (CV)

A field of AI that uses machine learning to analyze and extract information from visual formats

such as digital images and videos. For example, AWS Panorama offers devices that add CV to

on-premises camera networks, and Amazon SageMaker provides image processing algorithms

for CV.

configuration drift

For a workload, a configuration change from the expected state. It might cause the workload to

become noncompliant, and it's typically gradual and unintentional.

configuration management database (CMDB)

A repository that stores and manages information about a database and its IT environment,

including both hardware and software components and their configurations. You typically use

data from a CMDB in the portfolio discovery and analysis stage of migration.

conformance pack

A collection of AWS Config rules and remediation actions that you can assemble to customize

your compliance and security checks. You can deploy a conformance pack as a single entity in

an AWS account and Region, or across an organization, by using a YAML template. For more

information, see Conformance packs in the AWS Config documentation.

continuous integration and continuous delivery (CI/CD)

The process of automating the source, build, test, staging, and production stages of the

software release process. CI/CD is commonly described as a pipeline. CI/CD can help you

automate processes, improve productivity, improve code quality, and deliver faster. For more

information, see Benefits of continuous delivery. CD can also stand for continuous deployment.

For more information, see Continuous Delivery vs. Continuous Deployment.


CV

See computer vision.

D

data at rest

Data that is stationary in your network, such as data that is in storage.

data classification

A process for identifying and categorizing the data in your network based on its criticality and

sensitivity. It is a critical component of any cybersecurity risk management strategy because

it helps you determine the appropriate protection and retention controls for the data. Data

classification is a component of the security pillar in the AWS Well-Architected Framework. For

more information, see Data classification.

data drift

A meaningful variation between the production data and the data that was used to train an ML

model, or a meaningful change in the input data over time. Data drift can reduce the overall

quality, accuracy, and fairness in ML model predictions.

data in transit

Data that is actively moving through your network, such as between network resources.

data mesh

An architectural framework that provides distributed, decentralized data ownership with

centralized management and governance.

data minimization

The principle of collecting and processing only the data that is strictly necessary. Practicing

data minimization in the AWS Cloud can reduce privacy risks, costs, and your analytics carbon

footprint.

data perimeter

A set of preventive guardrails in your AWS environment that help make sure that only trusted

identities are accessing trusted resources from expected networks. For more information, see

Building a data perimeter on AWS.


data preprocessing

To transform raw data into a format that is easily parsed by your ML model. Preprocessing data

can mean removing certain columns or rows and addressing missing, inconsistent, or duplicate

values.

data provenance

The process of tracking the origin and history of data throughout its lifecycle, such as how the

data was generated, transmitted, and stored.

data subject

An individual whose data is being collected and processed.

data warehouse

A data management system that supports business intelligence, such as analytics. Data

warehouses commonly contain large amounts of historical data, and they are typically used for

queries and analysis.

database definition language (DDL)

Statements or commands for creating or modifying the structure of tables and objects in a

database.

database manipulation language (DML)

Statements or commands for modifying (inserting, updating, and deleting) information in a

database.
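The following Python snippet, which uses the standard-library `sqlite3` module and a hypothetical `products` table, contrasts the two kinds of statements: DDL statements define the table's structure, and DML statements change the rows stored in it.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# DDL: create or change the structure of database objects.
conn.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("ALTER TABLE products ADD COLUMN price REAL")

# DML: insert, update, and delete the rows stored in those objects.
conn.execute("INSERT INTO products (name, price) VALUES ('widget', 9.99)")
conn.execute("UPDATE products SET price = 7.99 WHERE name = 'widget'")
conn.execute("INSERT INTO products (name, price) VALUES ('gadget', 4.50)")
conn.execute("DELETE FROM products WHERE name = 'gadget'")

rows = conn.execute("SELECT id, name, price FROM products").fetchall()
print(rows)  # [(1, 'widget', 7.99)]
```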

DDL

See database definition language.

deep ensemble

To combine multiple deep learning models for prediction. You can use deep ensembles to

obtain a more accurate prediction or for estimating uncertainty in predictions.

deep learning

An ML subfield that uses multiple layers of artificial neural networks to identify mapping

between input data and target variables of interest.


defense-in-depth

An information security approach in which a series of security mechanisms and controls are

thoughtfully layered throughout a computer network to protect the confidentiality, integrity,

and availability of the network and the data within. When you adopt this strategy on AWS,

you add multiple controls at different layers of the AWS Organizations structure to help

secure resources. For example, a defense-in-depth approach might combine multi-factor

authentication, network segmentation, and encryption.

delegated administrator

In AWS Organizations, a compatible service can register an AWS member account to administer

the organization’s accounts and manage permissions for that service. This account is called the

delegated administrator for that service. For more information and a list of compatible services,

see Services that work with AWS Organizations in the AWS Organizations documentation.

deployment

The process of making an application, new features, or code fixes available in the target

environment. Deployment involves implementing changes in a code base and then building and

running that code base in the application’s environments.

development environment

See environment.

detective control

A security control that is designed to detect, log, and alert after an event has occurred.

These controls are a second line of defense, alerting you to security events that bypassed the

preventative controls in place. For more information, see Detective controls in Implementing

security controls on AWS.

development value stream mapping (DVSM)

A process used to identify and prioritize constraints that adversely affect speed and quality in

a software development lifecycle. DVSM extends the value stream mapping process originally

designed for lean manufacturing practices. It focuses on the steps and teams required to create

and move value through the software development process.

digital twin

A virtual representation of a real-world system, such as a building, factory, industrial

equipment, or production line. Digital twins support predictive maintenance, remote

monitoring, and production optimization.


dimension table

In a star schema, a smaller table that contains data attributes about quantitative data in a

fact table. Dimension table attributes are typically text fields or discrete numbers that behave

like text. These attributes are commonly used for query constraining, filtering, and result set

labeling.

disaster

An event that prevents a workload or system from fulfilling its business objectives in its primary

deployed location. These events can be natural disasters, technical failures, or the result of

human actions, such as unintentional misconfiguration or a malware attack.

disaster recovery (DR)

The strategy and process you use to minimize downtime and data loss caused by a disaster. For

more information, see Disaster Recovery of Workloads on AWS: Recovery in the Cloud in the

AWS Well-Architected Framework.

DML

See database manipulation language.

domain-driven design

An approach to developing a complex software system by connecting its components to

evolving domains, or core business goals, that each component serves. This concept was

introduced by Eric Evans in his book, Domain-Driven Design: Tackling Complexity in the Heart of

Software (Boston: Addison-Wesley Professional, 2003). For information about how you can use

domain-driven design with the strangler fig pattern, see Modernizing legacy Microsoft ASP.NET

(ASMX) web services incrementally by using containers and Amazon API Gateway.

DR

See disaster recovery.

drift detection

Tracking deviations from a baselined configuration. For example, you can use AWS

CloudFormation to detect drift in system resources, or you can use AWS Control Tower to detect

changes in your landing zone that might affect compliance with governance requirements.
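Conceptually, drift detection compares the current configuration against a recorded baseline. The following Python sketch shows that comparison for flat key-value settings. The setting names are hypothetical, and the sketch does not reflect the internals of AWS CloudFormation or AWS Control Tower drift detection.

```python
def detect_drift(baseline: dict, actual: dict) -> dict:
    """Return {setting: (expected, actual)} for every setting that differs.

    None stands for "setting not present" on that side.
    """
    drift = {}
    for key in sorted(baseline.keys() | actual.keys()):
        expected, current = baseline.get(key), actual.get(key)
        if expected != current:
            drift[key] = (expected, current)
    return drift


# Hypothetical settings for a storage resource.
baseline = {"encryption": "enabled", "public_access": False, "versioning": True}
actual = {"encryption": "enabled", "public_access": True, "versioning": True, "logging": False}

print(detect_drift(baseline, actual))
# {'logging': (None, False), 'public_access': (False, True)}
```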

DVSM

See development value stream mapping.


E

EDA

See exploratory data analysis.

edge computing

The technology that increases the computing power for smart devices at the edges of an IoT

network. When compared with cloud computing, edge computing can reduce communication

latency and improve response time.

encryption

A computing process that transforms plaintext data, which is human-readable, into ciphertext.

encryption key

A cryptographic string of randomized bits that is generated by an encryption algorithm. Keys

can vary in length, and each key is designed to be unpredictable and unique.

endianness

The order in which bytes are stored in computer memory. Big-endian systems store the most

significant byte first. Little-endian systems store the least significant byte first.
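The following Python snippet shows the same 32-bit integer laid out in both byte orders by using the built-in `int.to_bytes` method and the standard-library `struct` module.

```python
import struct
import sys

value = 0x12345678

big = value.to_bytes(4, "big")        # most significant byte first
little = value.to_bytes(4, "little")  # least significant byte first
print(big.hex(), little.hex())        # 12345678 78563412

# struct uses ">" for big-endian and "<" for little-endian layouts.
assert struct.pack(">I", value) == big
assert struct.pack("<I", value) == little

# Reading bytes with the wrong byte order yields a different number.
print(hex(int.from_bytes(big, "little")))  # 0x78563412
print(sys.byteorder)  # native byte order of the machine running this code
```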

endpoint

See service endpoint.

endpoint service

A service that you can host in a virtual private cloud (VPC) to share with other users. You can

create an endpoint service with AWS PrivateLink and grant permissions to other AWS accounts

or to AWS Identity and Access Management (IAM) principals. These accounts or principals

can connect to your endpoint service privately by creating interface VPC endpoints. For more

information, see Create an endpoint service in the Amazon Virtual Private Cloud (Amazon VPC)

documentation.

enterprise resource planning (ERP)

A system that automates and manages key business processes (such as accounting, manufacturing execution systems (MES), and

project management) for an enterprise.


envelope encryption

The process of encrypting an encryption key with another encryption key. For more

information, see Envelope encryption in the AWS Key Management Service (AWS KMS)

documentation.

environment

An instance of a running application. The following are common types of environments in cloud

computing:

• development environment – An instance of a running application that is available only to the

core team responsible for maintaining the application. Development environments are used

to test changes before promoting them to upper environments. This type of environment is

sometimes referred to as a test environment.

• lower environments – All development environments for an application, such as those used

for initial builds and tests.

• production environment – An instance of a running application that end users can access. In a

CI/CD pipeline, the production environment is the last deployment environment.

• upper environments – All environments that can be accessed by users other than the core

development team. This can include a production environment, preproduction environments,

and environments for user acceptance testing.

epic

In agile methodologies, functional categories that help organize and prioritize your work. Epics

provide a high-level description of requirements and implementation tasks. For example, AWS

CAF security epics include identity and access management, detective controls, infrastructure

security, data protection, and incident response. For more information about epics in the AWS

migration strategy, see the program implementation guide.

ERP

See enterprise resource planning.

exploratory data analysis (EDA)

The process of analyzing a dataset to understand its main characteristics. You collect or

aggregate data and then perform initial investigations to find patterns, detect anomalies,

and check assumptions. EDA is performed by calculating summary statistics and creating data

visualizations.


F

fact table

The central table in a star schema. It stores quantitative data about business operations.

Typically, a fact table contains two types of columns: those that contain measures and those

that contain a foreign key to a dimension table.
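The following Python sketch uses the standard-library `sqlite3` module to build a minimal star schema with one hypothetical fact table (`fact_sales`) and one dimension table (`dim_product`). The query joins the facts to the dimension and aggregates the measures by a dimension attribute, which is the typical analytical query against a star schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension table: descriptive attributes used for filtering and labeling.
CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, category TEXT);
-- Fact table: measures plus a foreign key to the dimension table.
CREATE TABLE fact_sales (
    product_id INTEGER REFERENCES dim_product(product_id),
    quantity INTEGER,
    revenue REAL
);
INSERT INTO dim_product VALUES (1, 'books'), (2, 'toys');
INSERT INTO fact_sales VALUES (1, 2, 30.0), (1, 1, 15.0), (2, 4, 60.0);
""")

# Join facts to the dimension, then aggregate the measures by a dimension attribute.
rows = conn.execute("""
    SELECT d.category, SUM(f.quantity), SUM(f.revenue)
    FROM fact_sales AS f JOIN dim_product AS d ON f.product_id = d.product_id
    GROUP BY d.category ORDER BY d.category
""").fetchall()
print(rows)  # [('books', 3, 45.0), ('toys', 4, 60.0)]
```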

fail fast

A philosophy that uses frequent and incremental testing to reduce the development lifecycle. It

is a critical part of an agile approach.

fault isolation boundary

In the AWS Cloud, a boundary such as an Availability Zone, AWS Region, control plane, or data

plane that limits the effect of a failure and helps improve the resilience of workloads. For more

information, see AWS Fault Isolation Boundaries.

feature branch

See branch.

features

The input data that you use to make a prediction. For example, in a manufacturing context,

features could be images that are periodically captured from the manufacturing line.

feature importance

How significant a feature is for a model’s predictions. This is usually expressed as a numerical score that can be calculated through various techniques, such as Shapley Additive Explanations (SHAP) and integrated gradients. For more information, see Machine learning model interpretability with AWS.

feature transformation

Optimizing data for the ML process, including enriching data with additional sources, scaling values, or extracting multiple sets of information from a single data field. This enables the ML model to benefit from the data. For example, if you break down the “2021-05-27 00:15:37” date into “2021”, “May”, “Thu”, and “15”, you can help the learning algorithm learn nuanced patterns associated with different data components.
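The timestamp breakdown in this entry can be reproduced with Python's `datetime` module. This is a minimal sketch. It assumes that “15” refers to the minute component, and it relies on the default C locale for the English month and weekday names. The function name `decompose_timestamp` is hypothetical.

```python
from datetime import datetime

def decompose_timestamp(raw):
    """Split a timestamp string into separate year, month, weekday, and minute features."""
    ts = datetime.strptime(raw, "%Y-%m-%d %H:%M:%S")
    return (
        ts.strftime("%Y"),  # year, for example "2021"
        ts.strftime("%B"),  # full month name (C locale), for example "May"
        ts.strftime("%a"),  # abbreviated weekday name (C locale), for example "Thu"
        ts.strftime("%M"),  # minute, for example "15" (assumed meaning of "15")
    )

print(decompose_timestamp("2021-05-27 00:15:37"))  # ('2021', 'May', 'Thu', '15')
```

Each returned value can then be treated as a separate feature column, so a model can pick up, for example, weekday effects that are hidden in the raw string.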

FGAC

See fine-grained access control.


fine-grained access control (FGAC)

The use of multiple conditions to allow or deny an access request.
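The following minimal sketch shows the idea of combining several conditions into one allow-or-deny decision. The request fields and the three conditions (same department, read-only action, business hours) are hypothetical examples. This is not the evaluation logic of any particular AWS service.

```python
from dataclasses import dataclass

@dataclass
class AccessRequest:
    user_department: str
    resource_department: str
    action: str
    hour_of_day: int

# Hypothetical conditions; every one must hold for the request to be allowed.
CONDITIONS = [
    lambda r: r.user_department == r.resource_department,  # same department only
    lambda r: r.action in {"read", "list"},                # read-only actions
    lambda r: 8 <= r.hour_of_day < 18,                     # business hours
]

def is_allowed(request):
    """Allow the request only if all conditions are satisfied; otherwise deny."""
    return all(condition(request) for condition in CONDITIONS)

print(is_allowed(AccessRequest("finance", "finance", "read", 10)))    # True
print(is_allowed(AccessRequest("finance", "finance", "delete", 10)))  # False
```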

flash-cut migration

A database migration method that uses continuous data replication through change data capture to migrate data in the shortest time possible, instead of using a phased approach. The objective is to keep downtime to a minimum.

G

geo blocking

See geographic restrictions.

geographic restrictions (geo blocking)

In Amazon CloudFront, an option to prevent users in specific countries from accessing content distributions. You can use an allow list or block list to specify approved and banned countries. For more information, see Restricting the geographic distribution of your content in the CloudFront documentation.

Gitflow workflow

An approach in which lower and upper environments use different branches in a source code repository. The Gitflow workflow is considered legacy, and the trunk-based workflow is the modern, preferred approach.

greenfield strategy

The absence of existing infrastructure in a new environment. When adopting a greenfield strategy for a system architecture, you can select all new technologies without the restriction of compatibility with existing infrastructure, also known as brownfield. If you are expanding the existing infrastructure, you might blend brownfield and greenfield strategies.

guardrail

A high-level rule that helps govern resources, policies, and compliance across organizational units (OUs). Preventive guardrails enforce policies to ensure alignment to compliance standards. They are implemented by using service control policies and IAM permissions boundaries. Detective guardrails detect policy violations and compliance issues, and generate alerts for remediation. They are implemented by using AWS Config, AWS Security Hub, Amazon GuardDuty, AWS Trusted Advisor, Amazon Inspector, and custom AWS Lambda checks.

H

HA

See high availability.

heterogeneous database migration

Migrating your source database to a target database that uses a different database engine (for example, Oracle to Amazon Aurora). Heterogeneous migration is typically part of a re-architecting effort, and converting the schema can be a complex task. AWS provides AWS SCT, which helps with schema conversions.

high availability (HA)

The ability of a workload to operate continuously, without intervention, in the event of challenges or disasters. HA systems are designed to automatically fail over, consistently deliver high-quality performance, and handle different loads and failures with minimal performance impact.

historian modernization

An approach used to modernize and upgrade operational technology (OT) systems to better serve the needs of the manufacturing industry. A historian is a type of database that is used to collect and store data from various sources in a factory.

homogeneous database migration

Migrating your source database to a target database that shares the same database engine (for example, Microsoft SQL Server to Amazon RDS for SQL Server). Homogeneous migration is typically part of a rehosting or replatforming effort. You can use native database utilities to migrate the schema.

hot data

Data that is frequently accessed, such as real-time data or recent transactional data. This data typically requires a high-performance storage tier or class to provide fast query responses.


hotfix

An urgent fix for a critical issue in a production environment. Due to its urgency, a hotfix is usually made outside of the typical DevOps release workflow.

hypercare period

Immediately following cutover, the period of time when a migration team manages and monitors the migrated applications in the cloud in order to address any issues. Typically, this period is 1–4 days in length. At the end of the hypercare period, the migration team typically transfers responsibility for the applications to the cloud operations team.

I

IaC

See infrastructure as code.

identity-based policy

A policy attached to one or more IAM principals that defines their permissions within the AWS Cloud environment.

idle application

An application that has an average CPU and memory usage between 5 and 20 percent over a period of 90 days. In a migration project, it is common to retire these applications or retain them on premises.
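The thresholds in this definition can be expressed as a simple check during portfolio discovery. This is a minimal sketch. It assumes that you already have average CPU and memory utilization figures for each application, that both bounds are inclusive, and that applications observed for fewer than 90 days are not classified. The function name `is_idle` is hypothetical.

```python
def is_idle(avg_cpu_percent, avg_memory_percent, observation_days):
    """Return True if average CPU and memory usage are both between
    5 and 20 percent over an observation period of at least 90 days."""
    if observation_days < 90:
        return False  # assumption: too little history to classify
    return 5 <= avg_cpu_percent <= 20 and 5 <= avg_memory_percent <= 20

print(is_idle(12, 15, 90))  # True: candidate to retire or retain
print(is_idle(12, 35, 90))  # False: memory usage above 20 percent
```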

IIoT

See industrial Internet of Things.

immutable infrastructure

A model that deploys new infrastructure for production workloads instead of updating, patching, or modifying the existing infrastructure. Immutable infrastructures are inherently more consistent, reliable, and predictable than mutable infrastructure. For more information, see the Deploy using immutable infrastructure best practice in the AWS Well-Architected Framework.

inbound (ingress) VPC

In an AWS multi-account architecture, a VPC that accepts, inspects, and routes network connections from outside an application. The AWS Security Reference Architecture recommends setting up your Network account with inbound, outbound, and inspection VPCs to protect the two-way interface between your application and the broader internet.

incremental migration

A cutover strategy in which you migrate your application in small parts instead of performing a single, full cutover. For example, you might move only a few microservices or users to the new system initially. After you verify that everything is working properly, you can incrementally move additional microservices or users until you can decommission your legacy system. This strategy reduces the risks associated with large migrations.
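One common way to move "only a few users" first is deterministic, percentage-based routing. The following minimal sketch hashes each user ID into one of 100 buckets so that a user stays on the same system across requests while you raise the rollout percentage step by step. The function name, the user IDs, and the choice of SHA-256 are assumptions for illustration only.

```python
import hashlib

def routes_to_new_system(user_id, rollout_percent):
    """Return True if this user should be served by the new system.

    Hashing the user ID gives a stable bucket in the range 0-99, so each
    user stays on the same side until rollout_percent passes their bucket.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:2], "big") % 100
    return bucket < rollout_percent

# Hypothetical first wave: roughly 10 percent of users move to the new system.
users = [f"user-{i}" for i in range(1000)]
first_wave = [u for u in users if routes_to_new_system(u, 10)]
print(len(first_wave))
```

Because the bucket never changes, every user who moved at 10 percent is still on the new system at 50 percent, which keeps the incremental cutover predictable.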

Industry 4.0

A term that was introduced by Klaus Schwab in 2016 to refer to the modernization of manufacturing processes through advances in connectivity, real-time data, automation, analytics, and AI/ML.

infrastructure

All of the resources and assets contained within an application’s environment.

infrastructure as code (IaC)

The process of provisioning and managing an application’s infrastructure through a set of configuration files. IaC is designed to help you centralize infrastructure management, standardize resources, and scale quickly so that new environments are repeatable, reliable, and consistent.

industrial Internet of Things (IIoT)

The use of internet-connected sensors and devices in the industrial sectors, such as manufacturing, energy, automotive, healthcare, life sciences, and agriculture. For more information, see Building an industrial Internet of Things (IIoT) digital transformation strategy.

inspection VPC

In an AWS multi-account architecture, a centralized VPC that manages inspections of network traffic between VPCs (in the same or different AWS Regions), the internet, and on-premises networks. The AWS Security Reference Architecture recommends setting up your Network account with inbound, outbound, and inspection VPCs to protect the two-way interface between your application and the broader internet.


Internet of Things (IoT)

The network of connected physical objects with embedded sensors or processors that communicate with other devices and systems through the internet or over a local communication network. For more information, see What is IoT?

interpretability

A characteristic of a machine learning model that describes the degree to which a human can understand how the model’s predictions depend on its inputs. For more information, see Machine learning model interpretability with AWS.

IoT

See Internet of Things.

IT Infrastructure Library (ITIL)

A set of best practices for delivering IT services and aligning these services with business requirements. ITIL provides the foundation for ITSM.

IT service management (ITSM)

Activities associated with designing, implementing, managing, and supporting IT services for an organization. For information about integrating cloud operations with ITSM tools, see the operations integration guide.

ITIL

See IT Infrastructure Library.

ITSM

See IT service management.

L

label-based access control (LBAC)

An implementation of mandatory access control (MAC) where the users and the data itself are each explicitly assigned a security label value. The intersection between the user security label and data security label determines which rows and columns can be seen by the user.
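The following minimal sketch illustrates how comparing user and data labels can filter both rows and columns. It models each label as a set of compartments and assumes that a row or column is visible only when its label is a subset of the user's label. Real LBAC implementations define their own label components and comparison rules, and the data and labels here are hypothetical.

```python
# Hypothetical rows, each carrying a security label (a set of compartments).
ROWS = [
    {"id": 1, "region": "EU", "salary": 5000, "label": {"EU"}},
    {"id": 2, "region": "US", "salary": 6000, "label": {"US"}},
    {"id": 3, "region": "EU", "salary": 7000, "label": {"EU", "HR"}},
]
# Hypothetical column labels: an empty set means no restriction.
COLUMN_LABELS = {"id": set(), "region": set(), "salary": {"HR"}}

def visible(user_label, rows=ROWS, column_labels=COLUMN_LABELS):
    """Return only the rows and columns whose labels are covered by the user's label."""
    columns = [col for col, label in column_labels.items() if label <= user_label]
    return [
        {col: row[col] for col in columns}
        for row in rows
        if row["label"] <= user_label  # subset test on label sets
    ]

print(visible({"EU"}))        # [{'id': 1, 'region': 'EU'}]
print(visible({"EU", "HR"}))  # EU rows, including the salary column
```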


landing zone

A landing zone is a well-architected, multi-account AWS environment that is scalable and secure. This is a starting point from which your organization can quickly launch and deploy workloads and applications with confidence in their security and infrastructure environment. For more information about landing zones, see Setting up a secure and scalable multi-account AWS environment.

large migration

A migration of 300 or more servers.

LBAC

See label-based access control.

least privilege

The security best practice of granting the minimum permissions required to perform a task. For more information, see Apply least-privilege permissions in the IAM documentation.
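As a sketch of what "minimum permissions" can look like in practice, the following Python dictionary follows the IAM JSON policy grammar (`Version`, `Statement`, `Effect`, `Action`, `Resource`). It grants only read access to objects under one prefix, instead of a broad grant such as `s3:*` on all resources. The bucket name `example-bucket` and the `reports/` prefix are hypothetical.

```python
import json

# Least-privilege policy: a single read action, scoped to one prefix of one bucket.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-bucket/reports/*",  # hypothetical bucket
        }
    ],
}

print(json.dumps(policy, indent=2))
```

If a task later needs more access, you add the specific action and resource that it requires, rather than widening the policy to wildcards.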

lift and shift

See 7 Rs.

little-endian system

A system that stores the least significant byte first. See also endianness.
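To show byte order concretely, the following sketch encodes the same 32-bit integer both ways by using Python's built-in `int.to_bytes` and the `struct` module. The value `0x0A0B0C0D` is chosen only because its bytes are easy to read.

```python
import struct

value = 0x0A0B0C0D

little = value.to_bytes(4, byteorder="little")  # least significant byte first
big = value.to_bytes(4, byteorder="big")        # most significant byte first

print(little.hex())  # 0d0c0b0a
print(big.hex())     # 0a0b0c0d

# In struct format strings, "<" selects little-endian and ">" big-endian.
assert struct.pack("<I", value) == little
assert struct.pack(">I", value) == big
```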

lower environments

See environment.

M