U.S. Department of Health and Human Services ● Centers for Disease Control and Prevention ● National Center for Health Statistics

National Health Interview Survey (NHIS)

Early Release Program

Preliminary Evaluation of the Impact of the 2019 National Health Interview Survey Questionnaire Redesign and Weighting Adjustments on Early Release Program Estimates

Division of Health Interview Statistics

National Center for Health Statistics

Hyattsville, Maryland

Centers for Disease Control and Prevention

U.S. Department of Health and Human Services

Updated September 2020

Preliminary Evaluation of the Impact of 2019 NHIS Questionnaire Redesign and Weighting Adjustments Page | 2

Table of Contents

Introduction
2019 NHIS Questionnaire Redesign
Weighting Adjustments
NHIS Early Release Program
Analytic Approach
Results
    Diagnosed Hypertension
    Asthma Episode
    Regularly Experienced Chronic Pain
    Regularly Had Feelings of Worry, Nervousness, or Anxiety
    Regularly Had Feelings of Depression
    Disability Status
    Current Cigarette Smoking
    Current Electronic Cigarette Use
    Did Not Get Needed Medical Care Due to Cost
    Did Not Take Medication as Prescribed to Save Money
    Did Not Get Needed Mental Health Care Due to Cost
    Doctor Visit
    Hospital Emergency Department Visit
    Influenza Vaccination
    Blood Pressure Check
    Health Insurance Coverage
    Adults Living in Wireless-Only Households
Summary
References

Suggested citation:

National Center for Health Statistics. Preliminary evaluation of the impact of the 2019 National Health Interview Survey questionnaire redesign and weighting adjustments on Early Release Program estimates. Hyattsville, Maryland. September 2020.

Introduction

The National Center for Health Statistics’ (NCHS) National Health Interview Survey (NHIS) provides information

on the health of the civilian noninstitutionalized population of the United States through confidential interviews

conducted in households. NHIS is the nation’s oldest ongoing household-based health survey. Since 1957, it has

provided data for analyzing health trends and tracking progress toward achieving national health objectives.

In 2019, for the first time in over 20 years, the NHIS questionnaire was redesigned to increase relevance,

enhance data quality, and minimize respondent burden. The wording of some questions and answer choices was

revised to improve quality of measurement. Some questions were dropped, and others were added, changing

the context within which some questions were asked. And some questions are now answered directly by the

adult to whom they refer, rather than a family member responding on behalf of all family members. Perhaps as a

result of these changes, response rates increased and the characteristics of nonrespondents may have changed,

so a revised and updated method of adjusting sampling weights to account for nonresponse was implemented

concurrent with the questionnaire design changes.

Any differences observed between estimates for 2018 and 2019 may be due to real change in the population or

partly attributable to the 2019 NHIS questionnaire redesign and/or the updated weighting approach. This

working paper examines the effect of methods changes on the comparability of estimates over time, looking

specifically at estimates for the key indicators that are reported as part of the NHIS Early Release Program. It

examines whether the design changes appeared to impact these key estimates by comparing differences across

designs during October-December 2018 when both the prior design and a field test for the redesign were in the

field simultaneously. Moreover, it explores the impact of the updated weighting approach by examining

differences in estimates derived with the two different weighting approaches for the same data set (the

preliminary microdata files for January-December 2019). This working paper is an update to a previous version

of this report that was released on May 28, 2020; this update includes 2019 full-year Early Release estimates.

The potential impact of weighting and design effects will be further evaluated when final data become available.

This working paper is organized in the following manner. It starts with an overview of the questionnaire

redesign and how the structure of the questionnaire differs from the prior questionnaire design. Next, it briefly

describes the changes to the weighting approach. Then, it provides a description of the NHIS Early Release

Program. Following this background information, additional details are provided about the methods used to

examine whether the NHIS questionnaire redesign and updated weighting approach impacted the key estimates

from the Early Release Program. Results are then presented for each indicator and summarized at the end.

Preliminary conclusions are included in the results section regarding whether or not the 2019 NHIS

questionnaire redesign and the updated weighting approach were likely to have had an impact on each

indicator. These conclusions are based on the magnitude of the observed effect. The results of statistical

significance testing are reported and considered, but conclusions are not solely based on this testing.

When an impact of the questionnaire redesign and/or the updated weighting approach is likely, it may not be

appropriate to conclude that any observed difference or lack of difference reflects actual change or no change

over time. The conclusions presented here are preliminary and will be revisited in future NCHS reports. How the

findings of this report should be applied to specific analyses may vary depending on the objectives of that

analysis. The findings are provided to users so that they can make informed decisions about the use of the data.

2019 NHIS Questionnaire Redesign

The content and structure of the NHIS were updated in the redesign to better meet the needs of data users.

Aims of the redesign were to improve the measurement of covered health topics, reduce respondent burden by

shortening the length of the questionnaire, harmonize overlapping content with other federal health surveys,

establish a long-term structure of ongoing and periodic topics, and incorporate advances in survey methodology

and measurement.

One “sample adult” aged 18 years or older and one “sample child” aged 17 years or younger (if any children live

in the household) are randomly selected from each household following a brief initial interview that identifies

everyone who usually lives or stays in the household. Information about the sample adult is collected from the

sample adult herself or himself unless she or he is physically or mentally unable to do so, in which case a

knowledgeable proxy can answer for the sample adult. Information about the sample child is collected from a

parent or adult who is knowledgeable about and responsible for the health care of the sample child. This

respondent may or may not also be the sample adult.

From 1997 through 2018, the NHIS also included a family questionnaire. Questions from the family questionnaire,

including questions on health insurance and unmet needs for medical care, were answered by a member of the

family on behalf of all members of the family. In the redesigned survey, much of the content that was collected

about the sample adult and sample child in the family section is now collected within the sample adult and

sample child questionnaires, from the sample adult or sample child’s respondent rather than another family

member. Sample adults may give different answers about themselves than would a family member responding

for them, and this could impact survey estimates.

The elimination of the family questionnaire was one part of an effort to shorten the length of the questionnaire.

In addition, questions on rare conditions or service utilization (those with less than a 2% prevalence), questions

on topics that were no longer priorities, and questions that were rarely used in analysis were dropped. A

structure of annual and rotating content was also implemented. The annual core contains the same questions

from year to year, and these questions focus on demographic characteristics, health insurance, chronic

conditions, health care access and use, health-related behaviors, and functioning and disability. The rotating

core consists of questions that will be included in the interview periodically and includes expanded content on

mental health, service utilization, preventive services, health-related behaviors, injuries, and chronic pain. The

periodicity of questions in the rotating core varies by question and may be every other year, once every three

years, or two out of three years.

As a result of dropping questions and putting others on a rotating schedule, along with the addition of new

questions reflecting new priorities, the question order and the context of most questions (that is, the questions

that come immediately before) changed with the questionnaire redesign. These changes can affect how

subsequent questions are interpreted and responded to, and these effects could impact survey estimates.

For more details about the NHIS questionnaires, go to: https://www.cdc.gov/nchs/nhis/data-questionnaires-documentation.htm.

Weighting Adjustments

NHIS is a sample survey. That is, only a sample (subset) of the civilian noninstitutionalized population is selected

to participate in the survey. Additionally, not everyone selected to participate agrees to participate, which

affects the representativeness of the sample. In order to account for these two factors, sampling weights are

created. These sampling weights are used to produce representative national estimates. The weights reflect

several steps of adjustments starting with a base weight, which is inverse to the probability of selection.

Households or persons that are more likely to be selected are given lower weights so that the final estimates are

not biased by their increased likelihood of being selected.
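
As an illustration of the base-weight idea described above, the following Python sketch computes a base weight as the inverse of a selection probability and uses it in a weighted prevalence estimate. The selection probabilities, the eight-record sample, and the function names are hypothetical and are not drawn from NHIS. Actual NHIS weights reflect several further adjustment steps that are not shown here.

```python
def base_weight(selection_probability: float) -> float:
    """Base weight = 1 / probability of selection."""
    if not 0.0 < selection_probability <= 1.0:
        raise ValueError("selection probability must be in (0, 1]")
    return 1.0 / selection_probability


def weighted_prevalence(records):
    """Weighted percentage of records that have the characteristic.

    records: iterable of (has_characteristic, weight) pairs.
    """
    records = list(records)
    total = sum(weight for _, weight in records)
    positive = sum(weight for flag, weight in records if flag)
    return 100.0 * positive / total


# Hypothetical sample: group X is oversampled (p = 0.02), group Y is not (p = 0.01).
sample = [
    (True, base_weight(0.02)),
    (True, base_weight(0.02)),
    (False, base_weight(0.02)),
    (False, base_weight(0.02)),
    (True, base_weight(0.01)),
    (False, base_weight(0.01)),
    (False, base_weight(0.01)),
    (False, base_weight(0.01)),
]

# The unweighted percentage over-represents the oversampled group.
unweighted = 100.0 * sum(flag for flag, _ in sample) / len(sample)
weighted = weighted_prevalence(sample)
print(f"unweighted = {unweighted:.1f}%, weighted = {weighted:.1f}%")
```

In this hypothetical sample the oversampled group has the higher prevalence, so the weighted estimate is lower than the unweighted one: the lower weights offset that group's higher chance of selection.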

These base weights are then adjusted for nonresponse patterns, or the different response rates among different

household and person-level subgroups. The 2019 questionnaire redesign provided an opportunity to evaluate

the adjustment approach that had been in place since 1997. At that time and continuing through 2018, the

adjustment approach was based on geography; the weights for households and persons in geographic areas

with lower response rates were increased more than for those in areas with higher response rates. That way,

final estimates were not biased by the latter group’s increased likelihood of participation. More sophisticated

methods are now available (1, 2), and based on the evaluation, the weighting process for 2019 was updated. The

updated approach for nonresponse adjustment uses multilevel regression models that consider numerous

variables that are predictive of both survey response and selected key health outcomes, the key criteria for

effective bias reduction.
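
The geography-based adjustment used through 2018 can be illustrated with a generic weighting-class adjustment, in which respondents' weights are inflated by the inverse of the weighted response rate in their area. The Python sketch below is a textbook simplification under stated assumptions, not the NCHS production procedure. The area names, weights, and function name are hypothetical, and the multilevel regression approach adopted for 2019 is not shown.

```python
from collections import defaultdict


def nonresponse_adjust(units):
    """Weighting-class nonresponse adjustment by geographic area.

    units: list of dicts with keys 'area', 'base_weight', 'responded'.
    Returns a list of (area, adjusted_weight) tuples for respondents only.
    """
    selected_total = defaultdict(float)    # base weight of all selected units
    respondent_total = defaultdict(float)  # base weight of responding units
    for u in units:
        selected_total[u["area"]] += u["base_weight"]
        if u["responded"]:
            respondent_total[u["area"]] += u["base_weight"]

    adjusted = []
    for u in units:
        if u["responded"]:
            # Inverse of the weighted response rate in the unit's area.
            factor = selected_total[u["area"]] / respondent_total[u["area"]]
            adjusted.append((u["area"], u["base_weight"] * factor))
    return adjusted


# Hypothetical data: "north" has a 50% response rate, "south" has 100%.
units = (
    [{"area": "north", "base_weight": 100.0, "responded": r}
     for r in (True, True, False, False)]
    + [{"area": "south", "base_weight": 100.0, "responded": True}
       for _ in range(4)]
)
adjusted = nonresponse_adjust(units)
for area, weight in adjusted:
    print(area, weight)
```

Respondents in the lower-response area receive the larger adjustment, and the adjusted respondent weights sum to the total base weight of everyone selected.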

Finally, the nonresponse adjusted weights are calibrated to U.S. Census Bureau population projections and

American Community Survey (ACS) one-year estimates for age, sex, race and ethnicity, educational attainment,

Census division, and Metropolitan Statistical Area status. Prior to 2019, calibration was only to age, sex, and race

and ethnicity population projections. These changes to the nonresponse adjustment approach and the

calibration methods have the potential to impact the weighted survey estimates.
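
This report does not state which calibration algorithm is used. One common technique for calibrating weights to several population margins at once is raking (iterative proportional fitting), sketched below in Python as an illustration only. The two calibration variables, the control totals, and the function name are hypothetical. NHIS calibrates to more dimensions than shown here.

```python
def rake(records, margins, iterations=50):
    """Rake weights so that weighted totals match each set of control totals.

    records: list of dicts with a 'weight' key plus one key per calibration variable.
    margins: {variable: {category: control_total}}.
    Returns the list of calibrated weights, in record order.
    """
    weights = [r["weight"] for r in records]
    for _ in range(iterations):
        for variable, targets in margins.items():
            # Current weighted total in each category of this variable.
            current = {}
            for r, w in zip(records, weights):
                current[r[variable]] = current.get(r[variable], 0.0) + w
            # Scale each weight so the category totals hit the control totals.
            weights = [
                w * targets[r[variable]] / current[r[variable]]
                for r, w in zip(records, weights)
            ]
    return weights


# Hypothetical nonresponse-adjusted weights and population control totals.
records = [
    {"sex": "F", "age": "18-64", "weight": 100.0},
    {"sex": "F", "age": "65+", "weight": 100.0},
    {"sex": "M", "age": "18-64", "weight": 100.0},
    {"sex": "M", "age": "65+", "weight": 100.0},
]
margins = {
    "sex": {"F": 220.0, "M": 180.0},
    "age": {"18-64": 300.0, "65+": 100.0},
}
calibrated = rake(records, margins)
print([round(w, 1) for w in calibrated])
```

Adding calibration variables, as was done in 2019 with educational attainment, Census division, and Metropolitan Statistical Area status, corresponds to adding entries to `margins` in this sketch.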

NHIS Early Release Program

Since its inception in 2001, the NHIS Early Release Program has provided the public with estimates, analytic reports,

and preliminary microdata files on an expedited schedule. NHIS data users can have access to these timely

estimates, reports, and preliminary microdata files on selected key health indicators without having to wait for

the release of the final annual NCHS microdata files. Table 1 summarizes the 19 indicators that were included in

the Early Release Program prior to the 2019 NHIS questionnaire redesign and the calendar year that they were

added to the Early Release Program.

Table 1. Key indicators included in the NHIS Early Release Program prior to the 2019 questionnaire redesign, by topic subgroup and calendar year introduced to the Early Release Program

Indicator                                               Calendar year
Health Status
  General health status                                 2001
  Needing help with personal care from other persons    2002
  Obesity                                               2001
  Serious psychological distress                        2003
  Diagnosed diabetes                                    2003
  Current asthma                                        2004
  Asthma episode                                        2003
Health Behaviors
  Excessive alcohol consumption                         2001
  Current smoking                                       2001
  Leisure-time physical activity                        2001
Health Care Access
  Usual source of medical care                          2001
  Unable to obtain needed medical care due to cost      2001
Health Care Service Utilization
  HIV testing                                           2001
  Influenza vaccination                                 2001
  Pneumococcal vaccination                              2001
Health Insurance Coverage
  Lack of health insurance                              2001
  Public health plan coverage                           2002
  Private health insurance coverage                     2002
Telephone Ownership
  Adults living in wireless-only households             2007

These estimates were released as a series of three products. On a quarterly basis, cumulative preliminary

estimates of health insurance coverage and selected key health indicators were published. On a semiannual basis,

six-month estimates on the prevalence of adults living in households with only wireless telephone service were

published.

As part of the 2019 NHIS questionnaire redesign, the Early Release Program content was reviewed. With input

from the NCHS Board of Scientific Counselors and NCHS leadership, 18 key health indicators were selected from

the redesigned questionnaire to include in the Early Release Program in addition to the established content on

health insurance coverage and telephone ownership. Table 2 summarizes these 22 indicators and the calendar

year that they were added to the Early Release Program.

Table 2. Key indicators included in the redesigned NHIS Early Release Program, by topic subgroup and calendar year introduced to the Early Release Program

Indicator                                                                                   Calendar year
Health Status
  Diagnosed hypertension in the past 12 months                                              2019
  Asthma episode in the past 12 months                                                      2003
  Six or more workdays missed due to illness, injury or disability in the past 12 months    2019
  Regularly experienced chronic pain                                                        2019
  Regularly had feelings of worry, nervousness, or anxiety                                  2019
  Regularly had feelings of depression                                                      2019
  Disability status                                                                         2019
Health Behaviors
  Current cigarette smoking                                                                 2001
  Current electronic cigarette use                                                          2019
Health Care Access
  Did not get needed medical care due to cost in the past 12 months                         2001
  Did not take medication as prescribed to save money in the past 12 months                 2019
  Did not get needed mental health care due to cost in the past 12 months                   2019
Health Care Service Utilization
  Doctor visit in the past 12 months                                                        2019
  Hospital emergency department visit in the past 12 months                                 2019
  Receipt of influenza vaccination in the past 12 months                                    2001
  Blood pressure check in the past 12 months                                                2019
  Counseled by a mental health professional in the past 12 months                           2019
  Dental exam or cleaning in the past 12 months                                             2019
Health Insurance Coverage
  Lack of health insurance                                                                  2001
  Public health plan coverage                                                               2002
  Private health insurance coverage                                                         2002
Telephone Ownership
  Adults living in wireless-only households                                                 2007

Eight (42.1%) of the original 19 Early Release indicators were retained as part of the Early Release Program

(asthma episode, cigarette smoking, did not get needed medical care due to cost, influenza vaccination, lack of

health insurance, public health plan coverage, private health insurance coverage, and adults living in wireless-only

households). Fourteen new measures complemented these topics while also addressing timely and

important aspects of health and well-being, such as functioning and disability, mental health, electronic

cigarette use, and strategies used to reduce prescription drug costs. The selected measures generally reflect

current or recent health status or experiences with the health care system, may be sensitive to policy changes,

and are representative of important issues such as preventive care and cost-related barriers to care. Timely data

on the prevalence of adults living in wireless-only households are important for health survey researchers using

telephone surveys, to ensure that they adequately represent this population in their samples.

While data on each of these 22 measures were available prior to the 2019 questionnaire redesign, changes to

the wording of the questions and response options, changes to the location and context of the questions, and

changes in who answered the questions could affect the estimates and explain (at least in part) any observed

differences between 2018 and 2019. For three of these new Early Release indicators—“workdays missed”,

“dental exam or cleaning” and “counseled by a mental health professional”—the changes to both the location

and wording of the questions were so substantial that no comparison between 2018 and 2019 is recommended.

However, for the remaining 19 indicators, the changes to the location, wording, or respondent were relatively

minor. Nevertheless, for these indicators, a preliminary evaluation was conducted to assess whether the

questionnaire redesign, the weighting process, or both had an observable impact on the overall national

estimates.

Analytic Approach

As described earlier, the goal of this analysis is to determine how the comparability of 2018 and 2019 estimates

may have been affected by the questionnaire redesign and the change in the weighting approach. These effects

can be evaluated by examining differences among prevalence estimates that vary by questionnaire design or

weighting approach. Table 3 presents a series of five prevalence estimates (and 90% confidence intervals) for 19

Early Release indicators:

A. 2018 Production Estimates (Old Weight) – These are the official estimates for the key indicators based on 2018 data. They were derived using the former (2018) weighting approach. Data from 25,417 sample adults were used for these estimates.

B. 2018 Quarter 4 Production Estimates (Old Weight) – These estimates are derived in a manner similar to the 2018 Production estimates, but they are based only on data from 4,074 sample adults who were interviewed from October-December 2018.

C. 2018 Quarter 4 Bridge Estimates (Old Weight) – From October-December 2018, NCHS conducted a small-scale test of the redesigned NHIS questionnaire to evaluate procedures and identify potential problems. This test was conducted nationally; data were collected from a nationally representative sample of 4,428 sample adults. The overlap in the data collection period of the field test with the 2018 data collection serves as a “bridge” between the two designs, and the two production periods (2018 and 2019). Estimates from this test, derived using the former (2018) weighting approach, are hereafter referred to as bridge estimates.

D. 2019 Full-year Early Release Estimates (Old Weight) – These estimates are derived in a manner similar to the official 2019 Early Release estimates, but they are based on application of the former (2018) weighting approach to the data. Data from 32,391 sample adults were used for these estimates.

E. 2019 Full-year Early Release Estimates (New Weight) – These are the official estimates for the key indicators based on Early Release data from 2019. They are derived using the updated weighting approach. Data from 32,391 sample adults were used for these estimates.

Table 3. Key estimates (and 90% confidence intervals) for adults by time period, questionnaire design, and weighting approach

Columns: (A) 2018 Full-year Production; (B) 2018 Quarter 4 Production; (C) 2018 Quarter 4 Bridge; (D) 2019 Full-year Early Release, Old weight; (E) 2019 Full-year Early Release, New weight; C - B = Within 2018 Q4 Difference; E - D = Weighting Difference.

Indicator                                                   (A)               (B)               (C)               (D)               (E)               C - B   E - D
Health Status
  Diagnosed hypertension                                    27.0 (26.4-27.6)  27.4 (26.0-28.7)  25.6 (24.4-26.9)  26.6 (26.0-27.1)  27.1 (26.6-27.7)  -1.8    0.5
  Asthma episode                                            3.5 (3.3-3.8)     3.8 (3.2-4.5)     3.5 (3.1-4.0)     3.3 (3.1-3.6)     3.4 (3.1-3.6)     -0.3    0.1
  Regularly experienced chronic pain                        20.7 (20.2-21.3)  21.6 (20.3-22.9)  19.7 (18.6-20.8)  20.0 (19.5-20.5)  20.6 (20.1-21.2)  -1.9‡   0.6
  Regularly had feelings of worry, nervousness, or anxiety  8.6 (8.2-9.0)     9.0 (8.0-10.0)    11.1 (10.2-12.0)  10.9 (10.6-11.3)  11.2 (10.8-11.5)  2.1*    0.3
  Regularly had feelings of depression                      4.4 (4.2-4.7)     4.7 (4.1-5.4)     4.2 (3.7-4.8)     4.6 (4.4-4.8)     4.7 (4.5-5.0)     -0.5    0.1
  Disability status                                         10.2 (9.8-10.6)   9.9 (9.0-10.8)    8.9 (8.1-9.7)     8.6 (8.3-9.0)     9.2 (8.8-9.6)     -1.0    0.6‡
Health Behaviors
  Current cigarette smoking                                 13.7 (13.3-14.2)  13.3 (12.3-14.4)  12.8 (11.8-13.8)  13.2 (12.8-13.7)  14.2 (13.8-14.7)  -0.5    1.0**
  Current electronic cigarette use                          3.2 (3.0-3.5)     3.3 (2.7-3.9)     4.3 (3.7-5.0)     4.3 (4.0-4.6)     4.4 (4.1-4.7)     1.0‡    0.1
Health Care Access
  Did not get needed medical care due to cost               6.4 (6.1-6.7)     6.3 (5.6-7.0)     8.2 (7.2-9.3)     7.8 (7.5-8.2)     8.3 (8.0-8.7)     1.9*    0.5‡
  Did not take medication as prescribed to save money       9.2 (8.8-9.7)     9.2 (8.1-10.3)    9.1 (8.2-10.2)    9.2 (8.7-9.6)     9.7 (9.2-10.1)    -0.1    0.5
  Did not get needed mental health care due to cost         2.6 (2.4-2.9)     3.4 (2.8-4.0)     4.9 (4.3-5.6)     4.4 (4.1-4.6)     4.3 (4.1-4.6)     1.5**   -0.1
Health Care Service Utilization
  Doctor visit                                              84.3 (83.8-84.9)  85.2 (83.9-86.4)  84.2 (82.9-85.4)  85.2 (84.8-85.6)  84.9 (84.5-85.3)  -1.0    -0.3
  Hospital emergency department visit                       21.3 (20.8-21.9)  21.3 (20.0-22.6)  21.8 (20.6-23.0)  21.3 (20.8-21.7)  21.8 (21.4-22.3)  0.5     0.5
  Influenza vaccination                                     44.6 (43.9-45.3)  44.2 (42.6-45.9)  45.0 (43.4-46.5)  47.8 (47.0-48.5)  46.8 (46.1-47.5)  0.8     -1.0
  Blood pressure check                                      84.6 (84.1-85.1)  85.8 (84.6-87.0)  86.6 (85.4-87.7)  88.3 (87.9-88.8)  88.1 (87.6-88.5)  0.8     -0.2
Health Insurance Coverage (18-64 years)¹
  Lack of health insurance                                  13.3 (12.6-13.9)  14.0 (13.0-15.1)  13.8 (12.1-15.5)  13.8 (13.2-14.4)  14.7 (14.0-15.3)  -0.2    0.9‡
  Public health plan coverage                               19.4 (18.9-20.0)  18.6 (17.5-19.7)  17.5 (16.2-18.8)  19.0 (18.4-19.7)  20.4 (19.7-21.1)  -1.1    1.4*
  Private health insurance coverage                         68.9 (68.2-69.7)  68.7 (67.3-70.1)  71.0 (69.2-72.7)  69.0 (68.2-69.8)  66.8 (65.9-67.7)  2.3‡    -2.2**
Telephone Ownership
  Adults living in wireless-only households                 56.0 (55.2-56.8)  57.0 (55.5-58.6)  58.0 (56.5-59.5)  59.9 (58.9-60.9)  60.2 (59.2-61.2)  1.0     0.3

‡ p < 0.10; * p < 0.05; ** p < 0.01

¹ For the health insurance coverage indicators, the 2018 full-year production and 2018 quarter 4 production estimates are preliminary estimates from the National Health Interview Survey Early Release Program, rather than final estimates. See text for further information.

SOURCES: NCHS, National Health Interview Survey, 2018, 2018 Quarter 4 Test, and 2019

Health policy analysts and other public health professionals are generally interested in whether there are

changes over time in the official estimates for the key indicators. That is, they will want to know whether the

2019 estimates are higher, lower, or statistically similar to the 2018 estimates. However, as noted earlier, any

differences observed between estimates for 2018 (Column A) and 2019 (Column E) may be partly attributable to

the 2019 NHIS questionnaire redesign, the updated weighting approach, or both, or they may reflect an actual

change over time. If questionnaire design effects or weighting effects are observable, it may not be appropriate

to compare the 2018 and 2019 estimates and conclude that the observed difference (or lack of difference)

reflects an actual change (or lack of change) over time. Health policy analysts are also interested in longer-term

trends in addition to year-to-year changes. While design or weighting changes may affect year-to-year

comparisons, these changes may have less impact on ascertaining longer-term trends.

Table 3 highlights two comparisons of interest in assessing the potential effect of the questionnaire redesign and

updated weighting approach on the official estimates. First, for each indicator, the potential effect of the

questionnaire design was examined by comparing estimates across designs during October-December 2018, a

time when both the prior design and the field test of the redesign were in the field simultaneously. That is, the

2018 Quarter 4 Production estimate (Column B) was compared to the 2018 Quarter 4 Bridge estimate (Column

C), and the statistical significance was tested. The same weighting approach was used for both data sets.
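
Readers working only from the published values in Table 3 can approximate the Column B versus Column C comparison as follows. This Python sketch recovers a standard error from each 90% confidence interval and applies a two-sample z-test, on the assumption that the production and bridge samples are independent. It is a rough approximation, not the procedure used for this report: the published intervals are rounded, and the function names are hypothetical. It does not apply to the Column D versus Column E comparison, where both estimates come from the same respondents.

```python
import math

Z_90 = 1.6449  # normal critical value for a two-sided 90% confidence interval


def se_from_ci(lower, upper, z=Z_90):
    """Approximate standard error recovered from a symmetric confidence interval."""
    return (upper - lower) / (2.0 * z)


def two_sided_p(z):
    """Two-sided p-value for a standard normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def compare_independent(est_1, ci_1, est_2, ci_2):
    """Return (difference, z, p) for est_2 - est_1, assuming independent samples."""
    diff = est_2 - est_1
    se_diff = math.hypot(se_from_ci(*ci_1), se_from_ci(*ci_2))
    z = diff / se_diff
    return diff, z, two_sided_p(z)


# Regularly experienced chronic pain, Table 3: Column B versus Column C.
diff, z, p = compare_independent(21.6, (20.3, 22.9), 19.7, (18.6, 20.8))
print(f"difference = {diff:.1f}, z = {z:.2f}, p = {p:.3f}")
```

With these rounded inputs, the approximate p-value falls between 0.05 and 0.10, in line with the ‡ marker shown for this indicator in Table 3.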

Second, for each indicator, the potential impact of the updated weighting approach was examined by comparing

estimates derived with the two different weighting approaches for the same data set. That is, the 2019 Full-year

Early Release estimate based on the old weight (Column D) was compared to the 2019 Full-year Early Release

estimate based on the new weight (Column E), and the statistical significance was tested. In this analysis, both

estimates are derived from the same questionnaire design.

In addition, for each indicator, the potential impact of both the questionnaire redesign and updated weighting

approach on longer-term trends was explored by examining line graphs of six-month interval estimates from 2015-2019.

These line graphs, along with the information presented in Table 3, help evaluate longer-term trends

through 2019. The figures show the observed estimates for each time period and the predicted estimate if no

methodological changes had been made based on the net effect of the design and weighting changes. Note that

the predicted estimates are subject to sampling error (not shown on the figures) and will differ from what would

have been observed had no changes been made in 2019. Conclusions as to the extent of the impact of the

design and weighting changes on trends or differences between 2018 and 2019 should take this into

consideration. Detailed information on the effect of the methodologic changes is provided so readers can reach

their own conclusions as to how to interpret trends. The comparison between the January-June 2019 and July-

December 2019 estimates describes any change occurring during the calendar year. This information can be

used in conjunction with changes during the previous period to determine whether an existing increasing or

decreasing trend may have continued through 2019 in light of possible methodological impacts.
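The arithmetic behind the two comparisons and the predicted values can be sketched as follows. This is a minimal illustration, not the production estimation code. The class and function names are hypothetical, and the inputs are the published one-decimal estimates for diagnosed hypertension reported in the Results. The rule that a predicted value equals the observed 2019 estimate minus the combined impact is an assumption; it is consistent with the values plotted in the figures but is not stated as a formula in this report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndicatorEstimates:
    """Published point estimates (percent) for one indicator.

    Field names are hypothetical; the letters refer to the table columns.
    """
    q4_2018_production: float    # Column B
    q4_2018_bridge: float        # Column C
    full_2019_old_weight: float  # Column D
    full_2019_new_weight: float  # Column E


def design_difference(est: IndicatorEstimates) -> float:
    """Within 2018 Quarter 4 difference: bridge minus production (C - B)."""
    return round(est.q4_2018_bridge - est.q4_2018_production, 1)


def weighting_difference(est: IndicatorEstimates) -> float:
    """Weighting difference: new weight minus old weight (E - D)."""
    return round(est.full_2019_new_weight - est.full_2019_old_weight, 1)


def combined_impact(est: IndicatorEstimates) -> float:
    """Net effect of the design and weighting changes (F + G)."""
    return round(design_difference(est) + weighting_difference(est), 1)


def predicted_without_changes(observed: float, impact: float) -> float:
    """Assumed rule: remove the net methodological effect from an observed estimate."""
    return round(observed - impact, 1)


# Diagnosed hypertension, Columns B through E.
hypertension = IndicatorEstimates(27.4, 25.6, 26.6, 27.1)
impact = combined_impact(hypertension)
print(design_difference(hypertension), weighting_difference(hypertension), impact)
for period, observed in [("Jan-June 2019", 26.8), ("July-Dec 2019", 27.5)]:
    print(period, predicted_without_changes(observed, impact))
```

Because the inputs are rounded to one decimal place, differences computed this way can differ slightly from differences computed on unrounded estimates, and the predicted values remain subject to sampling error.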

All statistical tests used a two-sided alpha level of 0.10 rather than the conventional 0.05 level to determine

statistical significance. This decision leads to identification of more significant differences and more conservative

conclusions. That is, one is more likely to conclude that questionnaire design effects and weighting effects exist

for a greater number of variables. This approach is a reasonably cautious one, suitable for this look at

preliminary data and the relatively small sample sizes available for the 2018 Quarter 4 comparisons. Readers

who wish to use an alternative approach will find the actual p-values identified in the text and footnotes for

alpha levels of 0.05 and 0.01 in the tables.
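A reader who prefers a different threshold can classify each reported p-value against several alpha levels at once. The sketch below is illustrative only. The function name is hypothetical, and treating a result as significant when the p-value is strictly below alpha is an assumption about the decision rule. Published p-values are rounded, so a value reported exactly at a threshold cannot be classified with certainty.

```python
from typing import Dict, Tuple

# Alpha levels named in the text: 0.10 (used here), 0.05, and 0.01.
ALPHA_LEVELS: Tuple[float, ...] = (0.10, 0.05, 0.01)


def significant_at(
    p_value: float, alphas: Tuple[float, ...] = ALPHA_LEVELS
) -> Dict[float, bool]:
    """Return, for each alpha level, whether a two-sided p-value falls below it."""
    if not 0.0 <= p_value <= 1.0:
        raise ValueError("p_value must be between 0 and 1")
    return {alpha: p_value < alpha for alpha in alphas}


# Example: a comparison reported with p = 0.07 is significant at the 0.10
# level used in this report but not at the 0.05 or 0.01 levels.
print(significant_at(0.07))
```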

Reports from the Early Release Program for key health indicators have generally presented both unadjusted

(crude) and age-adjusted estimates in tables, but only unadjusted estimates when examining trends over time.

Similarly, the estimates and comparisons presented here are based on unadjusted estimates only.

Results

Diagnosed Hypertension

Questionnaire changes between 2018 and 2019: This indicator is based on a series of questions that ask sample

adult respondents if they have a) ever been told by a doctor or health professional that they had hypertension,

b) been told on two or more different visits that they had hypertension, c) had hypertension during the past 12

months, or d) are now taking any medication prescribed by a doctor for their high blood pressure. In 2018, there

was an additional question that asked whether any medicine was ever prescribed by a doctor for their high

blood pressure. This additional question preceded the current medication question, and the current medication

question was asked only of those who responded yes to the preceding question. The 2019 NHIS did not ask

whether medicine was ever prescribed by a doctor but included the other four questions as in 2018.

This indicator is new to the Early Release Program and reflects sample adults who either a) had been told they

had hypertension on two or more doctor visits and had hypertension during the past 12 months, or b) had ever

had hypertension and are now taking medication for their high blood pressure.
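The two-part definition above can be expressed as a simple Boolean rule. The sketch below is only an illustration: the function and parameter names are hypothetical rather than NHIS variable names, and it assumes each response has already been coded as yes (True) or no (False), with refused or unknown responses handled separately.

```python
def has_diagnosed_hypertension(
    ever_told: bool,
    told_on_two_or_more_visits: bool,
    had_in_past_12_months: bool,
    now_taking_medication: bool,
) -> bool:
    """Apply the two-part definition of the diagnosed hypertension indicator."""
    # a) told on two or more visits AND had hypertension in the past 12 months
    confirmed_and_recent = told_on_two_or_more_visits and had_in_past_12_months
    # b) ever had hypertension AND now taking medication for high blood pressure
    ever_and_medicated = ever_told and now_taking_medication
    return confirmed_and_recent or ever_and_medicated


# A respondent who was ever told they had hypertension and now takes medication
# is counted even without a report of hypertension in the past 12 months.
print(has_diagnosed_hypertension(True, False, False, True))
```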

| (A) 2018 Full-year Production | (B) 2018 Quarter 4 Production | (C) 2018 Quarter 4 Bridge | (D) 2019 Full-year Early Release, Old weight | (E) 2019 Full-year Early Release, New weight | (F) Within 2018 Q4 Difference (C - B) | (G) Weighting Difference (E - D) | Combined Impact (F + G) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 27.0 (26.4 - 27.6) | 27.4 (26.0 - 28.7) | 25.6 (24.4 - 26.9) | 26.6 (26.0 - 27.1) | 27.1 (26.6 - 27.7) | -1.8 | 0.5 | -1.3 |

Preliminary analysis:

• In 2018, the percentage of adults who had diagnosed hypertension in the past 12 months or were taking

medication to control it was 27.0%. In 2019, the full-year estimate with the new weight was 27.1% (see

Table). This difference was not statistically significant (p = 0.84).

• During the fourth quarter of 2018, the production estimate (27.4%) was 1.8 percentage points higher

than the bridge estimate (25.6%). This difference suggests a possible questionnaire design effect, but it

did not reach statistical significance (p = 0.11).

• The 2019 full-year estimate with the old weight (26.6%) was lower than, but not significantly different

from, the 2019 full-year estimate with the new weight (27.1%). This result (p = 0.28) suggests that the

weighting adjustment was unlikely to affect estimates for this indicator.

• Considered together, estimates for 2019 may have shifted downward by 1.3 percentage points due to

the methodological change. This shift may have masked an actual increase from 2018 to 2019.

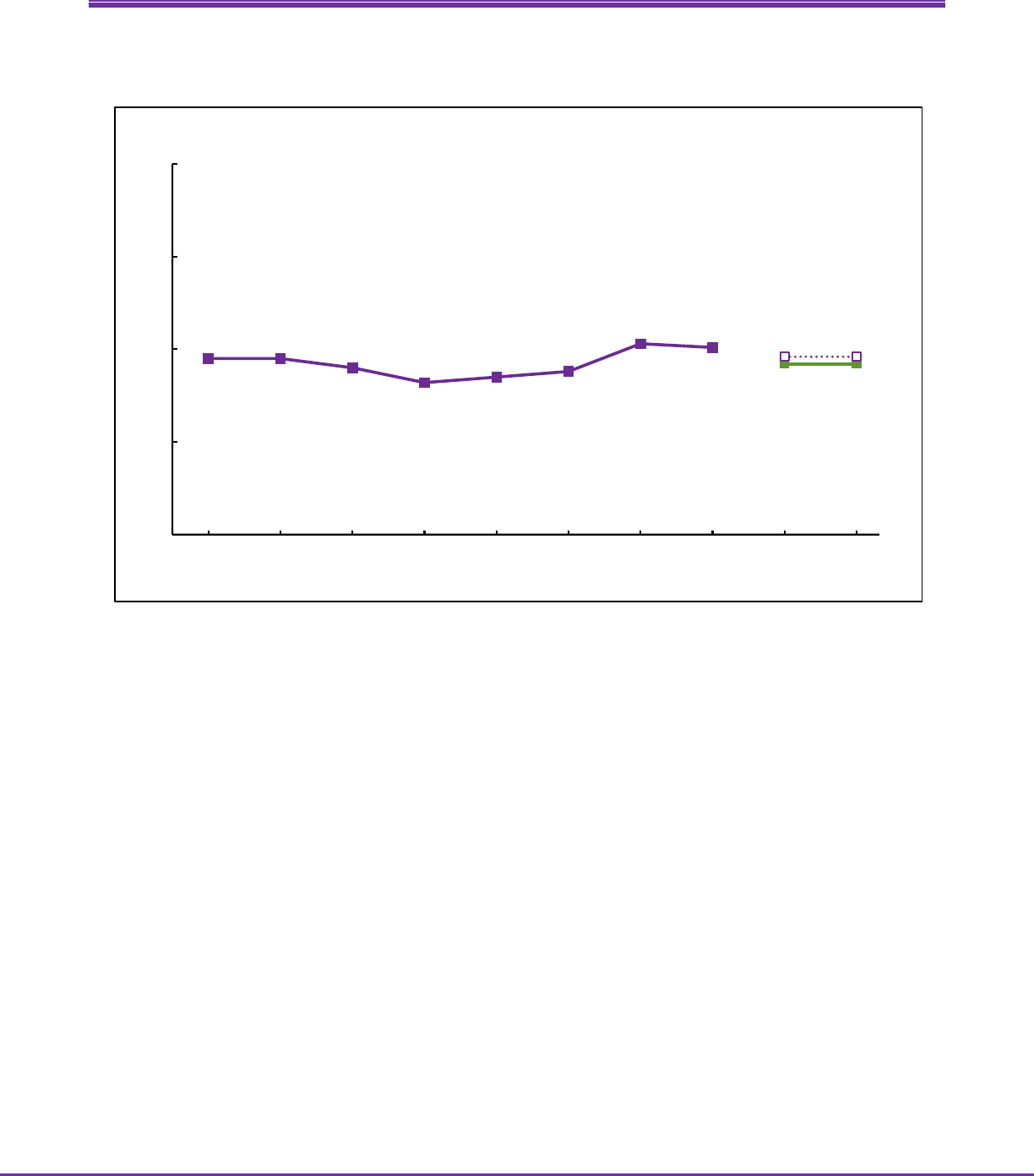

• There is no clear trend from 2015 to 2018 (see Figure). Given the potential impact of the change in the

methodology, it is not possible to determine if there was an actual increase between 2018 and 2019.

• The observed increase from January-June 2019 (26.8%) to July-December 2019 (27.5%) would be

consistent with an increase starting in 2018. However, the observed increase between the two six-

month estimates was not statistically significant (p = 0.26).

Figure. Percentage of adults with diagnosed hypertension, by six-month interval, 2015-2019 (vertical axis: percent).

Final estimates, 2015-2018: Jan-June 2015, 27.1; July-Dec 2015, 26.1; Jan-June 2016, 26.6; July-Dec 2016, 26.9; Jan-June 2017, 26.9; July-Dec 2017, 26.1; Jan-June 2018, 27.2; July-Dec 2018, 26.9.

Early Release estimates, 2019: Jan-June 2019, 26.8; July-Dec 2019, 27.5.

Predicted values in the absence of methodological changes: Jan-June 2019, 28.1; July-Dec 2019, 28.8.

SOURCE: NCHS, National Health Interview Survey.

Asthma Episode

Questionnaire changes between 2018 and 2019: The two questions used for this indicator were unchanged

from 2018 to 2019. Sample adult respondents were asked, “Have you ever been told by a doctor or other health

professional that you had asthma?” If yes, they were asked “During the past 12 months, have you had an

episode of asthma or an asthma attack?”

This indicator is one of eight from the original Early Release Program and reflects sample adults who had an

asthma episode or attack in the past 12 months.

| (A) 2018 Full-year Production | (B) 2018 Quarter 4 Production | (C) 2018 Quarter 4 Bridge | (D) 2019 Full-year Early Release, Old weight | (E) 2019 Full-year Early Release, New weight | (F) Within 2018 Q4 Difference (C - B) | (G) Weighting Difference (E - D) | Combined Impact (F + G) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 3.5 (3.3 - 3.8) | 3.8 (3.2 - 4.5) | 3.5 (3.1 - 4.0) | 3.3 (3.1 - 3.6) | 3.4 (3.1 - 3.6) | -0.3 | 0.1 | -0.2 |

Preliminary analysis:

• In 2018, the percentage of adults who had an asthma episode or attack in the past 12 months was 3.5%.

The 2019 full-year estimate with the new weight was 3.4% (see Table). This difference was not

statistically significant (p = 0.61).

• During the fourth quarter of 2018, the production and bridge estimates for this indicator were similar

(3.8% and 3.5%, respectively). This result (p = 0.53) suggests that a questionnaire design effect is

unlikely.

• The 2019 full-year estimates using the old and new weights were similar (3.3% and 3.4%, respectively).

This result (p = 0.60) suggests that the weighting adjustment did not substantially affect estimates for

this indicator.

• Considered together, estimates for 2019 may have shifted downward by 0.2 percentage points due to

the methodological change.

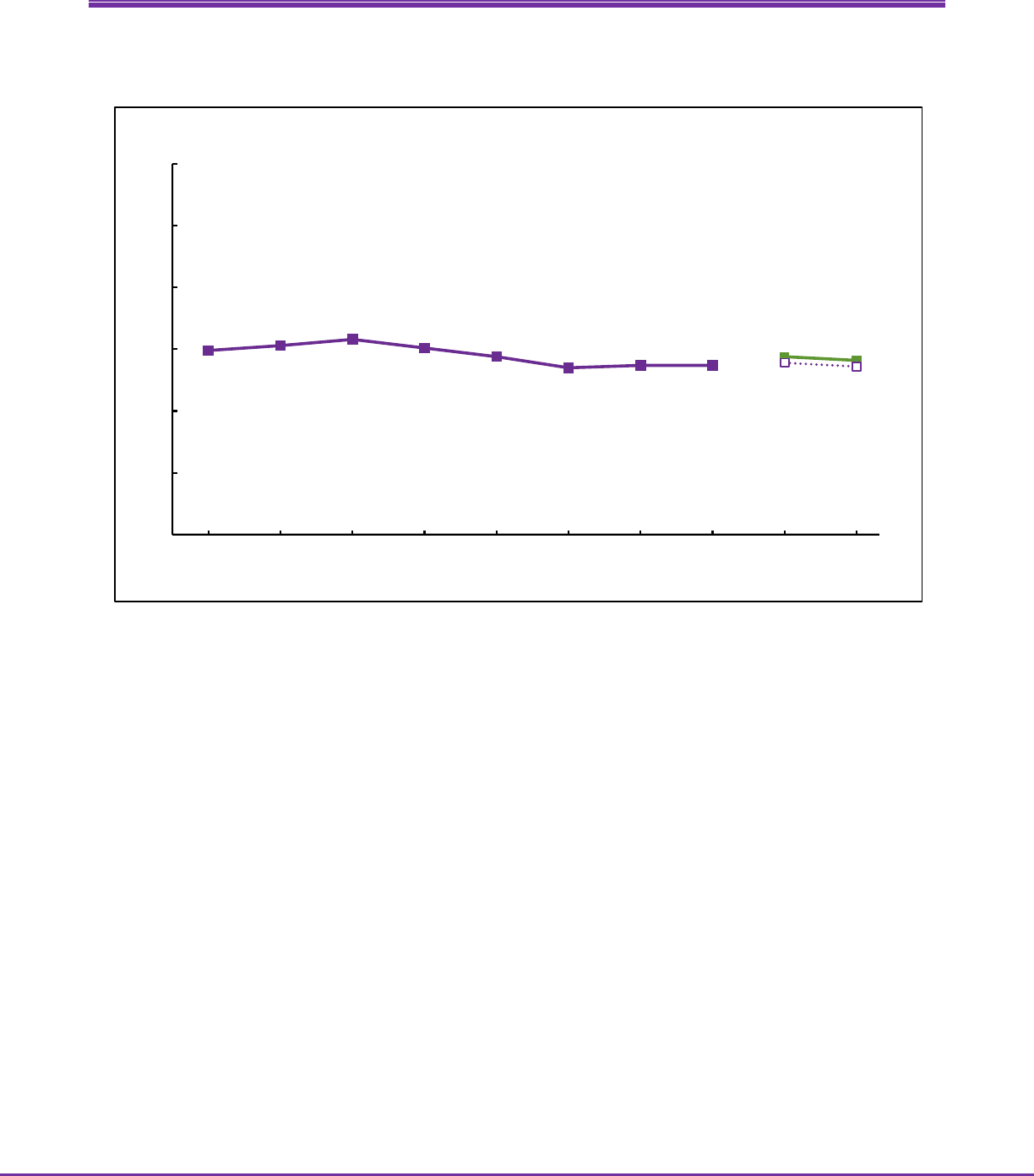

• The prevalence of an asthma episode or attack declined from 2016 through 2017. There has been no

clear trend since 2017 (see Figure).

• There was no significant change from January-June 2019 (3.4%) to July-December 2019 (3.3%, p = 0.44).

This is consistent with the absence of a trend since 2017.

Figure. Percentage of adults who had an asthma episode or attack in the past 12 months, by six-month interval, 2015-2019 (vertical axis: percent).

Final estimates, 2015-2018: Jan-June 2015, 4.0; July-Dec 2015, 3.5; Jan-June 2016, 4.1; July-Dec 2016, 3.9; Jan-June 2017, 3.7; July-Dec 2017, 3.4; Jan-June 2018, 3.5; July-Dec 2018, 3.6.

Early Release estimates, 2019: Jan-June 2019, 3.4; July-Dec 2019, 3.3.

Predicted values in the absence of methodological changes: Jan-June 2019, 3.6; July-Dec 2019, 3.5.

SOURCE: NCHS, National Health Interview Survey.

Regularly Experienced Chronic Pain

Questionnaire changes between 2018 and 2019: While the question text for this indicator was unchanged from

2018 to 2019, the context surrounding this question changed. Sample adult respondents were asked, “In the

past three months, how often did you have pain? Would you say never, some days, most days, or every day?” In

2018, this question appeared in the section entitled Adult Functioning and Disability (AFD) and was at the end of

the sample adult interview. In 2019, this question was the first in a series of questions on chronic pain, and the

chronic pain section was a little more than halfway through the sample adult interview.

This indicator is new to the Early Release Program and reflects sample adults who experienced pain either ‘every

day’ or ‘most days’.

| (A) 2018 Full-year Production | (B) 2018 Quarter 4 Production | (C) 2018 Quarter 4 Bridge | (D) 2019 Full-year Early Release, Old weight | (E) 2019 Full-year Early Release, New weight | (F) Within 2018 Q4 Difference (C - B) | (G) Weighting Difference (E - D) | Combined Impact (F + G) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 20.7 (20.2 - 21.3) | 21.6 (20.3 - 22.9) | 19.7 (18.6 - 20.8) | 20.0 (19.5 - 20.5) | 20.6 (20.1 - 21.2) | -1.9‡ | 0.6 | -1.3 |

Preliminary analysis:

• In 2018, the percentage of adults who regularly experienced chronic pain was 20.7%. The 2019 full-year

estimate with the new weight was 20.6% (see Table). This difference was not statistically significant (p =

0.83).

• During the fourth quarter of 2018, the production estimate (21.6%) was 1.9 percentage points higher

than the bridge estimate (19.7%). This difference was statistically significant (p = 0.07), indicating that a

questionnaire design effect is likely.

• The 2019 full-year estimate with the old weight (20.0%) was lower than, but not significantly different

from, the 2019 full-year estimate with the new weight (20.6%). This result (p = 0.18) suggests that the

weighting adjustment was unlikely to affect estimates for this indicator.

• Considered together, estimates for 2019 may have shifted downward by 1.3 percentage points due to

the methodological change. This shift may have masked an actual increase from 2018 to 2019.

• The prevalence of chronic pain increased from July-December 2016 (17.9%) through July-December

2018 (21.1%; see Figure). Given the impact of the change in methodology, it is not possible to determine

whether the increase that began in July-December 2016 continued into 2019.

• There was no change from January-June 2019 (20.6%) to July-December 2019 (20.6%, p = 0.99),

indicating that if there was an increasing trend starting in July-December 2016, it did not continue

through 2019.

Figure. Percentage of adults who regularly experienced chronic pain, by six-month interval, 2015-2019 (vertical axis: percent).

Final estimates, 2015-2018: Jan-June 2015, 19.5; July-Dec 2015, 19.6; Jan-June 2016, 19.1; July-Dec 2016, 17.9; Jan-June 2017, 18.4; July-Dec 2017, 18.2; Jan-June 2018, 20.4; July-Dec 2018, 21.1.

Early Release estimates, 2019: Jan-June 2019, 20.6; July-Dec 2019, 20.6.

Predicted values in the absence of methodological changes: Jan-June 2019, 21.9; July-Dec 2019, 21.9.

SOURCE: NCHS, National Health Interview Survey.

Regularly Had Feelings of Worry, Nervousness, or Anxiety

Questionnaire changes between 2018 and 2019: This indicator is based on responses to two questions: a) “How

often do you feel worried, nervous, or anxious? Would you say daily, weekly, monthly, a few times a year, or

never?”; and b) “Thinking about the last time you felt worried, nervous, or anxious, how would you describe the

level of these feelings? Would you say a little, a lot, or somewhere in between?” While the text of the questions

for this indicator was unchanged from 2018 to 2019, the context surrounding these questions changed. In 2018,

these questions were included in the AFD section, a section at the end of the sample adult interview. In 2019,

these questions were included at the beginning of a series of questions on mental health and towards the

middle of the sample adult interview.

This indicator is new to the Early Release Program and reflects sample adults who had feelings of worry,

nervousness, or anxiety a) daily and report the level as ‘a lot’ or ‘somewhere in between’, or b) weekly and

report the level as ‘a lot’.
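The combination of frequency and level that defines this indicator can be written as a short rule. The sketch below is a hedged illustration: the function name and the lowercase response strings are hypothetical codings of the response options quoted above, and any response not listed (including refused or unknown) is treated as not meeting the definition.

```python
def regularly_has_feelings(frequency: str, level: str) -> bool:
    """Return True when the frequency and level responses meet the indicator definition."""
    if frequency == "daily":
        # a) daily, with the level described as 'a lot' or 'somewhere in between'
        return level in {"a lot", "somewhere in between"}
    if frequency == "weekly":
        # b) weekly, with the level described as 'a lot'
        return level == "a lot"
    # monthly, a few times a year, never, or anything else does not qualify
    return False


print(regularly_has_feelings("daily", "somewhere in between"))
print(regularly_has_feelings("weekly", "somewhere in between"))
```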

| (A) 2018 Full-year Production | (B) 2018 Quarter 4 Production | (C) 2018 Quarter 4 Bridge | (D) 2019 Full-year Early Release, Old weight | (E) 2019 Full-year Early Release, New weight | (F) Within 2018 Q4 Difference (C - B) | (G) Weighting Difference (E - D) | Combined Impact (F + G) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 8.6 (8.2 - 9.0) | 9.0 (8.0 - 10.0) | 11.1 (10.2 - 12.0) | 10.9 (10.6 - 11.3) | 11.2 (10.8 - 11.5) | 2.1* | 0.3 | 2.4 |

Preliminary analysis:

• In 2018, the percentage of adults who regularly had feelings of worry, nervousness, or anxiety was 8.6%.

The 2019 full-year estimate with the new weight was 11.2% (see Table). This observed increase was

statistically significant (p < 0.001).

• During the fourth quarter of 2018, the production estimate (9.0%) was 2.1 percentage points lower than

the bridge estimate (11.1%). This difference was statistically significant (p = 0.01), indicating that a

questionnaire design effect is likely.

• The 2019 full-year estimates using the old and new weights were similar (10.9% and 11.2%,

respectively). This result (p = 0.36) suggests that the weighting adjustment did not substantially affect

estimates for this indicator.

• Considered together, estimates for 2019 may have shifted upward by 2.4 percentage points due to the

methodological change. This shift may account for the observed increase from 8.6% in 2018 to 11.2% in

2019.

• The percentage of adults who regularly had feelings of worry, nervousness, or anxiety increased from

2016 through 2017 (see Figure). There was no clear trend from July-December 2017 to July-December

2018. If the observed increase in 2019 does not indicate a real increase but reflects the methodological

effects described above, there was also no clear trend into 2019.

• There was no significant change from January-June 2019 (11.0%) to July-December 2019 (11.3%, p =

0.60). This is consistent with the absence of a trend since 2017.

Figure. Percentage of adults who regularly had feelings of worry, nervousness, or anxiety, by six-month interval, 2015-2019 (vertical axis: percent).

Final estimates, 2015-2018: Jan-June 2015, 7.1; July-Dec 2015, 7.8; Jan-June 2016, 7.1; July-Dec 2016, 7.6; Jan-June 2017, 7.9; July-Dec 2017, 8.9; Jan-June 2018, 8.5; July-Dec 2018, 8.7.

Early Release estimates, 2019: Jan-June 2019, 11.0; July-Dec 2019, 11.3.

Predicted values in the absence of methodological changes: Jan-June 2019, 8.6; July-Dec 2019, 8.9.

SOURCE: NCHS, National Health Interview Survey.

Regularly Had Feelings of Depression

Questionnaire changes between 2018 and 2019: This indicator is based on responses to two questions: a) “How

often do you feel depressed? Would you say daily, weekly, monthly, a few times a year, or never?”; and b)

“Thinking about the last time you felt depressed, how depressed did you feel? Would you say a little, a lot, or

somewhere in between?” While the text of the questions for this indicator was unchanged from 2018 to 2019,

the context surrounding these questions changed. In 2018, these questions were included in the AFD section, a

section at the end of the sample adult interview. In 2019, these questions were included early in a series of

questions on mental health and towards the middle of the sample adult interview. One matter of context did

not change: These questions have always followed the questions on feeling worried, nervous, or anxious.

This indicator is new to the Early Release Program and reflects sample adults who had feelings of depression a)

daily and report the level as ‘a lot’ or ‘somewhere in between’, or b) weekly and report the level as ‘a lot’.

| (A) 2018 Full-year Production | (B) 2018 Quarter 4 Production | (C) 2018 Quarter 4 Bridge | (D) 2019 Full-year Early Release, Old weight | (E) 2019 Full-year Early Release, New weight | (F) Within 2018 Q4 Difference (C - B) | (G) Weighting Difference (E - D) | Combined Impact (F + G) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 4.4 (4.2 - 4.7) | 4.7 (4.1 - 5.4) | 4.2 (3.7 - 4.8) | 4.6 (4.4 - 4.8) | 4.7 (4.5 - 5.0) | -0.5 | 0.1 | -0.4 |

Preliminary analysis:

• In 2018, the percentage of adults who regularly had feelings of depression was 4.4%. The 2019 full-year

estimate with the new weight was 4.7% (see Table). This difference was not statistically significant (p =

0.17).

• During the fourth quarter of 2018, the production estimate (4.7%) was higher than, but not significantly

different from, the bridge estimate (4.2%). This result (p = 0.33) suggests that a questionnaire design

effect is unlikely.

• The 2019 full-year estimates using the old and new weights were similar (4.6% and 4.7%, respectively).

This result (p = 0.63) suggests that the weighting adjustment did not substantially affect estimates for

this indicator.

• Considered together, estimates for 2019 may have shifted downward by 0.4 percentage points due to

the methodological change. Though this shift was not statistically significant, it may have partially

masked an actual increase from 2018 to 2019.

• The percentage of adults who regularly had feelings of depression increased from 2016 through 2018

(see Figure). The increasing trend may have continued into 2019.

• However, there was no change from January-June 2019 (4.7%) to July-December 2019 (4.7%, p = 0.89),

indicating that if there was an increasing trend starting in 2016, it did not continue throughout calendar

year 2019.

Figure. Percentage of adults who regularly had feelings of depression, by six-month interval, 2015-2019 (vertical axis: percent).

Final estimates, 2015-2018: Jan-June 2015, 3.8; July-Dec 2015, 3.8; Jan-June 2016, 3.4; July-Dec 2016, 3.8; Jan-June 2017, 3.9; July-Dec 2017, 4.0; Jan-June 2018, 4.4; July-Dec 2018, 4.4.

Early Release estimates, 2019: Jan-June 2019, 4.7; July-Dec 2019, 4.7.

Predicted values in the absence of methodological changes: Jan-June 2019, 5.1; July-Dec 2019, 5.1.

SOURCE: NCHS, National Health Interview Survey.

Disability Status

Questionnaire changes between 2018 and 2019: While the questions regarding disability did not change

between 2018 and 2019, their location in the interview changed. Similar to the questions on chronic pain,

feelings of worry, nervousness, or anxiety, and feelings of depression, the questions that form the disability

composite variable were included in the AFD section and at the end of the sample adult interview in 2018.

Starting in 2019, these questions moved into the first half of the sample adult interview.

This indicator is new to the Early Release Program. It is defined by the reported level of difficulty (no difficulty,

some difficulty, a lot of difficulty, or cannot do at all) in six functioning domains: seeing (even if wearing glasses),

hearing (even if wearing hearing aids), mobility (walking and climbing stairs), communication (understanding or

being understood by others), cognition (remembering or concentrating), and self-care (such as washing all over

or dressing). Sample adults who responded “a lot of difficulty” or “cannot do at all” to at least one question

were considered to have a disability.
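The disability composite described above reduces to checking whether any of the six domains has a response at one of the two most severe levels. The following is a minimal sketch under stated assumptions: the domain keys, the response strings, and the function name are hypothetical codings, not NHIS variable names, and a domain with no recorded response is treated as not indicating disability.

```python
from typing import Mapping

# Six functioning domains named in the definition (keys are illustrative).
DOMAINS = ("seeing", "hearing", "mobility", "communication", "cognition", "self-care")

# Response levels that count toward the disability indicator.
DISABLING_LEVELS = {"a lot of difficulty", "cannot do at all"}


def has_disability(responses: Mapping[str, str]) -> bool:
    """Return True if at least one domain is reported at a disabling level."""
    return any(responses.get(domain) in DISABLING_LEVELS for domain in DOMAINS)


example = {
    "seeing": "no difficulty",
    "hearing": "some difficulty",
    "mobility": "a lot of difficulty",
    "communication": "no difficulty",
    "cognition": "no difficulty",
    "self-care": "no difficulty",
}
print(has_disability(example))
```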

| (A) 2018 Full-year Production | (B) 2018 Quarter 4 Production | (C) 2018 Quarter 4 Bridge | (D) 2019 Full-year Early Release, Old weight | (E) 2019 Full-year Early Release, New weight | (F) Within 2018 Q4 Difference (C - B) | (G) Weighting Difference (E - D) | Combined Impact (F + G) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 10.2 (9.8 - 10.6) | 9.9 (9.0 - 10.8) | 8.9 (8.1 - 9.7) | 8.6 (8.3 - 9.0) | 9.2 (8.8 - 9.6) | -1.0 | 0.6‡ | -0.4 |

Preliminary analysis:

• In 2018, the percentage of adults with disability was 10.2%. The 2019 full-year estimate with the new

weight was 9.2% (see Table). This observed decrease was statistically significant (p = 0.003).

• During the fourth quarter of 2018, the production estimate (9.9%) was 1.0 percentage points higher

than the bridge estimate (8.9%). This difference suggests a possible questionnaire design effect, but it

did not reach statistical significance (p = 0.16).

• The 2019 full-year estimate using the old weight (8.6%) was 0.6 percentage points lower than the 2019

full-year estimate using the new weight (9.2%). This difference was statistically significant (p = 0.05),

indicating that estimates for this indicator were likely affected by the change in the weighting approach.

• Considered together, estimates for 2019 may have shifted downward by 0.4 percentage points due to

the methodological change. This small shift due to methodological changes may account for some of the

observed decrease from 10.2% in 2018 to 9.2% in 2019.

• The percentage of adults with disability increased from July-December 2016 (8.2%) through July-

December 2018 (10.1%) (see Figure). There is no indication that this trend has continued through 2019

and there might have been a decrease between 2018 and 2019.

• There was no change from January-June 2019 (9.2%) to July-December 2019 (9.2%, p = 0.82). This could

indicate that any decrease in 2019 did not continue through the year.

Figure. Percentage of adults with disability, by six-month interval, 2015-2019 (vertical axis: percent).

Final estimates, 2015-2018: Jan-June 2015, 9.5; July-Dec 2015, 9.5; Jan-June 2016, 9.0; July-Dec 2016, 8.2; Jan-June 2017, 8.5; July-Dec 2017, 8.8; Jan-June 2018, 10.3; July-Dec 2018, 10.1.

Early Release estimates, 2019: Jan-June 2019, 9.2; July-Dec 2019, 9.2.

Predicted values in the absence of methodological changes: Jan-June 2019, 9.6; July-Dec 2019, 9.6.

SOURCE: NCHS, National Health Interview Survey.

Current Cigarette Smoking

Questionnaire changes between 2018 and 2019: The two questions used for this indicator were unchanged

from 2018 to 2019. Sample adult respondents were asked, “Have you smoked at least 100 cigarettes in your

entire life?” If yes, they were asked “Do you now smoke cigarettes every day, some days, or not at all?”

This indicator is one of eight from the original Early Release Program and reflects sample adults who smoke

cigarettes ‘every day’ or ‘some days’.

| (A) 2018 Full-year Production | (B) 2018 Quarter 4 Production | (C) 2018 Quarter 4 Bridge | (D) 2019 Full-year Early Release, Old weight | (E) 2019 Full-year Early Release, New weight | (F) Within 2018 Q4 Difference (C - B) | (G) Weighting Difference (E - D) | Combined Impact (F + G) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 13.7 (13.3 - 14.2) | 13.3 (12.3 - 14.4) | 12.8 (11.8 - 13.8) | 13.2 (12.8 - 13.7) | 14.2 (13.8 - 14.7) | -0.5 | 1.0** | 0.5 |

Preliminary analysis:

• In 2018, the percentage of adults who were current cigarette smokers was 13.7%. The 2019 full-year

estimate with the new weight was 14.2% (see Table). This observed increase was not statistically

significant (p = 0.19).

• During the fourth quarter of 2018, the production estimate (13.3%) was higher than, but not

significantly different from, the bridge estimate (12.8%). This result (p = 0.57) suggests that a

questionnaire design effect is unlikely.

• The 2019 full-year estimate using the old weight (13.2%) was 1.0 percentage points lower than the 2019

full-year estimate using the new weight (14.2%). This difference was statistically significant (p = 0.005),

indicating that estimates for this indicator were likely affected by the change in the weighting approach.

• Considered together, estimates for 2019 may have shifted upward by 0.5 percentage points. This small

shift due to methodological changes may account for the observed increase from 13.7% in 2018 to

14.2% in 2019.

• The prevalence of current cigarette smoking declined from 2016 through 2017. There has been no clear

trend since then (see Figure). After taking the methodological changes into account, it appears that

there remains no change since July-December 2017 in the prevalence of cigarette smoking.

• There was no significant change from January-June 2019 (14.4%) to July-December 2019 (14.1%, p =

0.65). This is consistent with the absence of a trend since July-December 2017.

Figure. Percentage of adults who were current cigarette smokers, by six-month interval, 2015-2019 (vertical axis: percent).

Final estimates, 2015-2018: Jan-June 2015, 14.9; July-Dec 2015, 15.3; Jan-June 2016, 15.8; July-Dec 2016, 15.1; Jan-June 2017, 14.4; July-Dec 2017, 13.5; Jan-June 2018, 13.7; July-Dec 2018, 13.7.

Early Release estimates, 2019: Jan-June 2019, 14.4; July-Dec 2019, 14.1.

Predicted values in the absence of methodological changes: Jan-June 2019, 13.9; July-Dec 2019, 13.6.

SOURCE: NCHS, National Health Interview Survey.

Current Electronic Cigarette Use

Questionnaire changes between 2018 and 2019: The two questions used for this indicator changed slightly from

2018 to 2019 (see underlined text for additions). In 2019, sample adult respondents were asked “Have you ever

used an e-cigarette or other electronic vaping product, even just one time, in your entire life?” If yes, they were

asked “Do you now use e-cigarettes or other electronic vaping products every day, some days, or not at all?” In

2018, the question was preceded with a lengthy definition of the term ‘e-cigarettes.’ In 2019, that introduction

was not present.

This indicator is new to the Early Release Program and reflects sample adults who said that they use electronic

cigarettes ‘some days’ or ‘every day’.

| (A) 2018 Full-year Production | (B) 2018 Quarter 4 Production | (C) 2018 Quarter 4 Bridge | (D) 2019 Full-year Early Release, Old weight | (E) 2019 Full-year Early Release, New weight | (F) Within 2018 Q4 Difference (C - B) | (G) Weighting Difference (E - D) | Combined Impact (F + G) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 3.2 (3.0 - 3.5) | 3.3 (2.7 - 3.9) | 4.3 (3.7 - 5.0) | 4.3 (4.0 - 4.6) | 4.4 (4.1 - 4.7) | 1.0‡ | 0.1 | 1.1 |

Preliminary analysis:

• In 2018, the percentage of adults who were current electronic cigarette users was 3.2%. The 2019 full-

year estimate with the new weight was 4.4% (see Table). This observed increase was statistically

significant (p < 0.001).

• During the fourth quarter of 2018, the production estimate (3.3%) was 1.0 percentage points lower than

the bridge estimate (4.3%). This difference was statistically significant (p = 0.06), indicating that a

questionnaire design effect is likely.

• The 2019 full-year estimates using the old and new weights were similar (4.3% and 4.4%, respectively).

This result (p = 0.68) suggests that the weighting adjustment did not substantially affect estimates for

this indicator.

• Considered together, estimates for 2019 may have shifted upward by 1.1 percentage points due to the

methodological change. This shift due to methodological changes may account for the observed

increase from 3.2% in 2018 to 4.4% in 2019.

• The prevalence of electronic cigarette use increased from 2017 through 2018 (see Figure). Given the

impact of the change in the methodology, it is not possible to determine if there was any change

between 2018 and 2019.

• There was no change from January-June 2019 (4.4%) to July-December 2019 (4.4%, p = 0.99). This is

consistent with the conclusion that the increasing trend from 2017 to 2018 did not continue through

2019.

Figure. Percentage of adults who were current electronic cigarette users, by six-month interval, 2015-2019 (vertical axis: percent).

Final estimates, 2015-2018: Jan-June 2015, 3.2; July-Dec 2015, 3.7; Jan-June 2016, 3.5; July-Dec 2016, 2.9; Jan-June 2017, 2.8; July-Dec 2017, 2.8; Jan-June 2018, 2.9; July-Dec 2018, 3.6.

Early Release estimates, 2019: Jan-June 2019, 4.4; July-Dec 2019, 4.4.

Predicted values in the absence of methodological changes: Jan-June 2019, 3.3; July-Dec 2019, 3.3.

SOURCE: NCHS, National Health Interview Survey.

Did Not Get Needed Medical Care Due to Cost

Questionnaire changes between 2018 and 2019: Prior to 2019, this question was asked of the family

respondent during the family core interview. As part of the questionnaire redesign, this question is now directly

asked of the sample adult, but the question itself did not change. The sample adult is asked “During the past 12

months, was there any time when you needed medical care but did not get it because of the cost?”

This indicator is one of eight from the original Early Release Program and reflects sample adults who needed

medical care in the past 12 months but did not get it because of cost.

Column   Description                                  Estimate
(A)      2018 Full-year Production                    6.4 (6.1 - 6.7)
(B)      2018 Quarter 4 Production                    6.3 (5.6 - 7.0)
(C)      2018 Quarter 4 Bridge                        8.2 (7.2 - 9.3)
(D)      2019 Full-year Early Release, Old weight     7.8 (7.5 - 8.2)
(E)      2019 Full-year Early Release, New weight     8.3 (8.0 - 8.7)
(F)      Within 2018 Q4 Difference (C - B)            1.9*
(G)      Weighting Difference (E - D)                 0.5‡
         Combined Impact (F + G)                      2.4

Preliminary analysis:

• In 2018, the percentage of adults who did not get needed medical care due to cost in the past 12

months was 6.4%. The 2019 full-year estimate with the new weight was 8.3% (see Table). This observed

increase was statistically significant (p < 0.001).

• During the fourth quarter of 2018, the production estimate (6.3%) was 1.9 percentage points lower than

the bridge estimate (8.2%). This difference was statistically significant (p = 0.01), indicating that a

questionnaire design effect is likely.

• The 2019 full-year estimate using the old weight (7.8%) was 0.5 percentage points lower than the 2019

full-year estimate using the new weight (8.3%). This difference was statistically significant (p = 0.08);

estimates for this indicator were likely affected by the change in the weighting approach.

• Considered together, estimates for 2019 may have shifted upward by 2.4 percentage points due to the

methodological change. This shift due to methodological changes may account for the observed

increase from 6.4% in 2018 to 8.3% in 2019.

• There is no clear trend from 2015 to 2018 in the percentage of adults who did not get needed medical

care due to cost (see Figure). Given the impact of the change in methodology, it is not possible to

determine if there was an actual increase between 2018 and 2019.

• The increase from January-June 2019 (7.6%) to July-December 2019 (9.0%) was statistically significant (p

= 0.001). It is possible that 2019 is the first year of an increasing trend.
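The arithmetic behind the bullets above can be retraced with a minimal sketch. It takes the point estimates from table columns A through E and recomputes the questionnaire difference (C - B), the weighting difference (E - D), their sum, and the observed year-over-year change. The dictionary keys follow the table's column letters; the function name is hypothetical, and the sketch does not attempt to reproduce the significance tests.

```python
# Point estimates (percent) for "did not get needed medical care due to cost".
estimates = {
    "A": 6.4,  # 2018 full-year production
    "B": 6.3,  # 2018 quarter 4 production
    "C": 8.2,  # 2018 quarter 4 bridge
    "D": 7.8,  # 2019 full-year Early Release, old weight
    "E": 8.3,  # 2019 full-year Early Release, new weight
}


def decompose(est):
    """Return (questionnaire, weighting, combined, observed) in percentage points."""
    questionnaire = round(est["C"] - est["B"], 1)   # column F: within 2018 Q4 difference
    weighting = round(est["E"] - est["D"], 1)       # column G: weighting difference
    combined = round(questionnaire + weighting, 1)  # combined impact, F + G
    observed = round(est["E"] - est["A"], 1)        # observed change, 2018 to 2019
    return questionnaire, weighting, combined, observed


f, g, combined, observed = decompose(estimates)
print(f"Questionnaire difference (C - B): {f:+.1f}")
print(f"Weighting difference (E - D):     {g:+.1f}")
print(f"Combined impact (F + G):          {combined:+.1f}")
print(f"Observed change (E - A):          {observed:+.1f}")
```

Because the combined impact (2.4 points) is larger than the observed change (1.9 points), the methodological changes alone could explain the increase, which is why the analysis stops short of concluding that a real increase occurred between 2018 and 2019.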


Figure. Percentage of adults who did not get needed medical care in the past 12 months due to cost (vertical axis: Percent, 0 to 12)

Final estimates, 2015-2018: Jan-June 2015, 5.9; July-Dec 2015, 6.2; Jan-June 2016, 6.0; July-Dec 2016, 6.1; Jan-June 2017, 5.8; July-Dec 2017, 5.9; Jan-June 2018, 6.3; July-Dec 2018, 6.5.

Early Release estimates, 2019: Jan-June 2019, 7.6; July-Dec 2019, 9.0.

Predicted values in the absence of methodological changes: Jan-June 2019, 5.2; July-Dec 2019, 6.6.

SOURCE: NCHS, National Health Interview Survey.


Did Not Take Medication as Prescribed to Save Money

Questionnaire changes between 2018 and 2019: The initial question in the series of questions for this indicator

was modified slightly during the questionnaire redesign. Prior to 2019, sample adult respondents were asked

“During the past 12 months, were you prescribed medication by a doctor or other health professional?” As part

of the questionnaire redesign, sample adults were asked “At any time in the past 12 months, did you take

prescription medication?” Sample adults who answered yes were then asked the following series of yes-or-no

questions: “During the past 12 months, were any of the following true for you? 1) You skipped medication doses

to save money; 2) You took less medication to save money; 3) You delayed filling a prescription to save money.”

This indicator is new to the Early Release Program and reflects sample adults who gave an affirmative response

to at least one of these latter three questions.

Column   Description                                  Estimate
(A)      2018 Full-year Production                    9.2 (8.8 - 9.7)
(B)      2018 Quarter 4 Production                    9.2 (8.1 - 10.3)
(C)      2018 Quarter 4 Bridge                        9.1 (8.2 - 10.2)
(D)      2019 Full-year Early Release, Old weight     9.2 (8.7 - 9.6)
(E)      2019 Full-year Early Release, New weight     9.7 (9.2 - 10.1)
(F)      Within 2018 Q4 Difference (C - B)            -0.1
(G)      Weighting Difference (E - D)                 0.5
         Combined Impact (F + G)                      0.4

Preliminary analysis:

• In 2018, the percentage of adults who did not take medication as prescribed to save money in the past

12 months was 9.2%. The 2019 full-year estimate with the new weight was 9.7% (see Table). This

observed increase was not statistically significant (p = 0.23).

• During the fourth quarter of 2018, the production and bridge estimates for this indicator were similar

(9.2% and 9.1%, respectively). This result (p = 0.91) suggests that a questionnaire design effect is

unlikely.

• The 2019 full-year estimate with the old weight (9.2%) was lower than, but not significantly different

from, the 2019 full-year estimate with the new weight (9.7%). This result (p = 0.24) suggests that the

weighting adjustment was unlikely to affect estimates for this indicator.

• Considered together, estimates for 2019 may have shifted upward by 0.4 percentage points due to the

methodological change. The shift due to methodological changes may account for the observed increase

from 9.2% in 2018 to 9.7% in 2019.

• There has been no clear trend in this indicator since 2015 (see Figure).

• There was no significant change from January-June 2019 (9.5%) to July-December 2019 (9.8%, p = 0.55).

This is consistent with the absence of a trend since 2015.
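The comparisons in the bullets above each pair a difference in estimates with a p-value. The sketch below lines them up side by side. The significance threshold of 0.10 is an assumption: it is consistent with how p-values are described in this section (p = 0.06 and p = 0.08 are called significant, while p = 0.23 and p = 0.24 are not), but the threshold itself is not stated here. The class and field names are hypothetical.

```python
from dataclasses import dataclass

# Assumed significance threshold; not stated in this section of the report.
ALPHA = 0.10


@dataclass
class Comparison:
    label: str
    first: float    # earlier or baseline estimate (percent)
    second: float   # later or alternative estimate (percent)
    p_value: float  # p-value reported in the text

    @property
    def difference(self) -> float:
        """Difference in percentage points, second minus first."""
        return round(self.second - self.first, 1)

    @property
    def significant(self) -> bool:
        return self.p_value < ALPHA


# Estimates and p-values for "did not take medication as prescribed to save money".
comparisons = [
    Comparison("2018 full-year vs. 2019 full-year (new weight)", 9.2, 9.7, 0.23),
    Comparison("Q4 2018 production vs. bridge", 9.2, 9.1, 0.91),
    Comparison("2019 old weight vs. new weight", 9.2, 9.7, 0.24),
    Comparison("Jan-June 2019 vs. July-Dec 2019", 9.5, 9.8, 0.55),
]

for c in comparisons:
    flag = "significant" if c.significant else "not significant"
    print(f"{c.label}: {c.difference:+.1f} points, p = {c.p_value:.2f} ({flag})")
```

None of the four comparisons reaches the assumed threshold, which matches the section's reading that neither the questionnaire redesign nor the weighting adjustment clearly affected this indicator.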
