A Guide for

Large Language Model

Make-or-Buy Strategies:

Business and Technical

Insights

August 2023

appliedAI

2

Contents

Contents 2

Executive Summary 4

1. Introduction 6

2. To Make or To Buy: Leveraging Large Language Models in Business 8

2.1. Getting Prepared for Large Language Model Make-or-Buy Decisions 8

2.1.1. Understanding the Large Language Model Tech Stack 8

2.1.2.Understanding Key Factors in Large Language Model Make-or-Buy Decisions 9

2.1.3. Understanding (Dis-)advantages of Open- vs. Closed-source Large Language

Models 12

2.1.4. Understanding (Dis-)advantages of Fine-tuning vs. Pre-training Models from

Scratch 13

2.2. Approaches for Large Language Model Make-or-Buy Decisions 15

3. Critical Techniques and Trends in the Field of Large Language

Models: From Landscape to Domain-specic Applications 18

LLM Strategy Guide

3

3.1. Navigating the Landscape of Large Language Models in the Generative AI Era 18

3.1.1. Key Techniques, Architectures, and Types of Data 18

3.1.2, Major Closed-source Models and Open-source Alternatives 26

3.1.3. Flourishing Large Language Model Applications, Extensions, and Relevant

Frameworks 31

3.2. Domain-Specic Application of Large Language Models in Industrial Scenarios 33

3.2.1. Fine-tuning and Adaptation from a Technical Perspective:

To What Extent Are They Needed and How Could They Help? 33

3.2.2. Towards Domain-specic Dynamic Benchmarking Approaches 35

References 38

Authors 44

Contributors 45

About appliedAI Initiative GmbH 46

Acknowledgement 47

appliedAI

4

Executive Summary

Key Business Highlights:

Rational Approaches to Large Language Model Make-or-Buy

Decisions

F

irms that employ large language models

(LLMs) can create signicant value

and achieve sustainable competitive

advantage. However, the decision of whether

to make-or-buy LLMs is a complex one and

should be informed by consideration of

strategic value, customization, intellectual

property, security, costs, talent, legal

expertise, data, and trustworthiness. It is also

necessary to thoroughly evaluate available

open-source and closed-source LLM options,

and to understand the advantages and

disadvantages of ne-tuning existing models

versus pre-training models from scratch.

D

epending on the strategic value and

the degree of customization needed,

rms have six possible approaches to

consider when making LLM make-or-buy

decisions:

1) Buy end-to-end application without LLM

controllability

2) Buy an application with limitedly

controllable LLM – Procure the application

including LLM as a component with some

transparency and control

3) Make application, buy controllable LLM –

Internal development of application on

top of procured LLMs controllable via APIs

4) Make application, ne-tune LLM – Internal

development of application and ne-

tuning of LLM based on procured or open-

source pre-trained LLMs

5) Make application, pre-train LLM – Internal

development of application and pre-

training of LLM from scratch

6) Stop

LLM Strategy Guide

5

Key Technical Highlights:

Future-shaping Trends for Informed Make-or-Buy Decisions

B

eyond fundamental LLM techniques

such as the transformer model

architecture, pre-training, and

instruction tuning, there are important

emerging trends that will further enhance

LLM performance and adaptability in

widespread domain-specic tasks. These

include the development of more efcient

model architectures and dataset designs,

integration of memory mechanisms inspired

by cognitive science, incorporation of

multimodality, enhancements in factuality,

and improved reasoning capabilities for

autonomous task completion.

N

ew possibilities to strike balances

between open- and closed-source

models, and between large and

small language models, present promising

opportunities. A growing open-source

ecosystem is helping organizations to

optimize costs and achieve the best

outcomes by leveraging the strengths

of each type of model. Likewise, smaller

language models have demonstrated

efcacy in specic tasks, challenging the

notion that bigger models are always

superior. Embracing this diverse range of

models can promote more efcient and

effective language model implementation.

G

aining a comprehensive

understanding of these trends

is vital for rms wanting to make

well-informed decisions and avoid

misconceptions about LLMs when planning

long-term budgets and infrastructure design.

appliedAI

6

1. Introduction

At the start of this decade, the concept

of generative AI was known only to a few

enthusiasts and visionaries. Yet in just a few

years, it has become increasingly evident that

generative AI, and particularly techniques

related to Large Language Models (LLMs),

are to be a game-changer for individuals,

businesses, and wider society.

Generative AI and the latest class of

generative AI systems, driven by LLMs such

as GPT-4, PaLM-2, and Llama 2, are capable

of creating original content by learning from

vast datasets. These ‘foundation models’

generalize knowledge from massive amounts

of data and can be customized for a wide

range of use cases. Some use cases require

minimal ne-tuning and a lower volume of

data, while others can be solved by providing

just a task instruction with no examples

(termed zero-shot learning) or a small

number of examples (few-shot learning).

These opportunities are empowering

developers to build AI applications that were

previously impossible and which have the

potential to transform industries.

The signicance of generative AI and

LLMs cannot be overstated. By enabling

the automation of many tasks that could

previously only be performed by humans,

generative AI will signicantly increase

efciency and productivity across entire

value chains and corporate functions,

reducing costs and opening up new and

exciting opportunities for growth. A study

by McKinsey, for example, estimates that

generative AI could add between $2.6

trillion and $4.4 trillion of value to the global

economy annually and automate work

activities that currently account for 60-70% of

employees’ time

1

. Firms that do not embrace

AI are at risk of falling behind.

1 McKinsey and Company (2023). The economic potential of generative AI: The next productivity frontier. https://

www.mckinsey.com/capabilities/mckinsey-digital/our-insights/The-economic-potential-of-generative-AI-The-

next-productivity-frontier#business-and-society

With the disruptive and extremely fast-paced

acceleration of AI advancement, executives

are confronted with some pressing

questions: What value do generative AI, and

in particular LLMs, have for my business?

How can I utilize the benets of LLMs? What

are the risks of embedding LLMs into my

organization? And what are LLMs, anyway?

Indeed, it is becoming vital to understand

how to effectively leverage this technology

in products, services, corporate functions

and processes, and how to apply LLMs to use

cases where signicant added value can be

achieved.

This white paper seeks to guide readers

on how to navigate this new era of LLMs,

enabling rms to make rational, informed

decisions and achieve sustainable

competitive advantage. It is essential to

understand both the business and technical

aspects of incorporating LLMs into your

organization. As such, we here address both

aspects by rst discussing make-or-buy

decisions around the application of LLMs

from a business perspective, followed by an

overview of critical technical topics, including

the latest trends in the eld and domain-

specic industrial applications of LLMs.

Whatever your company's stage of AI

maturity, now is the time to leverage LLMs

and drive innovation further.

LLM Strategy Guide

7

Glossary

Generative AI

A eld of articial intelligence that

focuses on creating models capable of

generating novel content, such as text,

code, images, or music, that resembles

human-created content.

Foundation Model

A large neural network model that

captures and generalizes knowledge

from massive data. A starting point

for further customization and a

fundamental building block for specic

downstream tasks.

Large Language Model (LLM)

A powerful neural network algorithm

designed to understand and generate

human-like language, typically trained

on a vast amount of text data and

considered a type of foundation model.

[See later Info Box ‘Large language

models as foundation models’].

Transformers

A type of neural network architecture

that has revolutionized natural language

processing tasks by efciently capturing

long-range dependencies in sequential

data such as sentences or paragraphs,

making it a suitable building block for

large language models.

Pre-training

The initial phase of training a neural

network model. The model learns from

a large dataset, allowing it to capture

general knowledge and patterns.

Fine-tuning

The process of adapting a pre-trained

neural network model to perform

specic tasks by training it on task-

specic data. This allows the model to

specialize its knowledge and improve its

performance on specic applications.

Few-shot learning

A technique whereby an AI model learns

to perform a new task with a small

number of examples, making it possible

to teach the model something new

without needing much training data.

Zero-shot learning

A technique whereby an AI model can

understand and perform a task with

no specic examples or training on

that task, relying instead on general

knowledge it has learned from related

tasks.

Q

How do you view the impact of the recent trend of generative

AI?

A

“Strategically, this has changed the way we work and what our

focus areas are. The output quality and ease of use will shape

both our professional and our private lives.”

- Dr. Andreas Liebl, Managing Director and Founder, appliedAI Initiative GmbH

appliedAI

8

2. To Make or To Buy:

Leveraging

Large Language Models

in Business

Effectively utilizing LLMs in business requires

consideration of several factors that will

affect decisions to either leverage external

closed-source models via APIs, develop

LLMs in-house, or take some form of

intermediary approach. There is no clear-

cut answer to how to make these decisions

but a systematic approach requires taking

into account LLMs and their applications

and informing make-or-buy decisions

by expanding from a sole application

perspective to one that encompasses LLMs.

To achieve this, the rst step is to assess

which capabilities and internal resources

are available and, in turn, which tech stack

should be addressed. The LLM tech stack is

generally understood to consist of four layers

as presented in Figure 1.

The bottom layer is the infrastructure

required (such as necessary hardware or

cloud platforms). This includes the systems

and processes needed to develop, train,

and run LLMs, such as high-performance

computation (HPC) optimized for AI and

Deep Learning. Anticipated use cases

and their scalability inuence the overall

infrastructure decision.

The second layer is the data volume and

quality required. The amount of data needed

strongly depends on approaches to use and

customization of LLMs (e.g., pre-training vs.

ne-tuning), so data quality and data curation

are always crucial for LLM success. Firms can

invest in data curation and preprocessing

techniques such as data cleaning,

normalization, and augmentation, to enhance

data quality and consistency. Implementing

rigorous quality control measures during the

data collection and labeling process can also

improve data reliability.

1. Infrastructure

2. Data

3. LLM

4.

LLM

Application

Figure 1. The tech stack for large language models

2.1. Getting Prepared for Large Language Model Make-or-Buy

Decisions

2.1.1. Understanding the Large Language Model Tech Stack

LLM Strategy Guide

9

Besides the LLM tech stack, there are other

factors that should considered in make-

or-buy decisions for LLMs, including the

following:

1) Strategic value. Ensuring that the

deployment of LLMs is in line with the

overall corporate strategy is of utmost

importance in make-or-buy decisions.

The main reason for developing an

LLM in-house is that it can provide high

strategic value with high scalability and

value creation, enabling a rm to achieve

sustainable competitive advantage. By

building LLMs internally, organizations

can establish and maintain proprietary

knowledge and in-house expertise,

creating an intellectual asset. This

intellectual property can contribute

to long-term competitive advantage

as it becomes increasingly difcult for

competitors to replicate or imitate.

Competitive advantage can also be

achieved through LLM ne-tuning,

depending on the quality and value of the

training data. As ne-tuning approaches

are relatively inexpensive, this presents

a promising value-creation opportunity

for rms with data assets. In contrast,

when LLMs are developed and trained

externally, they are available to a wider

market and available to competitors,

meaning no sustainable competitive

advantage can be achieved. Moreover,

having in-house LLM development

capabilities fosters innovation and a

culture of continuous learning in that it

enables rms to stay at the forefront of

technological advancements.

2) Customization. Developing LLMs in-house

typically allows for greater customization,

meaning that LLMs can be tailored to

requirements and rm-specic use

cases. This point mostly holds for ne-

training models with unique internal

data. In comparison to off-the-shelf

products, customized LLMs allow for

greater exibility while also maintaining

full ownership (cf. Chapter 3.2 “Domain-

Specic Application of Large Language

Models in Industrial Scenarios” for more

technical information). While using

external non-customized LLMs will mean

lower costs, it is important to note that

potentially sensitive data must be shared

with the external partner.

3) Intellectual property (IP). LLMs, especially

those sourced from the external market,

are trained on extensive datasets that

may include copyrighted materials or

proprietary information. As a result, there

may be concerns regarding ownership

and usage rights of generated content.

Firms must therefore establish clear

policies and agreements that address

IP rights concerning LLM-generated

2.1.2. Understanding Key Factors in Large Language Model Make-or-Buy

Decisions

On the third layer is the LLM, which will

eventually form the basis for idiosyncratic

applications. LLMs can be open- or closed-

source (cf. Chapter 2.1.3. and Chapter

3.1.2.). Firms should aim to create synergies

between value-adding use cases as part of a

systematic make-or-buy strategy.

The fourth and top layer is LLM applications.

These applications can either build upon

end-to-end applications or rely on an

external third-party API. The make-or-buy

decision for the application layer depends on

the specics of the lower layers. For example,

if a rm lacks high-quality data, then “make” is

unlikely to be a feasible option here.

appliedAI

10

content. These policies should outline

ownership of content, licensing or usage

restrictions, and provisions for protecting

sensitive information. Collaborative efforts

involving third parties should ensure

that these issues are considered during

contracting. It should be noted, however,

that there is still a great deal of uncertainty

around IP rights stemming from content

created through generative AI.

4) Security. LLMs can require the

processing of extremely sensitive

business information. Firms should

conduct a thorough risk assessment

for each use case to ex-ante identify

and address potential security issues.

For highly sensitive data it is typically

recommended to host the LLM within a

rm insular network. If this is not possible,

collaborating with reputable external LLM

providers who adhere to stringent security

standards and are transparent about

their security practices is crucial. For data

falling under the GDPR, rms must ensure

that all data is stored and processed on

servers within Europe.

5) Costs. Developing LLMs in-house is a

costly endeavor. It rst requires signicant

investment in terms of hiring a highly-

skilled workforce, including ML engineers

and NLP specialists, who tend to

command high salaries. The development

process itself is then time-consuming and

resource-intensive, involving extensive

research, data collection, model training,

and iterative improvement cycles,

all of which demand considerable

computing power and infrastructure

investment. Ongoing maintenance,

updates, licenses, and support require

continuous investment to ensure optimal

performance and reliability. Last, it is

important to consider the opportunity

costs of allocating internal resources to

LLM development over core business

activities. While in-house development

offers several benets, it diverts attention

and resources from other strategic

initiatives and potentially delays time-

to-market, which can lead to increased

opportunity costs. Executives should

therefore carefully evaluate nancial

implications and weigh costs against

potential benets before deciding to

develop LLMs in-house. Fine-tuning may

be a more suitable approach in many

cases, with substantially lower costs.

To address high development costs,

organizations could explore ways to

streamline the labeling and development

cycles. Leveraging pre-existing labeled

datasets or partnering with external

data providers can reduce the need for

extensive manual labeling, saving time

and resources. Additionally, adopting

cloud-based solutions for data storage

and processing can offer scalability and

cost-efciency, enabling organizations

to handle large volumes of data more

effectively.

6) Talent. The scarcity of experienced

professionals in elds such as data

science, ML, and NLP often make it

difcult to establish a skilled in-house

team, especially for SMEs confronted

with resource constraints. In Europe,

the competition for top talent is erce,

with SMEs and large rms alike facing

recruitment difculties and talent

shortage. Additionally, extremely

rapid development in the eld of LLMs

necessitates continuous learning and

professional development, meaning

companies should make signicant

investments in training and upskilling their

workforce. Overcoming these hurdles

requires a strategic approach that can

include fostering partnerships with

academic institutions, collaborating with

external partners, offering competitive

salaries, and creating a stimulating work

environment that promotes innovation.

Firms already confronted with talent

scarcity may decide to source their LLM

solutions from the market to save direct

and indirect talent-related costs and to

utilize their talent resources for other

projects. In-house ne-tuning models

often constitute a middle course that

can strike a balance between acquiring

off-the-shelf products and developing

models from scratch.

7) Legal expertise. Developing LLMs in-house

requires rms to seek legal expertise

to navigate an increasingly complex

regulatory landscape. For instance,

the proposed EU AI Act, which focuses

on preventing harm to health, safety,

and fundamental human rights, would

involve a risk-based approach whereby AI

systems would be assigned to a risk class.

High-risk systems such as LLMs would

need to meet stricter requirements than

low-risk systems. Firms pursuing in-house

LLM Strategy Guide

11

development of LLMs must ensure they

follow all regulatory requirements and

thus obtain increasingly complex legal

expertise. If this is not available in-house,

or if rms want to reduce their general

liability, they may instead decide to buy

an LLM from the market and ensure the

provider is fully liable, i.e., that the specic

use case is in line with applicable laws and

regulations. Additionally, by considering

risk classication early in the decision-

making process and making timely

decisions, rms can avoid unnecessary

expenditures and undesired legal

consequences.

8) Data. Data is of utmost importance for LLM

performance. LLMs rely on vast amounts

of diverse data to understand language

patterns, enhance accuracy, and generate

coherent and appropriate responses.

However, biases inherent to the data

can pose challenges. For example, LLMs

might inadvertently learn and perpetuate

biases present in training data. Efforts

are being made to identify and mitigate

such biases. Diverse and inclusive training

data is crucial to ensure fairness and

reduce perpetuation or amplication of

existing biases, and regular monitoring

and user feedback are vital for detecting

and rectifying biases. By evaluating LLM

outputs and actively seeking user input,

developers can improve systems’ fairness

and mitigate biases. Data is equally

important for the process of ne-tuning

LLMs. By ne-tuning with domain-specic

data, LLMs can acquire specialized

knowledge and language patterns related

to the target task, enabling them to

generate responses that align with the

specic requirements of the use case.

Moreover, ne-tuning also helps address

biases and improve fairness in LLM

responses. By ne-tuning with datasets

that are explicitly designed to be diverse,

inclusive, and representative, developers

can reduce biases and ensure that the

LLM performs more equitably.

Q

What, in your opinion, is the most critical challenge or risk that

the European industry needs to address when adopting LLMs

for practical use cases?

A

“Among the most critical challenges for the industry when

adopting LLMs is the alignment with existing and upcoming

regulations, such as the EU AI Act. At the same time, this challenge is

also an opportunity to honor our customers' trust in their data with

our own standards and approach, and to get them on board with

the change. This alignment includes meeting data management

requirements, model evaluation, testing, monitoring, disclosure

of computational and energy requirements, and downstream

documentation. In terms of data privacy, companies from Europe

need to be cautious about sharing sensitive data with LLMs hosted

by foreign entities and comply with GDPR regulations. To address this

challenge, potential mitigation measures include developing robust

data anonymization techniques, implementing secure and private

computing methods, encouraging local LLM development to reduce

reliance on foreign models, and working with regulators to establish

clear guidelines and frameworks for the responsible use of AI.”

- Dr. Stephan Meyer, Head of Articial Intelligence, Munich Re Group

appliedAI

12

Make-or-buy decisions regarding LLMs

require thorough evaluation of available

options, which include open-source and

closed-source LLMs. Generally, the current

market environment is dominated by closed-

source, API-based LLMs, yet there is an ever-

growing number of open-source options. The

gure below provides an overview of notable

open- and closed-source LLMs released

between 2019 and June 2023 [1].

As Figure 2 shows, there is a wide range of

options for open-source and closed-source

LLMs

1

. Available open-source options tend to

allow for greater transparency and auditability

over their proprietary counterparts. With

open-source models, researchers and

developers can access the underlying

code, model architecture, and training data,

such that they can understand the inner

workings of the model and identify potential

biases or ethical concerns. Indeed, whereas

transparency is a crucial aspect of open-

source LLMs, closed-source LLMs are most

often a black box with opaque underlying

functioning. When a model's code and data

are made openly available, developers can

scrutinize and verify its behavior, ensuring

it aligns with desired ethical standards.

1 See also Chapter 3.1. for a more comprehensive analysis as well as detailed lists of available options from a

technical perspective, in particular for the trend of maximizing the benets by incorporating both large closed-

source LLMs and a combination of large and small, specialized open-source LLMs.

This transparency can also help to address

concerns about algorithmic biases and

discriminatory outputs. Researchers and

the wider community can work together to

identify and rectify these issues, leading to

fairer, more trustworthy language models.

Several prominent open-source LLM

initiatives have emerged, each making

signicant contributions to the eld. As well as

early versions of OpenAI's GPT (Generative

Pre-trained Transformer), an inuential

open-source LLM initiative is Hugging Face's

Transformers library, which provides a

comprehensive set of pre-trained models

including various architectures such as GPT,

BERT, and RoBERTa. The library also offers

tools and utilities for training, ne-tuning,

and deploying models, making it easier for

developers to leverage the power of LLMs

in their applications. The Transformers

library has gained widespread popularity

due to its user-friendly interface, extensive

documentation, and support from a vibrant

community. Several other open-source LLM

projects and libraries exist, such as Fairseq,

Tensor2Tensor, and AllenNLP.

2.1.3. Understanding (Dis-)advantages of Open- vs. Closed-source Large

Language Models

9) Trustworthiness. Trustworthiness is of

paramount importance when employing

LLMs. In-house development of LLMs

allows rms to have full control over the

entire process, enabling them to build

LLMs in line with their values and ethical

considerations. This control fosters

trustworthiness by ensuring that LLMs

are aligned with rms’ mission and

vision. Moreover, in-house development

enables transparency and explainability.

Firms can document and communicate

development methodologies, data

sources, and training processes, allowing

users to better understand and evaluate

LLM outputs. By mitigating biases and

ensuring fairness, rms can build trust

among users, assuring them that the

LLMs provide accurate and unbiased

information. Alternatively, when buying

LLMs from the market, especially from

established suppliers, rms may benet

from the fact that the acquired LLM has

undergone rigorous testing, evaluation,

and compliance checks to ensure it

meets industry standards and regulatory

requirements. Again, the ne-tuning of

models often constitutes a compromise

between trustworthiness and effort.

Together, these factors should be viewed

holistically and acted on as such, rather than

being addressed in isolation.

LLM Strategy Guide

13

Figure 2. Open-source and closed-source large language models with over 10 billion parameters

released between 2019 and June 2023 [1]

In turn, closed-source LLMs often leverage

signicant computational resources and

proprietary datasets during their training,

allowing them to perform at extremely

high levels on a range of language tasks.

The investment in infrastructure and data

acquisition made by companies can result in

LLMs that surpass the capabilities of open-

source models. However, rms are especially

concerned about data protection and

information security when closed-source

LLMs are running as software as a service

(API-based model), an approach increasingly

used by vendors. Customization of closed-

source models means that rms need to

transfer their often highly sensitive data to

the vendor for ne-tuning.

2.1.4. Understanding (Dis-)advantages of Fine-tuning vs. Pre-training Models

from Scratch

Another critical aspect in make-or-buy

decisions regarding LLMs relates to an in-

depth understanding of the advantages and

disadvantages of ne-tuning existing models

versus pre-training models from scratch,

specically considered from a business

perspective.

Fine-tuning pre-trained LLMs generally

incurs signicantly lower costs compared

to building them from scratch. Depending

on the underlying data structure and

volume, ne-tuning costs can be relatively

low, ranging from a few hundred to a few

thousand US dollars. In ne-tuning, a pre-

trained model is already available, eliminating

the need for resource-intensive pre-training

on vast amounts of data and large amounts

of computational power. This translates to

signicant savings in resources, time, and

electricity consumption.

Conversely, pre-training LLMs from scratch

involves substantial costs at various stages

of the process which combined can reach

millions of dollars. For example, the training

costs for OpenAI’s GPT-3 are estimated

to be $5 million, while models with more

appliedAI

14

training parameters are estimated to

exceed these costs. Pre-training LLMs from

scratch demands an enormous amount of

computational power, specialized hardware,

and extensive infrastructure, all of which add

heavy costs. Another consideration is that the

pre-training process can take weeks or even

months to complete, adding to the costs of

computational resources and electricity.

There are also notable differences in data

acquisition and annotation costs. Fine-

tuning LLMs typically requires a smaller

labeled dataset for the target task, which

can be less expensive to obtain, annotate,

and curate than the comprehensive and

diverse datasets required for pre-training

an LLM from scratch. The costs of acquiring

and labeling a large-scale dataset can be

substantial, and manipulation of such assets

requires substantial domain expertise and

signicant human effort.

Overall, then, there are usually cost

advantages to ne-tuning LLMs compared

to pre-training them from scratch. However,

it is essential to consider the specic

requirements of each use case, including

the scale of the target task, availability

of data, and potential risks, to determine

the most appropriate approach based on

available resources and objectives. Ultimately,

decisions about this question will depend on

the business cases and nancial resources

a rm is willing to invest. See also Chapter

3.2. Domain-Specic Application of Large

Language Models in Industrial Scenarios

for relevant discussions from a technical

perspective.

LLM Strategy Guide

15

2.2. Approaches for Large Language Model Make-or-Buy Decisions

After acknowledging the LLM tech stack

and relevant key factors and business

considerations, there are six generic

approaches that rms can follow when

making LLM make-or-buy decisions:

1) Buy end-to-end application without LLM

controllability

When evaluating use cases of low

strategic value and limited customization

requirements for both the application

and the LLM, acquiring a pre-built

end-to-end application is typically the

most convenient solution, with the LLM

operating merely as a hidden component.

Given the highly tailored nature of the LLM

to the application and its scope, explicit

customization and controllability are

unnecessary and likely not allowed by the

vendor.

2) Buy an application with limitedly

controllable LLM – Procure the application

including the LLM as a component with

some transparency and control

This approach of procuring an application

along with controllable LLMs applies to use

cases that demand minimal adjustments

or can be deployed immediately. It is

worth noting that in scenarios where

customization needs are low, it may be

less necessary to control the underlying

LLM and companies might instead focus

on adapting only the user layer to meet

their requirements. Nevertheless, case-

specic requirements concerning the

degree of customization, regulation, data

security/secrecy, intellectual property

(IP) concerns, and overall performance

should be carefully considered. Another

point of attention is the reusability of an

LLM across applications in the company

and how this might produce undesired

dependencies and vendor-locking

scenarios. This approach is only feasible in

cases of low data condentiality allowing

transfer to external providers.

3) Make application, buy controllable LLM

– Internal development of application on

top of procured LLMs via APIs, e.g., Azure

OpenAI Services

An alternative to the above approach

is to focus exclusively on the internal

development of the application while

sourcing and integrating externally

sourced pre-trained or ne-tuned LLMs.

This approach is particularly suitable

for use cases that demand medium to

high levels of LLM customization and

is especially relevant when internal

resources such as computing power,

capacity, or skills are not sufciently

available. Additionally, budget constraints

can also drive the decision to adopt this

strategy. However, as with approach 2,

considerations regarding customization,

regulation, data security/secrecy, and IP,

as well as overall performance and model

reusability, need to be carefully taken into

account, and vendors should be carefully

scrutinized.

4) Make application, ne-tune LLM – Internal

development of application and ne-tuning

of LLM based on procured or open-source

pre-trained LLMs

This approach involves utilizing existing

pre-trained LLM models, along with

specic ne-tuning frameworks or

services, and combining them with

internal development efforts to build

applications and ne-tune models using

internal data for targeted use cases. The

quantity and quality of open-source pre-

trained LLMs are continuously rising, but

the licenses of these pre-trained models

can impose signicant limitations on

their commercial use. For ne-tuning,

several providers such as AWS, Google,

NVIDIA, H2O, and others already offer such

services, and various open-source ne-

tuning services are already available. The

level of internal development required

depends on both the sophistication of the

ne-tuning components and the quality

of the underlying pre-trained LLM, as

well as the availability of in-house data.

While ne-tuning models is comparatively

inexpensive, data quality is often a major

bottleneck. Nevertheless, this approach

offers a viable option for achieving

sufcient customization and quality of

LLMs, while maintaining control over

internal data processing and LLM hosting.

This can become particularly important

in certain use cases, ensuring sustainable

competitive advantage.

appliedAI

16

5) Make application, pre-train LLM – Internal

development of application and pre-

training of LLM from scratch

This approach involves full end-to-end

development (“make”), building the

application itself as well as pre-training

LLMs in-house from scratch. The broader

the applicability of an LLM and the greater

the value it can generate, the better it

is to pursue the "make" approach. This

option is also advisable in highly sensitive

use cases where relying on externally

sourced models is not an option. Although

very costly, developing LLMs from scratch

might be the best option for achieving

optimal customization and quality, and

for ensuring a sustainable competitive

advantage.

6) Stop

If the use case holds limited strategic

value, it is advisable to assign resources to

use cases of higher strategic signicance.

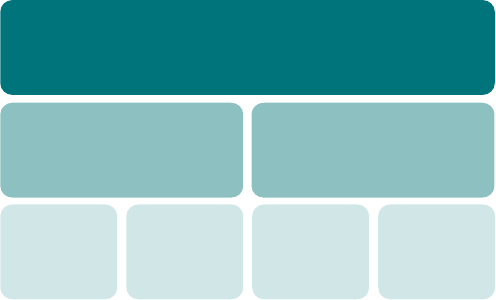

Figure 3 provides a guide of which

approach to use, organized by level of

strategic value of an application and the

degree of customization needed.

Q

What are your thoughts on the potential impact of large

language models in the semiconductor industry, and how do

you see that affecting your company?

A

“In the semiconductor industry there are main value potentials:

improving our processes and creating customer vue. One

area where this potential can be realised is in knowledge retrieval

throughout research and development and manufacturing

processes, leading to enhanced speed and stability, for example in

the case of equipment maintenance. This reduces our dependency

on specic experts with the right domain knowledge being present

24/7 to solve critical issues and helps us train new experts faster.

Moreover, by providing top-notch customer support for our highly

technical products, we can deliver a better customer experience

while increasing the scalability associated with such service.

Additionally, there is signicant room for improving productivity in

support functions, ranging from generating product documentation

to marketing and beyond -- lots of potential.”

- Simon-Pierre Genot, Senior Manager AI Strategy, Inneon Technologies

LLM Strategy Guide

17

Strategic Value

Degree of Customization

Buy an application with limitedly

controllable LLM

(Procure the application including LLM as a

component with some transparency and control)

Buy end-to-end application without

LLM controllability

Buying a ready-to-use application can be the most

convenient solution, depending on the use case,

with the LLM serving as a concealed component.

Stop

Given the application's limited value, it is advisable to

allocate resources to projects of greater strategic

significance.

Make application, fine-tune LLM

(Internal development of application and fine-tuning

of LLM based on procured or open-source

pre-trained LLMs)

PROS

CONS

• Immediate time to market

• No requirement of in house data

• Usual delivery models (SaaS vs. On-Prem) give

flexibility on in-house compute requirements

and pricing

• Low requirement of in house expertise

• Black-box LLM with limited transparency,

controllability

• No reusability of the LLM outside of application

• Any customization of the LLM by vendor

• Necessity to share sensitive data and

information for customization

• Vendor lock-in effect

• Risk of price changes

PROS

CONS

• Protection of sensitive data and IP

• Clear cost calculation / no model vendor lock-in

effect

• High transparency and control of robustness

• Increased time to market

• Potentially high compute power

• Requires moderate in-house AI expertise

• Requires moderate amount of training data

Make application, buy controllable LLM

(Internal development of application on top of procured LLMs controllable via

APIs, e.g., Azure OpenAI Services)

PROS

CONS

• Reduced time to market

• No to very little requirement of in-house

data and computation power

• Requires at least some in-house expertise

• Most generalized are commercial LLMs

• Black-box LLM with low transparency and

controllability

• Advanced customization (i.e. fine-tuning

or pre-training) by vendor only

• Necessity to share sensitive data for

customization

• Vendor lock-in effect

• Risk of price changes by vendors

Make application, pre-train LLM

(Internal development of application and pretraining

of LLM from scratch)

PROS

CONS

• Protection of sensitive data and IP

• Full transparency and control of the model

• Transparent costs

• No model vendor lock-in effect

• Very high development costs

• Long time to market

• Requires significant in-house AI expertise

• Requires compute power necessary

• Requires large amount of training data

LOW

MEDIUM

HIGH

LOW

MEDIUM

HIGH

Figure 3. Pros and cons of in-house LLM application development (“make or buy”)

appliedAI

18

3. Critical Techniques

and Trends in the Field of

Large Language Models:

From Landscape

to Domain-specic

Applications

3.1. Navigating the Landscape of Large Language Models in the

Generative AI Era

3.1.1. Key Techniques, Architectures, and Types of Data

LLMs are an integral part of the generative

AI era. They are complex systems that

can process natural language input and

generate human-like responses. Navigating

the landscape of LLMs in this era requires

an understanding of key techniques as

well as the types of data used in these

models. In this section, we will discuss some

of the fundamental aspects of LLMs that

enable them to function seamlessly, before

describing some key trends observed in this

fast-developing eld.

LLM Strategy Guide

19

The Fundamentals

Transformer as the Base Architecture that

Handles Contextual Meanings

One of the most popular techniques

used in LLMs is the transformer model

architecture, introduced by Vaswani et al. in

2017 [2]. Transformers are neural networks

that can process sequences of data such

as text while being able to handle long-

range dependencies and understand

context. They do this by implementing an

‘attention’ mechanism that allows the model

to process an entire input sequence all at

once and capture the relative importance

of each input token to every other token

in the context. This enables the LLM to

understand the complicated relationships

between words, phrases, etc., even when

they are far apart in the input sequence.

Furthermore, the transformer architecture

offers a key advantage over previous

recurrent neural network models in that it is

highly parallelizable, facilitating large-scale

training on distributed hardware. The basic

transformer architecture has been used or

adapted in some of the most powerful and

popular LLMs, such as GPT-3, T5, and BERT.

Pre-training as a Key Procedure to Equip the

Model with Fundamental Knowledge

Another key technique used in LLMs is pre-

training, which involves training a model on a

large corpus of text data before ne-tuning

it on a specic task. This technique has been

shown to improve the performance of LLMs

on a variety of downstream tasks such as

translating languages, answering questions,

and generating text. Pre-training can be

conducted using a variety of objectives

including language modeling, where the

model is trained to predict the next word in a

sequence, and masked language modeling,

where some of the input tokens are masked

and the model must predict their original

values.

Instruction Tuning & RLHF: Aligning with Human

Preference

Instruction tuning is a fundamental concept

in training LLMs. Early work focused on ne-

tuning LLMs on various publicly available NLP

datasets and evaluating their performance

on different NLP tasks. More recent work,

such as OpenAI's InstructGPT, has been

built on human-created instructions and

demonstrates success in processing diverse

user instructions [3] Subsequent works like

Alpaca and Vicuna have explored open-

domain instruction ne-tuning using open-

source LLMs. Alpaca, for example, used a

dataset of 50k instructions, while Vicuna

leveraged 70k user-shared conversations

from ShareGPT.com. These efforts have

advanced instruction tuning and its

applicability in real-world settings.

Another technique, Reinforcement Learning

from Human Feedback (RLHF), aims to

use methods from reinforcement learning

to optimize language models with human

feedback [4]. Its core training process

involves pre-training a language model,

training a reward model, and ne-tuning the

language model with reinforcement learning.

The reward model is calibrated with human

preferences and generates a scalar reward

that represents these preferences. While

RLHF is promising, to date it has notable

limitations such as the potential for models to

output factually inaccurate text.

Types of Data

LLMs are typically trained on extensive

datasets primarily composed of textual

material from web pages, books, and social

media. However, as will be explained in a

later section, they can also utilize data from

other sources as long as it can be converted

to a sequence of tokens with a known set of

‘vocabulary’. Hence LaTeX formulas, musical

notes, and programming languages like

appliedAI

20

Python, Java, and C++ may all be adopted as

training data [5]-[7]. This enables the model

to generate novel mathematical or physical

formulas, reason with them, compose

music, and generate code to address bugs

and enhance program efciency, thereby

streamlining the development process.

Additionally, LLMs can leverage SMILE or

SELFIES chemical structures for drug design,

DNA or protein sequences for predicting

protein structures, or genetic mutations

related to diseases [8]-[11].

The scope extends further to encompass

various other modalities like audio, video,

signal data (such as wireless network

signals or depth sensing signals) [12]-[16],

relational or graph database data (such as

stock prices or knowledge graphs) [17],

[18], as well as digital signatures and le

bytes (such as blockchain transactions or

image le bytes) [19],[20]. This huge range

of usable data sources allows the models to

perform tasks such as speech recognition,

action recognition, video summarization,

robotic movement planning, knowledge

graph completion, stock price prediction,

blockchain transaction, or wireless network

transmission anomaly detection, as well

as image classication. While training

models on diverse data types can pose

challenges related to pre-processing

and standardization, it offers signicant

benets as it can unlock new applications

and solutions across various domains. The

ability to process and generate sequential

data from multiple modalities expands the

potential impact and use cases of LLMs,

fostering innovation and problem-solving in

numerous elds (Figure 4).

Modality of data Source of data

Tex t

Image

Audio

Video

3D

Code

Genomics

Chemical

Structures

Webpages

(e.g. Wikipedia, Github

etc.)

Data base

(e.g. Financial data,

Virus, Drug)

Sensor Data

(e.g. Depth, Distance)

Books

Social Media

(e.g. Instagram, TikTok,

YouTube, Twitter etc.)

And More... And More...

Figure 4. Sample data modalities and data sources involved in recent large language models.

Note that both the types of data modalities and the types of data sources are continuously increasing.

LLM Strategy Guide

21

Large Language Models as Foundation Models

LLMs possess the remarkable ability to

generalize knowledge across diverse

contexts, aligning them closely with

the concept of foundation models

[21],[22]. Foundation models capture

relevant information as a versatile

"foundation" for various purposes,

distinguishing them from traditional

approaches. They demonstrate the

characteristic of emergence, with

behaviors implicitly induced rather than

explicitly constructed. LLMs excel in

solving diverse tasks that go beyond

their original language modeling

training [23],[24]. These tasks can

be accomplished just using natural

language prompts, without the need for

explicit training. This in-context learning

capability allows LLMs to perform

tasks such as machine translation,

arithmetic, code generation, answering

questions, and more [25],[26]. In a

zero-shot learning scenario, the model

relies solely on the task descriptions

given in the prompt [27]-[30], while in

a few-shot learning scenario, a small

number of correct answer samples are

incorporated into the prompts [31]-[33].

Meanwhile, the use of chain-of-thought

(CoT) prompting, which provides

step-by-step instructions to guide the

model's answer generation, has been

shown to boost the model's reasoning

capabilities and overall performance

[34]-[36]. These highlight the generality

and adaptability of LLMs as foundation

models.

Homogenization is another key

characteristic of foundation models and

refers to the unifying and consolidating

of methodologies across modeling

approaches, research elds, and

modalities [21]. For example, model

architectures such as BERT, RoBERTa,

GPT, and others have been adopted as

the base architecture for most state-

of-the-art NLP models. This trend

extends beyond the eld of natural

language processing, with similar

transformer-based approaches being

applied in diverse domains such as DNA

sequencing and chemical molecule

generation. In addition, based on similar

principles, foundation models may

be built across modalities. Multimodal

models, which combine data in the

form of texts, audio, images, etc., offer

a valuable fusion of information for

tasks spanning multiple modes. This

convergence of methodologies and

models has streamlined disparate

techniques, leveraging the power of

transformers as a core component.

Homogenization has facilitated cross-

eld research, enabling LLMs to excel

in diverse applications such as drug

discovery, robotic reasoning, and media

generation. Foundation models provide

a base of generalized knowledge that

transcends specic tasks and domains,

revolutionizing the generative AI

landscape.

To summarize, LLMs are powerful neural

network algorithms in the eld of natural

language processing. Key techniques used in

LLMs include transformer architecture, pre-

training, instruction tuning, and RLHF. LLMs are

trained on massive amounts of data gathered

from a huge range of sources and modalities.

As foundation models, they are procient at

generalizing knowledge from vast amounts of

text and showing zero- or few-shot learning

capabilities as well as impressive reasoning

skills, particularly when combined with

techniques like chain-of-thought prompting.

LLMs can accurately complete a wide range

of tasks including understanding language,

generating text, and handling diverse types

of sequences. Understanding the techniques,

architectures, and types of data used in LLMs

as well as their characteristics as foundation

models is essential for navigating the current

and future landscape of generative AI.

appliedAI

22

Beyond the Fundamentals: Key Trends That Shape the Future

In the ever-evolving realm of LLMs, several

key trends have emerged to resolve

previous inadequacies such as heavy costs,

hallucinations, and reasoning fallacies.

These limitations have posed considerable

challenges to the industrialization of

LLMs. Consequently, the research and

development related to these trends will play

a pivotal role in expanding LLM utilization.

The trends surpass foundational aspects and

provide fresh perspectives into the evolving

characteristics of LLMs, unlocking exciting

opportunities for exploration and innovation,

and laying the groundwork for future

advancements.

Efcient Model Architectural Design

A signicant recent advancement in LLM

research pertains to enhancing model

efciency. Efforts have been made to reduce

time and space complexities associated with

LLMs. One such innovation is Receptance

Weighted Key Value (RWKV), which optimizes

model architecture and resource utilization

without compromising performance [37].

Another notable trend relevant to model

architecture design regards techniques

that allow models to efciently handle

longer input sequences (e.g., LongNet [38],

Unlimiformer [39], mLongT5 [40]), thereby

enabling LLMs to process and understand

more comprehensive and context-rich

information at once.

Effective and Precise Dataset Creation

Another burgeoning area of focus is

the effective generation of training and

instruction tuning data, leveraging methods

such as WizardLM to evolve complex

instructions from simple ones, enhancing

the speed of data generation as well as

the diversity of the contents [41]. Other

approaches like MiniPile [42] or INGENIOUS

[43] aim to achieve competitive performance

with a small number of examples. Additionally,

the innovative approach of Domain

Reweighting with Minimax Optimization

(DoReMi) estimates the optimal proportion of

language from different domains in a dataset,

such that LLMs can better adapt to diverse

data sources and enhance their capacity for

generalization [44].

Reconsideration of Model Scaling Laws:

Bigger ≠ Better

The LLM eld has traditionally emphasized

a positive correlation between model

scale and performance improvement. Yet

recent studies challenge this notion by

presenting evidence of inverse scaling,

whereby increased model size leads to

worse task performance [45] (Figure 5). This

phenomenon arises due to factors including

undesirable patterns in the training data and

deviation from a pure next-word prediction

task. These ndings have sparked a shift in

understanding the behavior of larger-scale

models and have highlighted the need for

careful consideration of training objectives

and data selection. Relatedly, exploration

of smaller language models (SLMs) [46]–

[48] has demonstrated their efcacy in

specic tasks such as procedural planning

and domain-specic question-answering.

Approaches like PlaSma focus on equipping

SLMs with procedural knowledge and

counterfactual planning capabilities, enabling

them to rival or surpass the performance

of larger models [49]. Similarly, Dr. LLaMA

leverages LLMs to enhance SLMs through

generative data augmentation, yielding

improved performance in domain-specic

question answering tasks [50]. These

developments challenge the conventional

belief that bigger models are inherently

superior and highlight the importance of

carefully tailored data and objectives for

training language models. By adopting a

more nuanced understanding of model

scaling laws, researchers and practitioners

can harness the potential of smaller as

well as larger language models to meet

the demands of diverse applications and

domains.

Alternative Alignment Approaches

Another focus of current research is how best

to align LLMs with human preferences, with

the goal of improving model performance

and interaction quality. Traditional approaches

such as the aforementioned Reinforcement

Learning from Human Feedback (RLHF)

have relied on optimizing LLMs using reward

scores from a human-trained reward model

[3], [4]. These approaches have shown

effectiveness, but come with computational

complexity and heavy memory requirements.

Recent advancements introduce approaches

LLM Strategy Guide

23

Figure 5. Larger models may not necessarily perform better for tasks deviating from

next-word prediction. FLOPs correspond to the amount of computation consumed

during model pre-training, which correlates with model size as well as factors such

as training time or data quantity. Training FLOPs are used rather than model size

alone because computation is considered a better proxy for model performance

in the original paper[45].

such as Sequence Likelihood Calibration

with Human Feedback ([51]) and Reward

Ranking from Human Feedback (RRHF)

[52], which address earlier shortcomings by

calibrating a language model’s sequence

likelihood through ranking of desired versus

undesired outputs. Another method, termed

Less Is More for Alignment (LIMA) [53],

aims to achieve comparable performance

without reinforcement learning by more

efciently ne-tuning models on only 1,000

carefully curated prompts and responses.

These examples present a simpler and

more efcient approach to aligning LLM

output probabilities with human preferences,

facilitating integration of LLMs into practical

applications and enhancing their value.

Incorporation of Cognitively Inspired Memory

Mechanisms

Yet another emerging trend in this eld is

the incorporation of cognitively inspired

memory mechanisms into LLMs, which

takes inspiration from current understanding

of human memory functioning [54]–[56].

This development aims to improve training

efciency, generalization across tasks,

and long-term interaction capabilities.

For example, to address the forgetting

phenomenon, in which a model's

performance on previously completed

tasks deteriorates, researchers have

proposed Decision Transformers with

Memory (DT-Mem) which integrates an

internal working memory module into LLMs

[57]. By storing, blending, and retrieving

information for different tasks, this proposed

mechanism enhances training efciency

and generalization. Researchers are also

investigating deciencies of long-term

memory in LLMs, referring to models’

limited capacity to sustain interactions

over extended periods. One proposed

solution is MemoryBank, a novel memory

mechanism tailored for LLMs [58]. Inspired

by the Ebbinghaus Forgetting Curve theory,

MemoryBank enables LLMs to summon

relevant memories and continuously update

their memory based on time elapsed and

the signicance of the memory. By emulating

human memory storage mechanisms and

allowing for long-term memory retention,

LLMs could overcome the limitations of

forgetting and sustain meaningful longer-

term interactions.

Magnifying Multimodality

As described earlier, a clear trend in the

continuously evolving eld of LLMs is the

incorporation of more and more modalities

and the improvement of multimodal training

[14], [36], [59]–[61]. Researchers have

developed approaches like ImageBind, which

learns a joint embedding across multiple

appliedAI

24

modalities such as images, text, audio,

depth, thermal, and inertial measurement

unit data, making cross-modal retrieval,

composition, detection, and generation

possible [62]. ULIP-2, a multimodal pre-

training framework, addresses scalability

and comprehensiveness issues in gathering

multimodal data for 3D understanding by

leveraging LLMs to automatically generate

holistic language counterparts [63]. It has

achieved remarkable improvements in

zero-shot classication and real-world

benchmarks without manual annotation

efforts. Such advancements expand LLM

capabilities, enabling them to understand

and generate across multiple modalities and

perform complex tasks in diverse domains.

From Explainability to Tractability and

Controllability

Novel approaches have also been developed

to enhance the explainability, tractability,

and controllability of LLMs and relevant

applications [64]–[67]. For example, Control-

GPT leverages the precision of LLMs like

GPT-4 in generating code snippets for

text-to-image generation [68]. By querying

GPT-4 to write graph-generating codes and

using the generated sketches alongside

text instructions, Control-GPT enhances

instruction-following and greatly improves

the controllability of image generation.

Another approach, Backpacks, introduces

a neural architecture that combines strong

modeling performance with interpretability

and control [69]. Backpacks learn multiple

sense vectors for each word and represent a

word as a context-dependent combination

of sense vectors, allowing for interpretable

interventions to change the model's behavior.

Additionally, GeLaTo proposes using tractable

probabilistic models, such as distilled

hidden Markov models, to impose lexical

constraints in autoregressive text generation

[70]. GeLaTo achieves state-of-the-art

performance on constrained text generation

benchmarks, surpassing strong baselines.

Advances like these not only provide insights

into the workings of LLMs but also enable

greater control and customization, enhancing

their performance in computer vision and

text generation tasks.

Hallucination Fixes, Knowledge Augmentation,

Grounding, and Continual Learning

One of the most prominent trends in

recent research is the concerted effort to

tackle hallucination and factual inaccuracy,

two major stumbling blocks to LLM

industrialization [71]–[74]. Researchers have

pursued multiple approaches to tackle these

problems [75]–[86]. One approach involves

analyzing and mitigating self-contradictions

in LLM-generated text by designing

frameworks that constrain LLMs to generate

appropriate sentence pairs [87]. Another

aims to enhance the factual correctness

and veriability of LLMs by enabling them to

generate text with citations [88]. This involves

building benchmarks for citation evaluation

and developing metrics that correlate with

human judgment.

Additionally, researchers have introduced

frameworks that augment LLMs with

structured or graph knowledge bases

(‘grounding’) to improve factual correctness

and reduce hallucination. One approach,

Chain of Knowledge (CoK), incorporates

structured knowledge bases that provide

accurate facts and reduce hallucination

[89]. Another technique, Parametric

Knowledge Guiding (PKG) [84], equips LLMs

with a knowledge-guiding module that

accesses relevant knowledge at runtime

without modifying the model's parameters.

These advances in hallucination avoidance,

knowledge augmentation, grounding, and

continual learning contribute to improving

the reliability and accuracy of generated text

across domains and tasks.

Human-like Reasoning and Problem Solving

This trend focuses on enhancing the

reasoning ability of LLMs [90]–[97].

Researchers have introduced innovative

frameworks such as Tree of Thoughts

(ToT), which enable LLMs to explore and

strategically plan intermediate steps toward

problem-solving [98]. This approach

encourages LLMs to make deliberate

decisions, evaluate choices, and consider

multiple reasoning paths, rather than just

a single one. Another proposed method,

Self-Notes, allows LLMs to deviate from the

input context, enhancing context memory

LLM Strategy Guide

25

and enabling multi-step reasoning [99].

Additionally, OlaGPT introduces a framework

to simulate human cognitive abilities,

including attention, memory, reasoning, and

learning [100]. OlaGPT incorporates an active

learning mechanism to strengthen problem-

solving abilities by recording and referring

to previous mistakes and expert opinions.

These developments in reasoning abilities

pave the way for LLMs to tackle complex

problems more effectively, bridging the gaps

between their current capabilities and human

reasoning.

LLM-guided Articial General Intelligence

Researchers have also recently endeavored

to develop articial general intelligence

on top of LLMs [101]–[103]. Voyager, an

embodied lifelong learning agent powered

by LLMs, autonomously explores and acquires

skills in Minecraft without human intervention

[104]. It uses an automatic curriculum, an

ever-growing skill library, and an iterative

prompting mechanism to enhance its

abilities. Voyager demonstrates exceptional

prociency in Minecraft, outperforming

prior state-of-the-art methods on various

metrics. Another approach, LLMs as Tool

Makers (LATM), allows LLMs to create their

own reusable tools for problem-solving,

eliminating dependency on existing tools

[105]. LATM consists of two phases, tool

making and tool using, which together enable

LLMs to generate tools for different tasks

and achieve cost effectiveness. LATM has

been validated across complex reasoning

tasks. Additionally, Augmenting Autotelic

Agents with Large Language Models (LMA3)

introduces a language model augmented

autotelic agent that leverages a pre-trained

language model to represent, generate, and

learn diverse and abstract human-relevant

goals [106]. LMA3 demonstrates the ability

to learn a wide range of skills without hand-

coded goal representations or curricula in a

text-based environment. Such innovations

promote the development of articial

general intelligence by empowering LLMs to

autonomously acquire skills, create tools, and

pursue diverse goals.

appliedAI

26

In the landscape of LLMs, there are several

major closed-source (often proprietary)

models and a growing number of open-

source alternatives that offer powerful

capabilities for various natural language

processing tasks. These models have been

developed by leading industry players, open-

source developers, and research institutions,

and they continue to push the boundaries of

what LLMs can achieve. In this section, we

will explore some prominent closed-source

models and the growing area of open-source

alternatives.

Closed-source Models

Prior to GPT-3, most LLMs were openly

available. However, with GPT-3 and

similar models that excel in next word

prediction, there has been a shift towards

proprietary closed-source models. These

are predominantly developed by major

industry players such as OpenAI, Google, and

Microsoft. Table 1 presents a selected list of

these models.

ChatGPT is often considered a service

rather than a standalone model as it

incorporates GPT-3.5 or GPT-4 (for the Plus

version). Likewise, Google's experimental

conversational AI service Bard initially utilized

a lightweight and optimized version of LaMDA

(Language Model for Dialogue Application)

but later transitioned to a more advanced

language model called PaLM 2. Bing Chat,

powered by a customized version of OpenAI's

ChatGPT, integrates Microsoft's search

engine to deliver human-like conversational

responses and improve overall user

experience. Another commercial chatbot,

ERNIE Bot, is built upon Ernie 3.0-Titan. These

conversational AI services, not listed in Table

1, build upon proprietary closed-source

models to provide contextually relevant

conversations and deliver engaging user

experience.

Country Developer & Provider Model Parameters Release

US OpenAI GPT-3 175B Jun 2020

US OpenAI InstructGPT 1.3B, 6B, 175B Jan 2022

US OpenAI GPT-3.5 175B Mar 2022

US OpenAI GPT-4 Unknown Mar 2023

US Microsoft phi-1 1.3B Jun 2023

US Google LaMDA 137B May 2021

US Google GLaM 1.2T Dec 2021

US Google PaLM 540B Apr 2022

US Google PaLM-E 562B Mar 2023

US Google PaLM-2 340B May 2023

US/UK Google DeepMind Gopher 280B Dec 2021

US/UK Google DeepMind Chinchilla 70B Mar 2022

US Amazon AlexaTM 20B Aug 2022

US NVIDIA Megatron Turing NLG 530B Oct 2021

US Bloomberg BloombergGPT 50B Mar 2023

US Anthropic Claude 52B Dec 2021

US Anthropic Claude 2 Unknown Jul 2023

US Cohere Cohere Unknown Nov 2021

China

Baidu & Peng Cheng

Lab.

ERNIE 3.0 Titan 260B Dec 2021

China

Beijing Academy of

Articial Intelligence

Wu Dao 2.0 175B May 2021

China Huawei PanGu-Σ 1T Mar 2023

Israel AI21 Jurassic-1 178B Sept 2021

Israel AI21 Jurassic-2 Unknow Mar 2023

South Korea Naver Corp HyperCLOVA 204B May 2021

Germany Aleph Alpha Luminous 13B, 30B, 70B Nov 2021

Table 1. Selected list of closed-source models after 2020.

3.1.2. Major Closed-source Models and Open-source Alternatives

LLM Strategy Guide

27

Open-Source Alternatives

In the rst half of 2023 especially, there has

been a surge of open-source LLMs, paving

the way for fresh avenues of innovation and

collaboration. In the early stages of this surge,

the open-source landscape consisted mostly

of research-only models such as LLaMA,

Alpaca, and their subsequent iterations,

including Dolly 1.0, GPT4All, GALPACA,

Baize, Koala, Vicuna, LLaVA, WizardLM,

StableVicuna, ImageBind, etc. These models

allowed researchers to study and explore the

capabilities and potentials of LLMs (Figure 6

and Table 2).

Country Developer & Provider Model Parameters Release

US Meta AI OPT-175B 12M-175B May 2022

US Meta AI LLaMA 7B-65B Feb 2023

US Meta AI ImageBind Unknown May 2023

US, China Microsoft, Peking U. WizardLM 7B-65B Apr 2023

US Microsoft Orca 13B Jun 2023

US Stanford University Alpaca 7B Mar 2023

US

Georgia Tech Research

Institute

GALPACA 6.7B, 30B Apr 2023

US, China

University of California, San

Diego, Sun Yat-sen University,

Microsoft Research Asia

Baize 7B-30B Apr 2023

US UC Berkeley Koala 13B Apr 2023

US

University of Wisconsin-

Madison, Microsoft Research,

Columbia University

LLaVA 13B Apr 2023

US Databricks Dolly 1.0 6B Mar 2023

US Nomic AI GPT4All 7B-13B Mar 2023

US LMSYS Org Vicuna 13B Apr 2023

US CarperAI StableVicuna 13B Apr 2023

Singapore

National University of

Singapore

Goat 7B May 2023

France BigScience Bloom 176B Nov 2022

Various OpenOrca OpenOrca-Preview1-13B 13B Jul 2023

GPT-4

BloombergGPT

PanGu-Σ

Palm-E

Jurassic-2

PaLM 2 phi-1 Glaude 2

Alpaca

Alpaca-LoRA

LLaMA LLaVA

WizardLM

StableVicuna

Dolly 1.0

GPT4All

GALPACA

Baize

Koala

Vicuna

ImageBind Goat

Orca

Xgen-7B-8K-

Inst

OpenOrca-

Preview1-13B

CodeGen2.5-

7B-instruct

Flan-UL2

OpenChatKit

OpenAssistant

StableLM

MOSS

h2ogpt

FastChat-T5

Cerebras-

GPT

OpenLLaMA

OpenAlpaca

replit-code

StarCoder 15B

StarChat

Alpha

MPT-7B

RedPajama-

INCITE

Dlite V2

RWKV

Falcon-40B

CodeT5+

baichuan-7B

OpenLLaMA-

13B

MPT-30B

Xgen-7B-4K/

8K-Base

CodeGen2.5-

7B-mono/multi

Llama 2

StarCoder

1/3/7B

Closed-Source

Open Source

- Research

Open Source

- Commercial

Jul 15Jul 1Jun 15Jun 1May 15May 1Apr 15Apr 1Mar 15Mar 1Feb 15Feb 1

Figure 6. Major large language models released between February and July 2023

Table 2. Selected list of open-source non-commercial models.

appliedAI

28

Country Developer & Provider Model Parameters Release

US EleutherAI GPT-J-6B 6B Jun 2021

US EleutherAI GPT-NeoX-20B 20B Apr 2022

US Google UL2 20B May 2022

US Google Flan T5 80M-11B Oct 2022

US Google Flan UL2 20B Mar 2023

US Cerebras Cerebras-GPT 111M-13B Mar 2023

US Nomic AI GPT4All-J 6B Apr 2023

US EleutherAI Pythia 70M-12B Apr 2023

US Databricks Dolly 2.0 3B-12B Apr 2023

US H2O.ai h2oGPT 12B Apr 2023

US LMSYS Org FastChat-T5 3B Apr 2023

US AI Squared Dlite V2 124M-1.5B May 2023

US, Spain,

UK

RWKV Foundation, EleutherAI,

University of Barcelona, Charm

Therapeutics, Ohio State University

RWKV 169M-14B May 2023

US MosaicML MPT-7B, 30B 7B, 30B May-Jun 2023

US Together RedPajama-INCITE 3B, 7B May 2023

US OpenLM Research, Stability AI OpenLLaMA 3B, 7B, 13B May-Jun 2023

US Meta AI Llama 2 7B-70B Jul 2023

UK Stability AI StableLM-Alpha 3B-65B Apr 2023

Germany LAION AI

Open Assistant

(Pythia family)

12B Apr 2023

UAE Technology Innovation Institute Falcon 7B, 40B May 2023

China Baichuan baichuan-7B 7B Jun 2023

Table 3. Selected list of open-source large language models that allow potential commercial usage.

The open-source landscape has expanded

considerably since then, with many models

and datasets emerging that allow potential

commercial usage

1.

Notable among these

models are Cerebras-GPT, Pythia, Dolly

2.0, GPT4All-J, OpenAssistant, StableLM,